We crave knowledge. Only ostriches stick their heads in the sand. Right? Well, then, how can we explain the following?

“Don’t tell me how the movie ends.”

“Don’t tell me if I have the gene for that disease.”

“Don’t tell me my startup is likely to fail.”

“Don’t tell me if my spouse cheated on me.”

“Don’t tell me how they slaughter veal calves.”

In many cases, let’s face it, we prefer not to know things, contrary to simple economic theories that say more information is always better. We tell one another that ignorance is bliss. We reject too much information.

We even structure our societies to exclude knowledge for certain purposes. Courts have inadmissible evidence. Employers have inadmissible questions for applicants. For 17 years, the military’s policy on homosexuality was “Don’t ask, don’t tell.” More recently, many colleges stopped asking for SAT and ACT scores, although some have started asking for them again.

必ず先に磁気券

Digital poverty

Digital poverty impacts poverty

If we’re to solve poverty, we must address digital exclusion. Whether it’s accessing education, the social security system, job opportunities or cheaper gas and electricity, it’s a core part of how we live.

The growing divide

With each new development in technology, more people are left behind. This also makes existing inequities around race, gender, age, ability and income worse.

Words (John McWhorter)

The fit between words and meanings is much fuzzier and more unstable than we are led to suppose by the static majesty of the dictionary and its tidy definitions. What a word means today is a Polaroid snapshot of its lexical life, long-lived and frequently under transformation.

The reason begins with the nature of concepts rather than the words that express them. Concepts shade into one another the way colors do. For example, to be foolish is a form of being weak; one kind of weakness is to be distracted by idle fastidiousness rather than focusing on substance; but fastidiousness is also a way of being careful or observant, of which one form is being socially agreeable — as in “nice.” I raise these examples because the word “nice” actually did describe each of those concepts over the course of several centuries, like a torch passed on from hand to hand in sequence. In 1250, people were called nice when they were dimwitted. Only linguists have any reason to know the circuitous path that took us from that definition to “kind.”

Crucially, this is not some isolated instance, but a typical one. It is why “silly” once meant “blessed,” “obnoxious” once meant “subject to harm,” “generous” once meant “of noble status,” and today we speak of “heading” out from a party and “heading” up the coast without for a minute thinking it has anything to do with our noggins.

藤嶽明信

境界・境にはいくつかの意味があるが、今回は六境<色境・声境・香境・味境・触境・法境>を取り上げることにする。六境とは人間の認識対象のことである。すなわち人が眼・耳・鼻・舌・身・こころによって感覚し認識する六つの対象のことを六境という。

これらの六境は自己存在と密接に関係していると説かれる。たとえば自分好みの音楽は心地よい音として聞こえるが、他の人にとっては必ずしもそうではない。反対に、自分には苦手な香りでも、それを好む人もいる。

このように認識対象は、自己を離れて単に客観的に存在しているものではなく、自己と密接に関係して存在しているのである。しかし人はそのことに気付かず、物事は自分を離れて客観的に存在していると思い、その上で好ましい対象は受け入れようとし、好ましくない対象は排除しようとする。このように見てくると、国や私有地の境界線だけではなく、様々な物事に種々の境界線を当然のように引いて、受け入れや排除を行っている人間の姿が浮かび上がってくるのではなかろうか。

そして自分がする物事の受け入れや排除が線引きであると同様に、他者がなす受け入れや排除に対して自明のごとくに沸き起こる称賛や非難、これもまた自己による線引きであることを知るべきであろう。なぜなら、万事は自己存在と密接に関わりながら、褒めるべきこととして思えたり、また詰るべきこととして思えたりしているからである。

私たちが当たり前のように認識していることは、今一度根本から問い直す必要があるのではないか。境界という仏教語は、そのことを教えてくれている。

何も知らない

人は何も知らない

知らないのに

知ったつもりになって

知っていると信じている

真実なんていうものは

どこにもないというのに

自分が知っていると思っていることが

真実だと思い込んでいる

人には何も見えない

見えなくても

見えた気になって

見えたと信じている

事実というものが

どんなにあやふやなものか知らずに

自分が見たと思っていることを

事実だと思い込んでいる

僕は何も知らない

君も何も知らない

幸せを知る

逃げ続けた後で

逃げないでいい幸せを

知る

拒まれ続けた後で

受け入れられる幸せを

知る

逃げるのも

拒まれるのも

嫌だけれど

嫌なことがなければ

幸せを知ることは

ない

なんでも知っている人

なんでも知っている人ではなく

なんにも知らないと思える人がいい

なんでも知っていて なにも考えない人より

なにも知らないで たくさん考える人のほうがいい

なんでも知っている人は

どんなことも もっともらしく説明し

知らないことも 知っているかのように話し

答えのないことにまで 答えを与える

なにかを知っている人よりも

なにかを好きな人のほうがいい

なにかを真面目にやっている人よりも

なにかを楽しんでいる人のほうがいい

言葉を巧みに操る人でなく

言いたいことも言えないような人がいい

頭のいい人より

心がいい人のほうがいい

自分の境遇を怨んでいる人ではなく

状況を変えていける人がいい

打ちのめされている人より

微笑んでいる人のほうがいい

君がいつまでも微笑んでいるといい

何をも知らない

石牟礼道子を

花ふぶき生死のはては知らざりき

で覚えていたり

寺山修司を

海を知らぬ少女の前に麦藁帽のわれは両手を広げていたり

で覚えていたり

山本周五郎を

心に傷をもたない人間がつまらないように、あやまちのない人生は味気ないものだ

で覚えていたり

Albert Camus を

Tout homme est un criminel qui s’ignore.

で覚えていたり

William Shakespeare を

The fool doth think he is wise, but the wise man knows himself to be a fool.

で覚えていたりする

石牟礼道子も

寺山修司も

山本周五郎も

Albert Camus も

William Shakespeare も

膨大な文章を残しているのに

なぜか その人たちを

短い言葉で覚えていようとする

短い言葉は

その人たちの

何をも

表してはいない

僕は

その人たちの

何をも

知らない

僕は

何も

知らない

知る

知ることなしに幸せはないという

でも知るのが不幸せなときもある

たとえば幸せを知るのは不幸せで

だから幸せは知らないほうがいいという

幸せなのだと知ることは

幸せでなくなるのを知ることではないか

幸せはいつまでも続かないから

今日の幸せは明日の不幸せなのではないか

知るというのはダメになることだという

たとえば 幸せを知れば幸せはダメになる

知るというのは消えてしまうことともいう

たとえば 幸せを知れば幸せは消えてしまう

幸せだけではない

知ればすべてがダメになり

知ればすべてが消えてしまう

だから 何も知らないほうがいい

だったら

知らなければいいじゃないか

いやいや

知らないわけにはいかない

知るのがダメになることだとしても

知るのが消えてしまうことだとしても

やっぱり知るほうがいい

知らないより知るほうがいい

誰の知識

インターネットで検索をすると

アルゴリズムだかなにかに誘導されて

偶然なのか必然なのか

誰かが載せた情報にたどり着く

なぜそこにたどり着いたのかもわからずに

目に入った情報を信じてしまえば

誘導する情報に騙され

金儲けの情報に足をすくわれる

文字や数字は編集されていて

画像や映像は加工されていて

どの情報も手垢とシミだらけ

どこかに無垢な情報はないものか

検索を続けていたら

もっともらしい情報が嘲笑う

人間だって手垢とシミだらけじゃないか

無垢な人なんてどこにもいないじゃないか

正しいか間違っているかもわからない

不完全な情報や不確かな情報から

どんな判断をしたとしても

それが正しいわけがない

自分ひとりの知識だと思っていても

その知識はじつは誰かの知識で

理解した気になってはいても

理解がなにかも理解していない

本を買えば本を読んだつもり

映画館に入れば映画を見たつもり

旅行に行けば景色を眺めたつもり

つもりがないのは つもりじゃない

他人から得た考えと自分の経験が重なって

自分の考えができあがったというけれど

自分の経験のほとんどは他人との経験で

自分の考えは結局は他人の考えでしかない

自分のものと思っている知識も

他人の知識の断片のコピーばかり

この人の知識もあの人の知識も

他人の知識の断片のコピーばかり

自分の知識は自分の知識

他人の知識も自分の知識

本のなかの知識も自分の知識

社会の知識も自分の知識

他人の知識がなくて

本のなかの知識もなくて

社会の知識もなかったら

自分だけの知識はとても小さい

知的所有権とかいいながら

知を自分のものにして

社会の知を独り占めして

カネを稼いでいる人がいる

自分 自分と言わないで

自分だけを頼りにしないで

自分は全体の一部なのだと

謙虚になってみよう

そんなことを考えていたら

人間が植物に見えてきた

人間が種として生き延びるためには

ひとりひとりはどうでもいいのだ

そう思った途端

自分 自分と言いたくなった

人間の種なんかどうでもいい

僕は僕でやっていく

人間全部のために生きたりしない

自分のためだけに生きる

人間全部のことなんか思わない

君のことだけを思う

なにもわかっていない

わかっていると わかっている

わかっていないと わかっている

わかっていないと わかっていない

わかっていると わかっていない

知っていることを 知っている

知らないことを 知っている

知らないことを 知らない

知っていることを 知らない

知らないのに 知ってるつもりの無知

知らないことを 知らないままでいる無知

知ったことを 知らないという無知

知ったことから 生じる無知

知っているのに がっかりしてしまう

知らないのに 知ったかぶりをする

知らないのに 知っていると信じる

知っているのに 知らないふりをする

わかっていると 君が言う

わかっていないと 僕が言う

わかっていないと 君が言う

わかっていると 僕が言う

名前

クロワッサンの中にチョコレートが入っているパンを

フランスの南西部では chocolatine と呼び

残りの地方では pain au chocolat と呼ぶ

パンの切れ端のことを

フランスの南半分では quignon と言い

北半分では croûton と言う

そんなどうでもいいことで

時間が過ぎていく

名前がどうでも なにも変わらない

そんなことは知っている

名前なんて 言葉が違えば違うのだ

それも わかっている

それなのに僕は 名前にこだわる

名前なんか どうでもいいのに

なぜか名前が気になる

違う場所では違う名前で呼ばれる草

まったく同じ草なのに

違う地方に行けば違う名前で呼ばれ

違う草だと思われている

違う場所では違う名前で呼ばれる花

全然違う花なのに

似たような名前が付けられて

同種だと間違われたりする

違う場所では違う名前で呼ばれる木

まったく違う木なのに

同じ名前で呼ばれているので

同じ木だと勘違いされている

中国から日本に漢字がやって来た時に

もうすでに 木には名前があって

その木の漢字を知った当時の日本人は

前からあった読みをその漢字に当てた

だからこんがらがるのは当たり前で

間違えるのも当然で

橡はトチノキだと栃とも書いて

橡がクヌギだと櫟とか椚とも書き

橡はツルバミだったり

櫟はイチイだったり

紛らわしいこと この上ない

科はシナで榀とも書くが

榀という字は漢字ではなくて

日本でできた国字だという

なにがなんだかわからないから

ぜんぶカタカナで書けばいい

トチノキ クヌギ ツルバミ イチイ シナ で

いいではないか

そんなことを呑気に言っていたら

あっという間に世界中が近くなり

知らないところから知らない木が

人に運ばれてやってきた

名前はみんなカタカナで

どの木が 日本のどの木だと

専門家は忙しくしたけれど

お互いの連絡もつかぬまま

たくさんの勘違いと間違いが生まれ

でも

まあいいや

という人たちのおかげで

ゆるやかな気分で木の名前を呼ぶことができる

遠い国の植物園で

名前の札を食い入るように見ても

草も花も木も

なんて呼ぶのか わからない

知っているはずの草や花や木まで

名前を失ってゆく

隣の国の植物園で

名前の札に書かれた漢字をずっと見ていたとしても

草も花も木も

呼び方が違いすぎて 混乱してしまう

知っているはずの草や花や木の名前が

違っているのではないかと疑い出す

草や花や木は

名前はどうでも生きていて

その美しさは

名前で変わる わけではない

君の名前がどんなでも

君をなんと呼ぼうとも

君は君

美しい

吾唯足知

はんこを買った

吾唯足知

と書いてあった

とはいっても実際には

口を真ん中にして

五隹止矢

と書いてあったのだが

あれれ これ

どこかで見たことがある

と思って考えを巡らせたら

そうだ

龍安寺の茶室の裏にあった石の鉢だ

と情景が蘇ってきた

その言葉が龍安寺の手水鉢に彫られていて

それを僕が覚えている

その四文字がはんこに彫ってあり

それを僕が買う

吾唯足知は

欲を戒める言葉として

禅寺でよく使われる

金持ちでも満足しない人がいる

貧しくても感謝する人がいる

ただ十分だということを知る

何が十分なのかを知る

ただ満足することを学ぶ

満足を知る

そう

あるものに満足しよう

あるものに感謝しよう

欲望には限りがない

君がそこにいる

それだけでいい

吾唯足知は

そういうことなのだろう

裏で操る人たち

アラブの春で立ち上がった貧乏な学生は

最新型の上位機種の Mac を持ち歩き

あるはずのない Wifi にアクセスし

ブロックされている Facebook を使いこなす

貧乏な学生に Mac を買ってあげたのは誰?

Wifi の使える環境を用意したのは誰?

Facebook のブロックを解除したのは誰?

アラブの春をプロデュースしたのは誰なのか

フランスの大統領がアフリカの国を訪れたら

その国の人たちはみんなフランスが好きだから

空港から街までの道は人で埋め尽くされ

大統領が通るとみんなでフランスの国旗を振った

空港から街までの道に人を集めたのは誰?

国旗を集まった人の数だけ用意したのは誰?

国旗を配って振り方を指導したのは誰?

大統領の訪問をプロデュースしたのは誰なのか

パキスタンの少女がピストルで撃たれ

瀕死の重傷を負ったけれど命はとりとめ

イギリスに移送されて手術を受け快復し

国連で演説してノーベル賞を受賞した

少女を国連に連れて行ったのは誰?

国連での素晴らしい演説を用意したのは誰?

ノーベル賞がもらえるよう交渉したのは誰?

マララブランドを作り出したのは誰なのか

私たちのまわりはわからないことばかり

グレタという環境活動家をプロデュースしたのは誰?

すべての交差点の監視カメラを見ていいのは誰?

都合の悪いニュースを止めるのは誰?

誰かの居所を知っているのは誰?

誰かの考えを知っているのは誰?

誰かの思いを知っているのは誰?

誰かのことをほんとうに知っているのは誰なのか

ジョージ・オーウェルが『1984年』で書き

竹宮惠子が『地球へ…』で描いた監視社会は

より巧妙なかたちになって私たちにやってきた

普通の人と普通の人と普通の人と普通の人とが

繋がり 共鳴し 理解し合い 共感し 同調し

みんなで空気を読んで 忖度して 自粛する

それがどこかでプログラムされたものなのか

それとも自然発生したものなのか

もう誰にもわからない

Cee Vinny

What If We’re All Wrong?

Humans don’t have a great track record of ‘knowing it all’ …

Steven Sloman, Philip Fernbach

We’ve seen that people are surprisingly ignorant, more ignorant than they think. We’ve also seen that the world is complex, even more complex than one might have thought. So why aren’t we overwhelmed by this complexity if we’re so ignorant? How can we get around, sound knowledgeable, and take ourselves seriously while understanding only a tiny fraction of what there is to know?

The answer is that we do so by living a lie. We ignore complexity by overestimating how much we know about how things work, by living life in the belief that we know how things work even when we don’t. We tell ourselves that we understand what’s going on, that our opinions are justified by our knowledge, and that our actions are grounded in justified beliefs even though they are not. We tolerate complexity by failing to recognize it. That’s the illusion of understanding.

与芝真彰

知識を深めただけでは、死は理解できるものではない。

死もまた別れの延長であり、特別なことではない。そう考えてみると気が楽になる。

杉本栄子

知らんちゅうことは、罪ぞ。

芳林堂書店高田馬場店

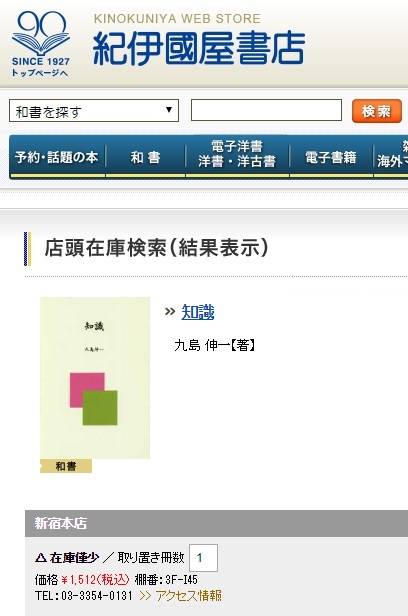

紀伊國屋書店

honto

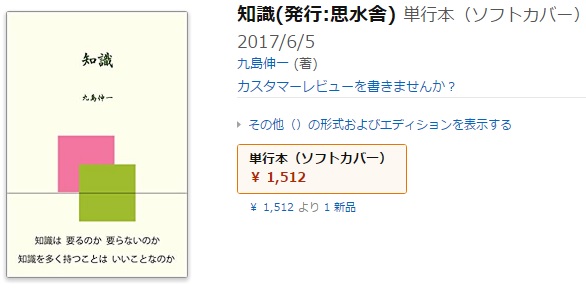

九島伸一

與芝眞彰

「慈悲」とは、わけ隔てなく他者の痛みを自分のもののように感じ分かち合い、癒そうという心を意味します。わが子、身内、恋人など、人間はつい身びいきをしてしまうものですが、身近な人だけでなく、もっと広い視点で他者に良くしようという志です。

「慈悲」だけでは、いくら他者の力になろうとしても、悲しむ患者さんやご家族に気持ちが引っ張られてしまい、一緒に動揺して右往左往してしまいます。結果として、患者さん本人に適切な対応ができなかったり、他の患者さんの危機に気づかなかったりする危険性もあります。

「智慧」は、仏教の言葉で、一般的な「知恵」より深く、普遍的な意味を持っています。単なる「知識」(今ではインターネットで簡単に手に入ります)はもちろん、「法学」「倫理」、一般的な「生活の知恵」なども、時代とともに移り変わり、また各家庭や地方によって様々なしきたりがあり、これも普遍的とは言いがたいものです。どんな時代でも、どんな立場の人にも通じるものの道理、ひいては宇宙の真理と言えるものが「智慧」です。

「智慧」だけでは、人がいつか亡くなるのは必定のことだと分かっていたとしても、だからこそ辛くて悲しいのだ、別れ難いのだという、患者さんやご家族の気持ちに気づかない恐れがあります。他者の気持ちに寄り添うことは、時に長く困難な道のりですが、大切な人だからこそ生まれる人の情を無視してしまうことは論外です。

アマゾン

思水舎

インターネットで探せば

インターネットで探せば

何でもすぐに見つかる

だからもう知識は要らない

そう言う人がいる

知識格差の時代が来て

知識がなければ

うまく生きてはいけない

そう言う人もいる

知識は 要るのか 要らないのか

知識は再分配できるのか

知識はビジネスになるのか

知識はどこにあるのか

知識は誰のものなのか

なぜ私たちは知識を求めるのか

限られた時間のなかで

どれだけの知識を

持てばいいのか

そもそもどんな知識を

持てばいいのか

知識は管理できるのか

知識は共有できるのか

普遍的な知識などというものが

本当にあるのだろうか

知識を多く持つことは

いいことなのか

ほんとうに知識は

いいものなのか

そんな疑問について

読者と一緒に考える

Casey Williams

People who produce facts — scientists, reporters, witnesses — do so from a particular social position (maybe they’re white, male and live in America) that influences how they perceive, interpret and judge the world. They rely on non-neutral methods (microscopes, cameras, eyeballs) and use non-neutral symbols (words, numbers, images) to communicate facts to people who receive, interpret and deploy them from their own social positions.

Call it what you want: relativism, constructivism, deconstruction, postmodernism, critique. The idea is the same: Truth is not found, but made, and making truth means exercising power.

The reductive version is simpler and easier to abuse: Fact is fiction, and anything goes.

Bruno Latour

While we spent years trying to detect the real prejudices hidden behind the appearance of objective statements, do we now have to reveal the real objective and incontrovertible facts hidden behind the illusion of prejudices? And yet entire Ph.D. programs are still running to make sure that good American kids are learning the hard way that facts are made up, that there is no such thing as natural, unmediated, unbiased access to truth, that we are always prisoners of language, that we always speak from a particular standpoint, and so on, while dangerous extremists are using the very same argument of social construction to destroy hard-won evidence that could save our lives. Was I wrong to participate in the invention of this field known as science studies? Is it enough to say that we did not really mean what we said? Why does it burn my tongue to say that global warming is a fact whether you like it or not? Why can’t I simply say that the argument is closed for good?

Why Has Critique Run out of Steam? From Matters of Fact to Matters of Concern (PDF file)

Nicolas Matyjasik

Le monde a changé. Le monde de la recherche aussi. Le transfert de connaissances dans la mise en œuvre des politiques publiques est l’enjeu d’une action politique de gauche. Unir la démocratie, la connaissance et des politiques publiques robustes : c’est le pari de l’intelligence collective et de la politique des idées que porte Benoît Hamon, un renouvellement du logiciel de gauche. Le cœur des idées bat encore, et fort !

外山滋比古

- 学校で我々は知識を得て、生活に便利なことを覚えますが、そのために、自分らしく生きることや個性などを失ってしまう危険がある。

- 既存の研究や文献、歴史から知識を得れば識者とみなされ、ある程度の仕事をしたことになる。でも、もともとある考えを下敷きに、知識を修正していくだけの仕事はつまらない。

- 今まで重要視されていた、知識量や記憶力では、人工知能にはかなわない。そうなったとき、過去の情報ではなく、現在形あるいは未来形の、独自で新しい思考こそ必要となる。

- 古い知識が詰まった本なんか読まなくていい。自分たちの生活から出てくる様々な疑問やふと気づいた不思議など、思いつくことを皆で話す。そうしたとき、個人では到達しない考えに辿り着けるかもしれない。

- 現代の人間の欠点は、個人的であることだ。だから普遍性が生まれないのだが、衆知まではいかなくても、最低三人ぐらい集まって話す。昔の人は「三人よれば文殊の知恵」といったが、三人になってはじめて、ある種の価値ある知恵に達する可能性がある。五~六人いたらなおいい。

robinsonrobin

昔日本語の清音は61あったという。今は44。奈良時代には母音が8つあった。「い」と「え」と「お」に2種類の母音があった。「き」と言っても2種類の発音があったのだ。「へ」と言っても2種類。「と」と言っても2種類。

さらに「はひふへほ」は「ぱぴぷぺぽ」、「じ」と「ぢ」、「ず」と「づ」、「い」と「ゐ」、「え」と「ゑ」、「お」と「を」、も違う音だったという。

日本語は長らく文字がなかった。今から1万年前から紀元前2~3世紀までの縄文時代と呼ばれる時代に文字はなかった。といって言葉がなかったわけではない。縄文の人々は言葉を当たり前に話していた。ただ文字を作る必要を感じていなかった。話し言葉でお互いの意志を伝えあっていた。

なぜ、文字がいるようになったのか。それはなぜ文字が要らなかったのかと同じ問いかけだ。

言葉は互いの意志を通じ合わせ、確かめ合わす。自分の思いを相手に伝えたい、自分の思いを分かってほしい、そして同じように相手の思いを分かりたい、分かりあいたい。そこで言葉がいる。

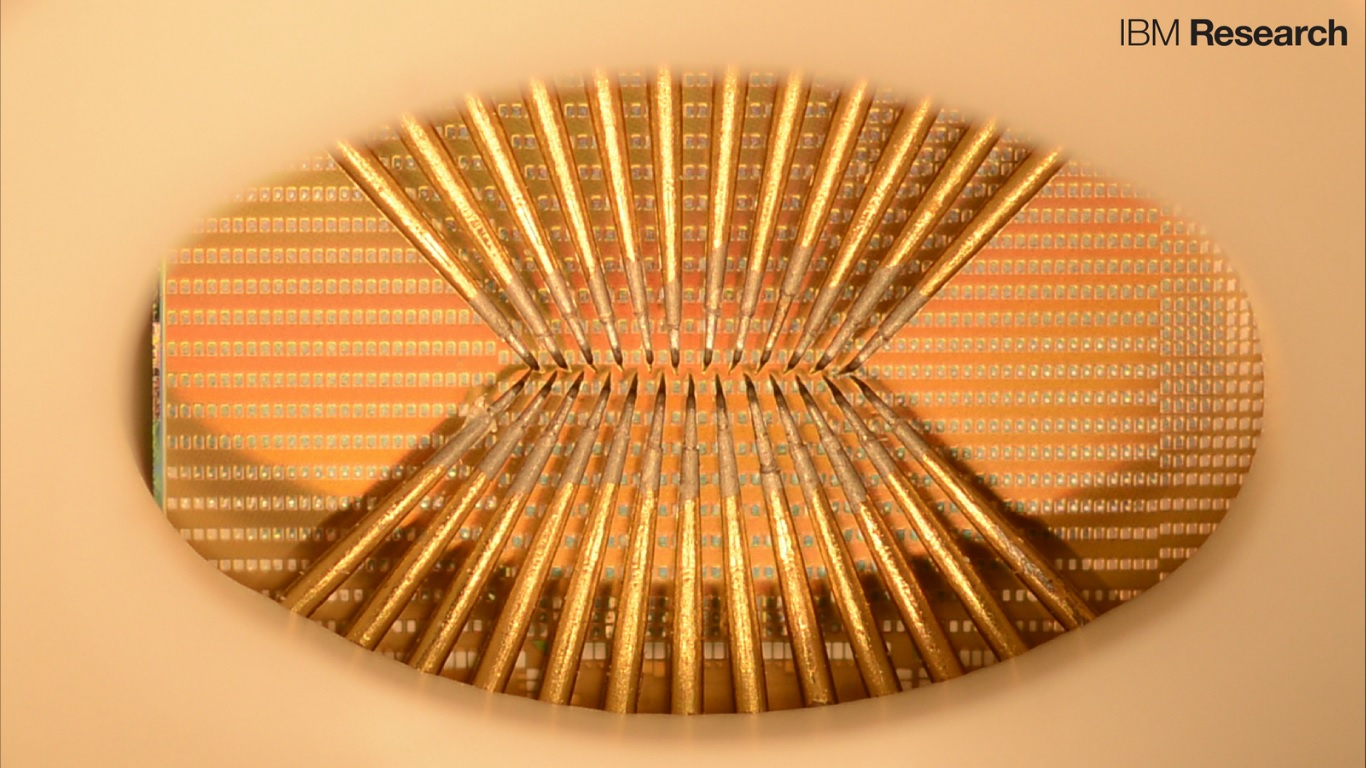

Emma Hinchliffe

Watson consumed all published literature related to ALS, and learned all the proteins already known to be linked to the disease.

The computing system then ranked the nearly 1,500 genes in the human genome and predicted which could be associated with ALS. Barrow’s research team examined Watson’s predictions, and found that eight of the 10 genes proposed by the computer were linked to the disease. Of those, five had never before been associated with ALS.

Philip Fernbach, Steven Sloman

The sense of understanding is contagious. The understanding that others have, or claim to have, makes us feel smarter. This happens only when people believe they have access to the relevant information: When our experimental story indicated that the scientists worked for the Army and were keeping the explanation secret, people no longer felt that they had any understanding of why the rocks glowed.

The key point here is not that people are irrational; it’s that this irrationality comes from a very rational place. People fail to distinguish what they know from what others know because it is often impossible to draw sharp boundaries between what knowledge resides in our heads and what resides elsewhere.

FINE編集部

合成着色料は、タール色素ともいわれ、石油製品を原料に化学合成して作られたものです。発ガン性や催奇形性の疑いなど、安全性に問題があるといわれています。

名前 赤色2号

危険度 5

危険性 発がん性、妊娠率の低下、じんましん

食品 お菓子、清涼飲料水、ゼリー、冷菓、駄菓子、シロップ、洋酒

海外 アメリカ・ヨーロッパでは使用禁止

Heather E. Douglas

When considering the importance of science in policymaking, common wisdom contends that keeping science as far as possible from social and political concerns would be the best way to ensure science’s reliability. This intuition is captured in the value-free ideal for science—that social, ethical, and political values should have no influence over the reasoning of scientists, and that scientists should proceed in their work with as little concern as possible for such values. Contrary to this intuition, I will argue in this book that the value-free ideal must be rejected precisely because of the importance of science in policymaking. In place of the value-free ideal, I articulate a new ideal for science, one that accepts a pervasive role for social and ethical values in scientific reasoning, but one that still protects the integrity of science.

為末大

言葉ではなく関係性を検索したい時一体どうすればいいのだろうか。またはまだ言語化されていない抽象的な概念はどう検索すればいいのだろうか。私は何を記憶するかというよりも、どう記憶するかの方が重要だと考えていて、その記憶の仕方が多様であればあるほど、また普通とは違う捉え方をしていればいるほど、発想がユニークに広がると考えている。検索すれば大方の情報は出てくるが一体何をどう検索するかの方が重要ではないか。

インターネットにより知りたいことはなんでも調べられるようになったが、皮肉な事に一体自分が何を知らないで、何を知ろうとしているのかは未だ私たち側の問題として残っている。まだ言葉になっていない何かについて思いを馳せるような知性を求めていたい。

武田隆

私が集合知の可能性と限界を同時に感じたのは、東日本大震災のときでした。地震が起こった当日から暫くは、ツイッターが大活躍しました。安否確認もそうでしたが、「日比谷線の恵比寿駅が動いたぞ!」とか「東横線はすごい混雑だ…」とか、それぞれがそれぞれの視点から知った状況を逐一報告することで、リアルタイムにみんなが状況全体の把握ができたんです。

多様な地点から、それぞれが見たこと、聞いたことを伝え合うことでひとつの集合知が形成されました。ところが、状況が少し落ち着いてきてからは、ツイッター上でも原子力発電所の是非についての議論などが始まりました。それは、集合知と呼ぶにはあまりにもお粗末な、お互いが理解を深めることもなく、前向きな提案が出てまとまることもなく、それぞれの感想や思い込みをぶつけ合うだけの状況でした。

A. S. Neill

It is all too true that from the age of five to fifteen, most children are getting an education that goes only to the head. There is hardly any concern with their emotional life. Yet it is the emotional disturbance in a neurotic child that makes him compulsively steal. All his knowledge of school subjects or his lack of knowledge of school subjects plays no part at all in his larceny.

児玉徳美

見聞ということばがある。見聞とは「見たり聞いたりすること」であり、また「そうして得た知識」でもある。人は見たり聞くことをすべて正確に記憶し再現できるわけではない。外部からの刺激のうち、受け手にとって意味あるものを取捨選択して記憶として身につけ、その後の行動に役立てていく。つまり、外部から送られる情報に反応して知識として蓄積していく。情報と知識は別物であり、両者の間に介在するものが思考である。外部から送られる情報がすべて受け入れられるわけではなく、思考過程で拒否されたり、特定のものが選ばれたり、補強されたりして知識となる。

**

日常生活で出くわす矛盾や不平等、「あたりまえ」とされている制度や常識などへの疑問は身辺に無数に存在する。情報はこのような問題を考える基礎材料を提供してくれることもあるが、直接回答を与えてくれるものではない。問題を発見し、問題解決の筋道をつける作業は情報の受け手や情報と無関係に人の思考・知識に委ねられている。

老子

瀬戸賢一

| Figure of speech | Example |
| --- | --- |
| Metaphor | 人生は旅だ / 彼女は氷の塊だ |
| Simile | ヤツはスッポンのようだ |
| Personification | 社会が病んでいる / 母なる大地 |
| Synesthesia | 深い味 / 大きな音 / 暖かい色 |
| Zeugma | バッターも痛いがピッチャーも痛かった |
| Metonymy | 鍋が煮える / 春雨やものがたりゆく蓑と傘 |
| Synecdoche | 熱がある / 焼き鳥 / 花見に行く |
| Hyperbole | 一日千秋の思い / 白髪三千丈 / ノミの心臓 |
| Meiosis | 好意をもっています / ちょっとうれしい |
| Litotes | 悪くない / 安い買い物ではなかった |
| Tautology | 殺人は殺人だ / 男の子は男の子だ |
| Oxymoron | 公然の秘密 / 暗黒の輝き / 無知の知 |
| Euphemism | 化粧室 / 生命保険 / 政治献金 |
| Paralepsis | 言うまでもなく / お礼の言葉もありません |
| Rhetorical questions | いったい疑問の余地はあるのだろうか |
| Implication | 袖をぬらす / ちょっとこの部屋蒸すねえ |
| Repetition | えんやとっと、えんやとっと |
| Parenthesis | 文は人なり(人は文なりというべきか) |
| Ellipsis | これはどうも / それはそれは |
| Reticence | 「……」 / 「――」 |
| Inversion | うまいねえ、このコーヒーは |
| Antithesis | 春は曙 冬はつとめて |
| Onomatopoeia | かっぱらっぱかっぱらった |
| Climax | 一度でも…、一度でも…、一度でも… |
| Paradox | アキレスは亀を追いぬくことはできない |
| Allegory | 行く河の流れは絶えずして… |
| Irony | (0点なのに)ほんといい点数ねえ |
| Allusion | 盗めども盗めどもわが暮らし楽にならざる |
| Parody | サラダ記念日 / カラダ記念日 |
| Pastiche | 理不尽の嵐で気分が如月状態だぜ(西尾維新風) |

中山清治

喫茶養生記は、栄西が在宋中に見聞しあるいは経験した茶の栽培法、飲み方、採取法、効能等を述べている。またこの他、桑の飲み方効能を記している養生書である。

栄西が再度入宋をした頃、宋では禅宗が盛んであり、当然栄西も禅の修行に励んでいる。禅宗は専一に瞑想することによって仏の悟りに入ると言われており、長時間の瞑想では睡魔に襲われ、心身の疲労をきたすということが起こる。茶を飲むことによって疲労を早く回復することが出来、更に精神をも爽快にすることが出来る。中国では茶が古い時代から一般に飲用されていたことは栄西も述べている。

宋の禅僧は特にこうした茶の効用に着目し、睡魔を防止し疲労の回復をはやめることが出来ることから、長時間の瞑想に堪えるためには茶の飲用は欠かせないものであると考えたのである。

したがって禅僧は菩提達磨の像の前に集まり、深厳な儀礼の下に一椀の茶を飲み、これを茶の儀礼としたとされている。留学した栄西もこうした茶の儀式に加わり、自ら茶を飲み、茶の効用を体験し、禅の修行とともに茶に関する儀式を学んだものと思われる。

栄西は茶に興味を抱き、留学中に様々な文献から、あるいは口伝から茶に関する養生法と知識を得ようと努力をした。また当時、茶と並んで養生法の1つとされていた桑の療法を知り、この二つを合わせて持ち帰り、目的とするところは禅の普及にあったが同時に喫茶の習慣を国内にも広めようと考えたのであった。

DK Publishing

Joseph Rouse

These discussions of the concepts of truth and value lead us to the final issue that I take to characterize cultural studies of science. Sociological constructivists frequently insist that they merely describe the ways in which scientific knowledge is socially produced, while bracketing any questions about its epistemic or political worth. In this respect, their work belongs to the tradition that posits value-freedom as a scientific ideal. By contrast, cultural studies of scientific knowledge have a stronger reflexive sense of their own cultural and political engagement, and typically do not eschew epistemic or political criticism. They find normative issues inevitably at stake in both science and cultural studies of science, but see them as arising both locally and reflexively. One cannot not be politically and epistemically engaged.

生活雑感

この30年来、心ある知識人は専門分化が著しい現代文明の状況を眺めて、誰も全貌を理解し得ない時代が来たと慨嘆した。専門が専門を生み、その細分化は止まることがない。そのため隣接する部門であっても、たちまち他国に踏み込んだように理解できなくなっていた。もはやゼネラリストは存在しないのか。だとすれば知識全体のバランスは誰が、どうやって図るのか。まさにカオスの時代が到来したと嘆いた。その頃から私も同じ問題意識で悩まされていた。しかし21世紀のはじめ、遂に人類は新しい知識環境への到達を実感するにいたった。ウエブツーワールド時代の到来だ。従来のツリー型知識の構造は、人間がつくったもので絶対のものではない。それが今日までの進化による情報集積の結果、ついにカバーしきれなくなったのだ。しかし人間は、この閉塞を打ち破るテクノロジーを開発した。ウエブ2.0である。これによって地球全体の情報が瞬時に検索できるようになった。そして個人の頭脳が、地球全体の頭脳とリンクしたのだ。その波及効果は大きい。たとえば大学は知識情報のメッカとしての存在の意味を失っている。

シティユーワ法律事務所

イスラーム教の教義(シャリーア)に従った金融手法。利息の禁止を中核的な概念としており、投資による損益分配や実物取引の形態を使用したスキームが採用されている。イスラーム金融の代表的な諸原則としては、以下のものが挙げられる。

- 利息(Riba)の禁止(例えば、金利付き貸付はこれに反する。遅延損害金も利息の禁止に抵触する。)

- 酒・豚肉の飲食の禁止(例えば、酒造会社への投資はこれに反する。)

- 賭博の禁止およびそのコロラリーとしての投機(maisir)の禁止(例えば、保険やデリバティブ取引について、シャリーアに準拠したイスラーム保険(タカフル)やマスター契約が開発されている。)

- 不明確性(Gharar)の禁止

- 実物取引との結びつきの重視(実物取引の裏づけの無い金融取引は禁止または嫌忌される。)

日本貿易振興機構 ドバイ事務所 知的財産権部

シャリーアにおいては、財産は、相続、遺贈又は遺産、個人の労働と努力等、様々な源流から取得されうるものである。このうち、労働と努力は知的財産にとって重要である。ゆえに、コーランとスンナがともに個人を励まして働かせようとしていることは明白である。

知的財産法は、権利者が金銭的利益のために自らの権利を利用することを認めている。同様に、シャリーアも上記の利用を認めているが、それには幾つかの制限があり、公益に反する利用は禁じられる。たとえば、権利者はザカート(喜捨)又はアルムス等の幾つかの金銭的義務を引き受けなければならない。明らかに、この制限はイスラム教徒のみに適用されるものである。喜捨はイスラム教徒に課される税だからである。さらに、権利者は自らの利用を合法的な方法による利用に限定しなければならない。つまり、利息の徴収、強制、窃盗、及びその他の許容されない行為を常に避けなければならない。

シャリーアの考え方によれば、権利者は、自らの財産及び資産の営利目的での使用を他人に許諾する権利をすべて有している。同様に、知的財産に関する権利の実施許諾も、同じ法理と理解に基づいて認められる。

岸田秀

あるときなどは、「先生はなぜ生きているのか、なぜすぐに死なないのか」と重ねて質問してきた学生もいた。こういう質問が出る前提として、人間が生きているのは生きるに値する価値のためであって、そのような価値がないなら死んだ方がましだという考え方があると思われる。わたしはこのような考え方こそおかしいと思うのだ。

**

生きるための価値を求めるふるまいは、きわめてはた迷惑である。そのような価値は幻想にすぎないわけだから、心の底から納得できる確かな価値などあろうはずがない。キリスト教・イスラム教であれ、ロシア共産制・アメリカ民主制であれ、ユダヤ金融家・アーリア純潔者であれ、これらはすべて人々の価値体系の対立に起因するものである。相手の迷惑を顧みず、伝道や説伏をしようとする結果今でも起きている争いごとである。資本主義が成り立っているのも、一部の「悪辣な資本家」が「愚かで弱い」民衆を金の力で支配しているのではなく、民衆も金の価値を信じているからである。

**

ある理想の価値を信じている人は、その理想を共にしない人を軽蔑する。価値というものを信じている人々の態度が改まらない限りは、いっさいの差別の問題は解決しないに違いない。

戸田山和久

私が目指したのは、認識論を壊すことだ。『認識論をいったんこわして、もういちどつくる』本。。。これまで営まれてきた伝統的認識論の賞味期限は過ぎてしまったんじゃないのか。伝統的認識論は、ある特定の知識生産のやり方に根ざした特定の課題によって生じたという意味で、どこまでも「時代に規定された」営みにほかならない。その課題がリアルな問題に感じられた時代は確かにあったろう。しかし、科学や情報技術の高度化によって、われわれが知識を獲得・処理・利用する仕方は大きく変化してしまった。これだけ知識のあり方そのものが変化してしまったのに、認識論だけそのままというわけにはいかないだろう。伝統的認識論のどこがまずいのかを示し、それを解体したのちに、新しい知識のあり方に即した新しい知識の哲学を構築すること。それが本書の目的だ。

WIPO

The Digital Solution – Possible Benefits

- Overcomes barriers that are associated with the traditional methods of moving books;

- Reduces the cost of knowledge exchange significantly;

- Overcomes political, physical and tariff barriers;

- Improves economies of scale;

- Encourages co-production;

- Allows quick customisation and convenient archiving;

- Allows rapid exchange of information and access to opportunities;

- Improves access to markets and access to knowledge.

University World News

A growing gap in knowledge production exists not only between high-income and other countries but also within the developing world – between a handful of ‘emerging’ countries, intermediary nations numbering five to 10 on each continent, and a remaining 100 countries whose productivity remains very small (60 countries) or minute (40 countries). Stagnating research means some nations have lost their relative share of global knowledge production – but the burning question for the developing world is one of critical mass and the resources required to maintain scientific quality and build a new generation of scientists.

UNESCO

First, in the field of knowledge, there are profound inequalities between rich countries and poor countries. One of the vicious circles of under-development is that it is sustained by the knowledge gap while accentuating it in return. Second, the rise of a global information society has allowed a considerable mass of information or knowledge to be disseminated via the leading media. However, the different social groups are far from having equal access and capacity to assimilate this growing flow of information or knowledge. Not only do the most disadvantaged socio-economic categories have often a limited access to information or to knowledge (digital divide), but also they do not assimilate it as well as those who are on the highest rung of the social ladder. Such a divide can also be witnessed between nations. An imbalance is thus created in the actual relationship to knowledge (knowledge divide). Given equal access to it, those who have a high level of education benefit much more from knowledge than those with no or only limited education. The widespread dissemination of knowledge therefore, far from narrowing the gap between developed and less developed countries, may help to widen it.

Phillip J. Tichenor, George A. Donohue, Clarice N. Olien

As the infusion of mass media information into a social system increases, higher socioeconomic status segments tend to acquire this information faster than lower socioeconomic-status population segments so that the gap in knowledge between the two tends to increase rather than decrease.

笹川平和財団 海洋政策研究所

人間は何千年にも渡り陸地である地球の 3 割を探索してきた。陸地およびそこに生息する動植物に関する本格的な科学調査が少なくとも 500 年前から進められてきた。人間は何千年にも渡り海洋を利用しているが、海洋に覆われた地球の 7 割の本格的な探索が進められるようになったのは(沿岸海域図の作成以外では)、僅か 120 年前頃のことである。故に、海洋に関する私たちの知識が陸地に関する知識よりも遥かに限られているのは当然である。海洋の多くについて多くのことが判明しているものの、人間による海洋利用の将来的な効果的管理のために望ましい詳細な知識を私たちは持ち合わせていない。世界の一部地域においては、他の地域で成功裏に開発された技術を適切に応用するために十分な知識さえも持ち合わせていない。基本的な理解の枠組みはあるが、補うべき格差が数多く存在する。

私たちが海洋について理解するために必要な情報は、4 つの主要なカテゴリー、すなわち(a)海洋の物理的構造、(b)海洋水の組成および流動、(c)海洋生物相および(d)人間と海洋の相互作用の状態に分類することができる。この知識における格差の特定に当たっては、今回の評価の各章にて明らかにした格差に関する調査を最大の根拠としている。概して、私たちに最も不足しているのは北極海とインド洋に関する知識である。北半球に位置する大西洋と太平洋の一部地域については、南半球におけるこれらの地域よりも研究が進んでおり、繰り返しになるが、概して最も徹底的な研究が行われているのは北大西洋およびその隣接地域であり、これらの地域においてさえも大きな格差が依然として存在する。

たかまさ

最初に - 引越を決意したら最初にやること、すなわち、新居(引越先)の決定です。不動産業者との交渉ノウハウを盛り込みました。事故物件・わけあり物件をつかまされませんように。

早めに - 新居(引越先)が決まったら早めにやることです。引越日程と引越業者の決定、および各種届出です。引越業者との交渉ノウハウや引越費用見積ノウハウを盛り込みました。引越費用に定価はありません、交渉次第で決まります。

1ヵ月前から - 引越日の1ヵ月くらい前からやることです。各種変更手続きと荷造りの開始です。

2週間前から - 引越日の2週間くらい前からやることです。公的な届出と必要なモノの購入です。

1週間前から - 引越日の1週間くらい前からやることです。電気・水道・ガスの手続きと各種住所変更手続きなどです。

前日に - 引越日の前日にやることです。梱包の完了と掃除です。

当日に - 引越日の当日にやることです。旧居でやる荷物の搬出と、新居でやる荷物の搬入が主になりますが、トラブル対応などの留意点があります。

引越後に - 引越後の2週間以内にやらなければならないことと、その後の早めにやる手続きなどです。

Confucius

Real knowledge is to know the extent of one’s ignorance.

Wayne Dyer

The highest form of ignorance is when you reject something you don’t know anything about.

Sandra Page

Clear Learning Goals mean:

- What should students KNOW: Facts; Dates; Definitions; Rules; People; Places; Vocabulary; Information.

- Students will be able to DO: Basic skills; Communication; Planning/Organization; Thinking skills; Evaluation; Working collaboratively; Skills of the discipline: mapping, graphing, collecting data, show p.o.v.

- Students will UNDERSTAND that: Essential questions; Theories; “Big” ideas; Important generalizations; Thesis-like statements.

尾上清

実業界の勝負は何できまるか、それは知識ではなく知恵である。知識は本を読んだり、聞いたりして輸入できるが、知恵は自分で作りだすものである。

その為には頭で考える。考えて行動する事によって知恵が蓄積されるのである。

Nick Bostrom

When we create the first superintelligent entity, we might make a mistake and give it goals that lead it to annihilate humankind, assuming its enormous intellectual advantage gives it the power to do so. For example, we could mistakenly elevate a subgoal to the status of a supergoal. We tell it to solve a mathematical problem, and it complies by turning all the matter in the solar system into a giant calculating device, in the process killing the person who asked the question.

Linda Gottfredson

Intelligence is a very general mental capability that, among other things, involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly, and learn from experience. It is not merely book-learning, a narrow academic skill, or test-taking smarts. Rather, it reflects a broader and deeper capability for comprehending our surroundings: “catching on,” “making sense” of things, or “figuring out” what to do.

Wait But Why

AI Caliber 1) Artificial Narrow Intelligence (ANI): Sometimes referred to as Weak AI, Artificial Narrow Intelligence is AI that specializes in one area. There’s AI that can beat the world chess champion in chess, but that’s the only thing it does. Ask it to figure out a better way to store data on a hard drive, and it’ll look at you blankly.

AI Caliber 2) Artificial General Intelligence (AGI): Sometimes referred to as Strong AI, or Human-Level AI, Artificial General Intelligence refers to a computer that is as smart as a human across the board—a machine that can perform any intellectual task that a human being can. Creating AGI is a much harder task than creating ANI, and we’re yet to do it. Professor Linda Gottfredson describes intelligence as “a very general mental capability that, among other things, involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly, and learn from experience.” AGI would be able to do all of those things as easily as you can.

AI Caliber 3) Artificial Superintelligence (ASI): Oxford philosopher and leading AI thinker Nick Bostrom defines superintelligence as “an intellect that is much smarter than the best human brains in practically every field, including scientific creativity, general wisdom and social skills.” Artificial Superintelligence ranges from a computer that’s just a little smarter than a human to one that’s trillions of times smarter—across the board. ASI is the reason the topic of AI is such a spicy meatball and why the words “immortality” and “extinction” will both appear in these posts multiple times.

Brent Staples

The father of cybernetics cautioned human beings against the desire to be waited upon by intelligent machines that are equipped to improve their minds over time. “We wish a slave to be intelligent, to be able to assist us in the carrying out of our tasks,” Wiener writes. “However, we also wish him to be subservient.” The obvious problem is that keen intelligence and groveling submission do not go hand in hand.

École polytechnique fédérale de Lausanne (EPFL)

The goal of the Blue Brain Project is to build biologically detailed digital reconstructions and simulations of the rodent, and ultimately the human brain. The supercomputer-based reconstructions and simulations built by the project offer a radically new approach for understanding the multilevel structure and function of the brain. The project’s novel research strategy exploits interdependencies in the experimental data to obtain dense maps of the brain, without measuring every detail of its multiple levels of organization (molecules, cells, micro-circuits, brain regions, the whole brain). This strategy allows the project to build digital reconstructions (computer models) of the brain at an unprecedented level of biological detail. Supercomputer-based simulation of their behavior turns understanding the brain into a tractable problem, providing a new tool to study the complex interactions within different levels of brain organization and to investigate the cross-level links leading from genes to cognition.

E. O. Wilson

We are drowning in information, while starving for wisdom. The world henceforth will be run by synthesizers, people able to put together the right information at the right time, think critically about it, and make important choices wisely.

Antony Garrett Lisi

Computers share knowledge much more easily than humans do, and they can keep that knowledge longer, becoming wiser than humans. Many forward-thinking companies already see this writing on the wall, and are luring the best computer scientists out of academia with better pay and advanced hardware. A world with superintelligent machine-run corporations won’t be that different for humans than it is now; it will just be better: with more advanced goods and services available for very little cost, and more leisure time available to those who want it.

Of course, the first superintelligent machines probably won’t be corporate; they’ll be operated by governments. And this will be much more hazardous. Governments are more flexible in their actions than corporations—they create their own laws. And, as we’ve seen, even the best can engage in brutal torture when they consider their survival to be at stake. Governments produce nothing, and their primary modes of competition for survival and propagation are social manipulation, legislation, taxation, corporal punishment, murder, subterfuge, and warfare.

Edge

To arrive at the edge of the world’s knowledge, seek out the most complex and sophisticated minds, put them in a room together, and have them ask each other the questions they are asking themselves.

Rodney A. Brooks

“Think” and “intelligence” are both what Marvin Minsky has called suitcase words. They are words into which we pack many meanings so that we can talk about complex issues in a shorthand way. When we look inside these words we find many different aspects, mechanisms, and levels of understanding. This makes answering the perennial questions of “can machines think?” or “when will machines reach human level intelligence?” fraught with danger. The suitcase words are used to cover both specific performance demonstrations by machines and more general competence that humans might have. People are getting confused and generalizing from performance to competence and grossly overestimating the real capabilities of machines today and in the next few decades.

Peter Norvig

In 1950, Alan Turing suggested we should ask not “Can Machines Think” but rather “What Can Machines Do?” Edsger Dijkstra got it right in 1984 when he said the question of Can Machines Think “is about as relevant as the question of whether Submarines Can Swim.” By that he meant that both are questions in sociolinguistics: how do we choose to use words such as “think”? In English, submarines do not swim, but in Russian, they do. This is irrelevant to the capabilities of submarines. So let’s explore what it is that machines can do, and whether we should fear their capabilities.

Edsger W. Dijkstra

The malleability is understandable when we realize that at the beginning the computing community was very uncertain as to what its topic was really about and got in this respect very little guidance from the confused and confusing world by which it was surrounded.

The Fathers of the field had been pretty confusing: John von Neumann speculated about computers and the human brain in analogies sufficiently wild to be worthy of a medieval thinker and Alan M. Turing thought about criteria to settle the question of whether Machines Can Think, a question of which we now know that it is about as relevant as the question of whether Submarines Can Swim.

A further confusion came from the circumstance that numerical mathematics was at the time about the only scientific discipline more or less ready to use the new equipment. As a result, in their capacity as number crunchers, computers were primarily viewed as tools for the numerical mathematician.

But the greatest confusion came from the circumstance that, at the time, electronic engineering was not really up to the challenge of constructing the machinery with an acceptable degree of reliability and that, consequently, the hardware became the focus of concern.

I called this focus on hardware a distortion because we know by now that electronic engineering can contribute no more than the machinery, and that the general purpose computer is no more than a handy device for implementing any thinkable mechanism without changing a single wire. That being so, the key question is what mechanisms we can think of without getting lost in the complexities of our own making. Not getting lost in the complexities of our own making and preferably reaching that goal by learning how to avoid the introduction of those complexities in the first place, that is the key challenge computing science has to meet.

Nowadays machines are so fast and stores are so huge that in a very true sense the computations we can evoke defy our imagination. Machine capacities now give us room galore for making a mess of it. Opportunities unlimited for fouling things up! Developing the austere intellectual discipline of keeping things sufficiently simple is in this environment a formidable challenge, both technically and educationally.

As computing scientists we should not be frightened by it; on the contrary, it is always nice to know what you have to do, in particular when that task is as clear and inspiring as ours. We know perfectly well what we have to do, but the burning question is, whether the world we are part of will allow us to do it. The answer is not evident at all. The odds against computing science might very well turn out to be overwhelming.

William Poundstone

My favorite Edsger Dijkstra aphorism is this one: “The question of whether machines can think is about as relevant as the question of whether submarines can swim.” Yet we keep playing the imitation game: asking how closely machine intelligence can duplicate our own intelligence, as if that is the real point. Of course, once you imagine machines with human-like feelings and free will, it’s possible to conceive of misbehaving machine intelligence—the AI as Frankenstein idea. This notion is in the midst of a revival, and I started out thinking it was overblown. Lately I have concluded it’s not.

Tom Meltzer

Knowledge-based jobs were supposed to be safe career choices, the years of study it takes to become a lawyer, say, or an architect or accountant, in theory guaranteeing a lifetime of lucrative employment. That is no longer the case. Now even doctors face the looming threat of possible obsolescence. Expert radiologists are routinely outperformed by pattern-recognition software, diagnosticians by simple computer questionnaires. Algorithms and machines would replace 80% of doctors within a generation.

坂本直子

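Sophia University has announced that the New Catholic Encyclopedia (新カトリック大事典) is now available through the Kenkyusha Online Dictionary (KOD).

The New Catholic Encyclopedia contains 15,000 entries, written by a total of 900 contributors.

The compilation project began in the 1970s and has taken more than 30 years. Over that time technology advanced, and the editorial work moved from exchanging manuscripts on dedicated manuscript paper to floppy disks, and then to receiving manuscripts by e-mail, organizing and editing them, and handing the publisher a Memory Stick. During digitization, too, the internet made it possible to exchange large volumes of data instantly.

The editorial committee takes this trend to mean that the word “knowledge” is being replaced by the word “information.” At the same time, committee chair Takayanagi said that “the vast quantity of information pouring in at tremendous speed today should not become an obstacle to the rich development of our humanity; while keeping a relationship of tension with it, we need to take in its positive aspects,” and described the encyclopedia’s ultimate role: “In that sense, the mission of the New Catholic Encyclopedia is to serve humanity as it advances into the future.”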

Denise Caruso

Know-how is more than knowledge. It puts knowledge to work in the real world. It is how scientific discoveries become routine medical treatments, and how inventions — like the iPod or the Internet — become the products and services that change how we work and play.

As the moon-and-ghetto disparity demonstrates, know-how is unevenly distributed. But why?

At the time, the chemicals were used widely as refrigerants and solvents for semiconductors. But no one ended up going without refrigerators or computers.

Whether or not the search yields results, it will at least help us to better understand why we can put a man on the moon, but we cannot manage to improve literacy rates, or shape workable policies on climate change, or reduce global poverty.

Knowing the mechanics that drive the “go” may help us to separate what is practically effective from our value judgments, and come up with a process that spurs solutions to problems as predictably as technological know-how does today.

Thriving Earth Exchange (TEX)

Thriving Earth Exchange (TEX) helps communities leverage Earth and space science to build a better future for themselves and the planet. TEX does this by bringing together Earth and space scientists and community leaders and helping them combine science and local knowledge to solve on‐the‐ground challenges related to natural hazards, natural resources, and climate change. By 2019, Thriving Earth Exchange will launch 100 partnerships, engage over 100 members, catalyze 100 shareable solutions, and improve the lives of 10 million people. Through the Thriving Earth Exchange, local leaders and Earth and space scientists will create resilient communities that enrich the Earth. Working together, we will create solutions for the planet, one community at a time.

Daniel Sarewitz

Advancing according to its own logic, much of science has lost sight of the better world it is supposed to help create. Shielded from accountability to anything outside of itself, the “free play of free intellects” begins to seem like little more than a cover for indifference and irresponsibility. The tragic irony here is that the stunted imagination of mainstream science is a consequence of the very autonomy that scientists insist is the key to their success. Only through direct engagement with the real world can science free itself to rediscover the path toward truth.

藤原正彦

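At a recent council meeting, I said that logic, rationality, and reason alone may be enough for local judgments and short-term perspectives, but not for a sound view of the big picture or a long-term perspective ... for that, emotional sensibility is needed. I did not expect this to draw objections. Logic always has to start from a hypothesis. The hypothesis is chosen according to a person’s values and views of life, the world, and humanity. What underlies all of these is cultivation and emotional sensibility.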

東京都市大学

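The Faculty of Knowledge Engineering aims to train people who, in the knowledge-based society of the 21st century, possess advanced scientific and technological knowledge and can put it to use in an integrated way.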

Karl Mehta

Everything that can be automated has been automated. The fourth industrial revolution is upon us, with the forces of AI, robotics, and 3D printing disrupting the status quo and pushing outdated processes into oblivion. The Ford factory workers’ jobs have largely been turned over to machines.

But the workforce training process hasn’t kept up with the pace of change.

The education that the workforce received was designed in the previous industrial age: front-loaded for the first 20 years, and expected to apply to their jobs for the next 40 to 50 years. Today, we are in the knowledge economy, and there is new knowledge we are required to learn and apply daily. How can we future-proof our workforces to help them prepare for the rapid pace of business transformation?

フランス料理情報サービス

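Preparing ingredients

- vider: remove the innards of fish or poultry.

- écailler: open the shells of oysters and other shellfish; scale fish.

- émonder: dip an ingredient (a tomato or fruit) in boiling water for a few seconds, then plunge it into cold water and peel it.

- dégorger: soak under running water to remove impurities, scum, or blood; remove the sediment from sparkling wine.

- décortiquer: shell crustaceans such as shrimp and lobster; peel almonds or chestnuts.

- désosser: remove the bones from meat or fish.

- dépouiller: skin an animal, such as a rabbit or a calf’s head.

- flamber: hold a plucked bird over a flame to singe off the fine feathers that remain.

- plumer: pluck the feathers of poultry.

- laver: wash.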

読売新聞

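“Let’s build a good country in 1192 (ii kuni).” A high school textbook that presents six theories side by side on the founding year of the Kamakura shogunate, long memorized with this mnemonic, will appear next spring. The six include 1185 (ii hako), the year favored in recent scholarship.

Listing this many scholarly theories together is unusual, and an official at the Ministry of Education, Culture, Sports, Science and Technology expressed surprise, saying, “I don’t recall ever seeing this before.” Scholarly views on ancient and medieval history often shift, and textbook descriptions also seem to have become “looser.”

The six theories appear in Tokyo Shoseki’s “Japanese History B” (日本史B), which passed government screening on the 26th. It lists dates from 1180, when Minamoto no Yoritomo established the Samurai-dokoro in Kamakura, to 1192, when he was appointed seii taishōgun. Of these, it introduces the theory that dates the “founding of the shogunate” to 1185, when Yoritomo gained powers including the right to appoint shugo and jitō, noting that “many scholars now support this view.”

However, it does not come down in favor of any one theory, stating that “the date of founding changes depending on how one understands the shogunate.” An editor said, “We want students to consider that historical facts are not always fixed to a particular year, and that there is a range.”

Five other Japanese History B textbooks also passed this round of screening, but none of them specifies a founding year, using wording such as “the warrior government that began with Yoritomo is called the Kamakura shogunate.”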

朝日新聞

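Many people probably memorized the year the Kamakura shogunate was founded with the mnemonic “1192 (ii kuni) tsukurō” (“let’s build a good country”). In many current junior high school history textbooks, however, the founding of the Kamakura shogunate has changed from 1192 to 1185. As a mnemonic, that would be “1185 (ii hako) tsukurō Kamakura bakufu” (“let’s build a good box, the Kamakura shogunate”).

According to Tokyo Shoseki and others, 1192 was the year Minamoto no Yoritomo became seii taishōgun. In 1185, Yoritomo obtained the right to appoint shugo, who were military and administrative officers, and jitō, whose duties included collecting taxes, and so put the institutions of the shogunate in place.

Wada Naohisa (和田直久), deputy editor in chief of the social studies editorial department, says: “The shogunate was established in substance in 1185. 1192 can be seen as the year Yoritomo gained the title of seii taishōgun and the shogunate was complete in both name and substance.”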

中野明

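Anyone can acquire knowledge. Age, gender, and nationality do not matter. As a result, the knowledge society can be expected to be more competitive than ever, with competition intensifying on a global scale. Knowledge also has the property of being freely portable. For organizations, therefore, how to retain the knowledge they need will become as important a management issue as how to find it.

In addition, organizations will increasingly concentrate only on acquiring the knowledge they truly need and draw on resources outside the organization for everything else. The growing role of outsourcing is evidence of this. I will omit the details, but the successive emergence of business theories such as restructuring, reengineering, and core competence can also be seen as a sign of the spread of the knowledge society.

Moreover, the growing importance of those who hold knowledge means that such people will be paid more. Conversely, those who lack it will have to settle for low pay. It is not hard to foresee that, in the full-fledged knowledge society to come, a wide gap will open between those who have knowledge and those who do not.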

Shane Gero

The whale families we work with, members of the Eastern Caribbean Clan, are shrinking. Their population is declining by as much as 4 percent a year, largely as a result of climate change and the increasing human presence in these waters. We are not just losing specific whales that we have come to know as individuals; we are losing a way of life, a culture — the accumulated wisdom of generations on how to survive in the deep waters of the Caribbean Sea. They may have lived here for longer than we have walked upright.

Every culture, whale or otherwise, is its own solution to the problems of the environment in which it lives. With its extirpation, we lose the traditional knowledge of what it means to be a Caribbean whale and how to exploit the deep sea riches around the islands efficiently. And that cannot be recovered, not even if the global population of sperm whales was robust enough to support remigration into the Caribbean. These would be different whales, from elsewhere, who do things differently. This region would be profoundly impoverished for the new whales, who would be more vulnerable here. The species as a whole would lose some of its repertoire on how to survive.

Species conservation should not be just about numbers. The definition of biodiversity needs to include cultural diversity.

International Baccalaureate

- In physics, experiment and observation seem to be the basis for knowledge. The physicist might want to construct a hypothesis to explain observations that do not fit current thinking and devises and performs experiments to test this hypothesis. Results are then collected and analysed and, if necessary, the hypothesis modified to accommodate them.

- In history there is no experimentation. Instead, documentary evidence provides the historian with the raw material for interpreting and understanding the recorded past of humanity. By studying these sources carefully a picture of a past event can be built up along with ideas about what factors might have caused it.

- In a literature class students set about understanding and interpreting a text. No observation of the outside world is necessary, but there is a hope that the text can shed some light upon deep questions about what it is to be human in a variety of world situations or can act as a critique of the way in which we organize our societies.

- Economics, by contrast, considers the question of how human societies allocate scarce resources. This is done by building elaborate mathematical models based upon a mixture of reasoning and empirical observation of relevant economic factors.

- In the islands of Micronesia, a steersman successfully navigates between two islands 1,600 km apart without a map or a compass.

Internet Encyclopedia of Philosophy (IEP)

Epistemology is the study of knowledge. Epistemologists concern themselves with a number of tasks, which we might sort into two categories.

First, we must determine the nature of knowledge; that is, what does it mean to say that someone knows, or fails to know, something? This is a matter of understanding what knowledge is, and how to distinguish between cases in which someone knows something and cases in which someone does not know something. While there is some general agreement about some aspects of this issue, we shall see that this question is much more difficult than one might imagine.

Second, we must determine the extent of human knowledge; that is, how much do we, or can we, know? How can we use our reason, our senses, the testimony of others, and other resources to acquire knowledge? Are there limits to what we can know? For instance, are some things unknowable? Is it possible that we do not know nearly as much as we think we do? Should we have a legitimate worry about skepticism, the view that we do not or cannot know anything at all?

Andre Spicer

The idea of the knowledge economy is appealing. The only problem is it is largely a myth. Developed western economies such as the UK and the US are not brimming with jobs that require degree-level qualifications. For every job as a skilled computer programmer, there are three jobs flipping burgers. The fastest-growing jobs are low-skilled repetitive ones in the service sector. One-third of the US labour market is made up of three types of work: office and administrative support, sales and food preparation.

Massachusetts Institute of Technology (MIT)

You may have heard people say that you do not have to cite your source when the information you include is “common knowledge.” But what is common knowledge?

Broadly speaking, common knowledge refers to information that the average, educated reader would accept as reliable without having to look it up. This includes:

- Information that most people know, such as that water freezes at 32 degrees Fahrenheit or that Barack Obama was the first American of mixed race to be elected president.

- Information shared by a cultural or national group, such as the names of famous heroes or events in the nation’s history that are remembered and celebrated.

- Knowledge shared by members of a certain field, such as the fact that the necessary condition for diffraction of radiation of wavelength λ from a crystalline solid is given by Bragg’s law.

However, what may be common knowledge in one culture, nation, academic discipline or peer group may not be common knowledge in another.

安西祐一郎

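A major characteristic that sets humans apart from other animals is that we do not simply swallow what we see and hear and act on it, but can grasp meaning through a variety of concepts and words. It also lies in our ability to turn many experiences into knowledge and, by applying that knowledge in practice, to create still more solid knowledge.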

Robert Reich

Automobile technicians are in demand to repair the software that now powers our cars; manufacturing technicians, to upgrade the numerically controlled machines and 3-D printers that have replaced assembly lines; laboratory technicians, to install and test complex equipment for measuring results; telecommunications technicians, to install, upgrade, and repair the digital systems linking us to one another.

Technology is changing so fast that knowledge about specifics can quickly become obsolete. That’s why so much of what technicians learn is on the job.

鶴田進

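What has been learned mechanically is called memory. However much this memory grows, it does not in fact turn into knowledge. This is the biggest problem.

Knowledge is memory accompanied by an understanding of meaning, and it must be distinguished from memory itself. Suppose a young child has memorized “牴牾 (teigo): things not adding up (辻褄が合わないこと).” Ask the child, for example, what 牴牾 means, and the answer comes back instantly: “things not adding up.” The parents will jump for joy. Next, ask what 辻褄 (tsujitsuma, coherence) means. “Huh? I don’t know.” This is mere memory, not knowledge.

Children who do nothing but this kind of studying manage in arithmetic through the lower grades, but begin to struggle in the upper grades.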

Zinédine Zidane

山鳥重

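Chapter 1: The Phenomenology of Memory

A. Varieties of memory

B. Memory that can be stated (declarative memory)

C. Memory expressed in behavior (memory that cannot be stated)

Chapter 2: Disorders of Everyday Memory

A. Key terms in memory disorders

B. Disorders of everyday memory

C. Frontal lobe damage and amnesia

Chapter 3: Disorders of Semantic Memory

A. Characteristics of semantic memory

B. Disorders of semantic memory for words

C. Impaired understanding of the meaning of pictures

D. Disorders of semantic memory for real objects

E. Semantic categories and cerebral damage

Chapter 4: Disorders of Procedural Memory

A. Disorders of visuomotor procedural memory

B. Disorders of perceptual procedural memory

C. Disorders of cognitive procedural memory

D. Classical conditioning

Chapter 5: The Neuroanatomical Structure of Everyday Memory

A. Lesions responsible for amnesia

B. Memory networks

Chapter 6: The Psychological Structure of Memory: Toward an Integrated Understanding

A. The flow of memory

B. Memory and daily life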

David Lumb

Paul G. Allen

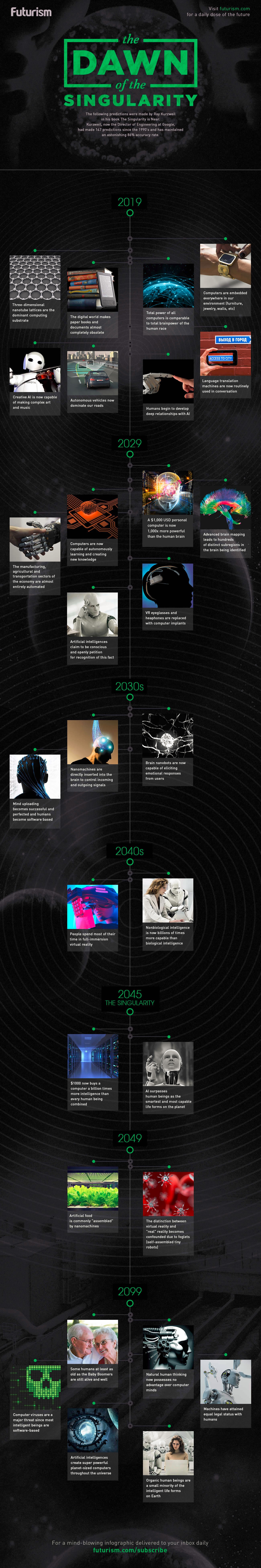

To achieve the singularity, it isn’t enough to just run today’s software faster. We would also need to build smarter and more capable software programs. Creating this kind of advanced software requires a prior scientific understanding of the foundations of human cognition, and we are just scraping the surface of this.

This prior need to understand the basic science of cognition is where the “singularity is near” arguments fail to persuade us. It is true that computer hardware technology can develop amazingly quickly once we have a solid scientific framework and adequate economic incentives. However, creating the software for a real singularity-level computer intelligence will require fundamental scientific progress beyond where we are today.

Michael C. Ramsay, Cecil R. Reynolds

Theorists have proposed, and researchers have reported, that intelligence is a set of relatively stable abilities, which change only slowly over time. Although intelligence can be seen as a potential, it does not appear to be an inherent fixed or unalterable characteristic. … Contemporary psychologists and other scientists hold that intelligence results from a complex interaction of environmental and genetic influences. Despite more than one hundred years of research, this interaction remains poorly understood and detailed. Finally, intelligence is neither purely biological nor purely social in its origins. Some authors have suggested that intelligence is whatever intelligence tests measure.

Ray Kurzweil

Wikipedia

The technological singularity (also, simply, the singularity) is the hypothesis that the invention of artificial superintelligence will abruptly trigger runaway technological growth, resulting in unfathomable changes to human civilization. According to this hypothesis, an upgradable intelligent agent (such as a computer running software-based artificial general intelligence) would enter a ‘runaway reaction’ of self-improvement cycles, with each new and more intelligent generation appearing more and more rapidly, causing an intelligence explosion and resulting in a powerful superintelligence that would, qualitatively, far surpass all human intelligence. Science fiction author Vernor Vinge said in his essay The Coming Technological Singularity that this would signal the end of the human era, as the new superintelligence would continue to upgrade itself and would advance technologically at an incomprehensible rate.

Future of Life Institute (FLI)

Artificial intelligence (AI) research has explored a variety of problems and approaches since its inception, but for the last 20 years or so has been focused on the problems surrounding the construction of intelligent agents – systems that perceive and act in some environment. In this context, “intelligence” is related to statistical and economic notions of rationality – colloquially, the ability to make good decisions, plans, or inferences. The adoption of probabilistic and decision-theoretic representations and statistical learning methods has led to a large degree of integration and cross-fertilization among AI, machine learning, statistics, control theory, neuroscience, and other fields. The establishment of shared theoretical frameworks, combined with the availability of data and processing power, has yielded remarkable successes in various component tasks such as speech recognition, image classification, autonomous vehicles, machine translation, legged locomotion, and question-answering systems.

Costica Bradatan

Have you heard the story of the architect from Shiraz who designed the world’s most beautiful mosque? No one had ever conjured up such a design. It was breathtakingly daring yet well-proportioned, divinely sophisticated, yet radiating a distinctly human warmth. Those who saw the plans were awe-struck.

Famous builders begged the architect to allow them to erect the mosque; wealthy people came from afar to buy the plans; thieves devised schemes to steal them; powerful rulers considered taking them by force. Yet the architect locked himself in his study, and after staring at the plans for three days and three nights, burned them all.

The architect couldn’t stand the thought that the realized building would have been subject to the forces of degradation and decay, eventual collapse or destruction by barbarian hordes. During those days and nights in his study he saw his creation profaned and reduced to dust, and was terribly unsettled by the sight. Better that it remain perfect. Better that it was never built.