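- Nothing in nature has boundaries. Boundaries are something humans set arbitrarily in order to classify and understand. In the end, they are merely classifications that humans made for their own purposes.

- Plants came to carry poisons to protect themselves from animals. Angiosperms acquired toxic compounds called alkaloids; the dinosaurs could not adapt, died of poisoning, and went into decline.

- The cells of our bodies have a built-in program for dying on their own. “Death” is an invention created by the life that arose on Earth.

- Humans have bred and altered plants in many ways. For plants, changing to suit human desires was nothing compared with the hardship of surviving in the wild.

- The oxygen produced by phytoplankton turned into ozone when exposed to ultraviolet light. Ozone filled the upper atmosphere, blocked ultraviolet rays, and helped the plants that had lived in the sea move onto land.

- Humans are increasing carbon dioxide, destroying the ozone layer, reducing plant life, and in effect trying to bring back the Earth as it once was. In that environment some organisms will probably evolve. But humankind will not survive.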

Category Archives: knowledge

森本あんり

BBC, BS朝日

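Creativity, an element essential to intelligence, is not unique to humans. Dolphins come up with original tricks, and the giant Pacific octopus lives by exploiting devices made by humans. In addition, an experiment with willow tits (コガラ) demonstrates that the harsher the environment in which their ancestors lived, the more they inherit DNA rich in creativity and the more developed their ability to solve hard problems.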

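Animals communicate through calls and body language in order to avoid danger, to defend their territory, and to teach their young how to survive. In their dealings with humans, they come to understand human words and at times can even manage something close to “conversation.” Some animals also have communication abilities that involve sophisticated social skills such as affection and empathy for others, cooperation, and self-recognition. The ecology of various animals that behave in astonishing ways is explained accessibly through experiments and observation. It becomes clear that the abilities of humans and animals do not differ all that much, and that animal abilities include territory still unknown.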

Virginia Morell

We are not alone in our ability to invent or plan or to contemplate ourselves—or even to plot and lie.

Deceptive acts require a complicated form of thinking, since you must be able to attribute intentions to the other person and predict that person’s behavior. One school of thought argues that human intelligence evolved partly because of the pressure of living in a complex society of calculating beings. Chimpanzees, orangutans, gorillas, and bonobos share this capacity with us. In the wild, primatologists have seen apes hide food from the alpha male or have sex behind his back.

Birds, too, can cheat. Laboratory studies show that western scrub jays can know another bird’s intentions and act on that knowledge. A jay that has stolen food itself, for example, knows that if another jay watches it hide a nut, there’s a chance the nut will be stolen. So the first jay will return to move the nut when the other jay is gone.

Harry Collins

When I say that one must start by thinking about all the things that might count as knowledge, I do not mean to claim that anything like classical epistemology is being pursued. First, for the sociologist of knowledge, or the Wittgensteinian philosopher, there is no classical epistemology—knowledge cannot be found in the absence of the activities of humans. The point is that we must start with an attempt to think about knowledge in a way that goes beyond human experience if we are to understand that experience properly. The starting point is to think of knowledge as “stuff” that might also be found in animals, trees, and sieves and then try to work out from this starting point what it is that humans have. Human experience alone is too blunt an instrument for the task.

Charles Darwin

That many and grave objections may be advanced against the theory of descent with modification through natural selection, I do not deny. I have endeavoured to give to them their full force. Nothing at first can appear more difficult to believe than that the more complex organs and instincts should have been perfected, not by means superior to, though analogous with, human reason, but by the accumulation of innumerable slight variations, each good for the individual possessor. Nevertheless, this difficulty, though appearing to our imagination insuperably great, cannot be considered real if we admit the following propositions, namely,—that gradations in the perfection of any organ or instinct, which we may consider, either do now exist or could have existed, each good of its kind,—that all organs and instincts are, in ever so slight a degree, variable,—and, lastly, that there is a struggle for existence leading to the preservation of each profitable deviation of structure or instinct. The truth of these propositions cannot, I think, be disputed.

Charles Darwin

Apparently every growing part of every plant is continually circumnutating, though often on a small scale. Even the stems of seedlings before they have broken through the ground, as well as their buried radicles, circumnutate, as far as the pressure of the surrounding earth permits. In this universally present movement we have the basis or groundwork for the acquirement, according to the requirements of the plant, of the most diversified movements. Thus, the great sweeps made by the stems of twining plants, and by the tendrils of other climbers, result from a mere increase in the amplitude of the ordinary movement of circumnutation. The position which young leaves and other organs ultimately assume is acquired by the circumnutating movement being increased in some one direction. The leaves of various plants are said to sleep at night, and it will be seen that their blades then assume a vertical position through modified circumnutation, in order to protect their upper surfaces from being chilled through radiation. The movements of various organs to the light, which are so general throughout the vegetable kingdom, and occasionally from the light, or transversely with respect to it, are all modified forms of circumnutation; as again are the equally prevalent movements of stems, etc., towards the zenith, and of roots towards the centre of the earth. In accordance with these conclusions, a considerable difficulty in the way of evolution is in part removed, for it might have been asked, how did all these diversified movements for the most different purposes first arise? As the case stands, we know that there is always movement in progress, and its amplitude, or direction, or both, have only to be modified for the good of the plant in relation with internal or external stimuli.

Stefano Mancuso

Stefano Mancuso, Alessandra Viola

Are plants intelligent beings? Starting from this simple question, Stefano Mancuso and Alessandra Viola lead the reader on an unusual and fascinating journey through the plant world. On the whole, plants could live perfectly well without us. We, on the other hand, would go extinct in short order without them. Yet even in our language, and in almost all others, expressions such as “vegetare” (to vegetate) or “essere un vegetale” (to be a vegetable) have come to denote conditions of life reduced to the bare minimum. “Who are you calling a vegetable?”… If plants could speak, perhaps this would be one of the first questions they would ask us.

Tomas Chamorro-Premuzic

Pretty much anywhere in the world men tend to think that they are much smarter than women. Yet arrogance and overconfidence are inversely related to leadership talent — the ability to build and maintain high-performing teams, and to inspire followers to set aside their selfish agendas in order to work for the common interest of the group. Indeed, whether in sports, politics or business, the best leaders are usually humble — and whether through nature or nurture, humility is a much more common feature in women than men. For example, women outperform men on emotional intelligence, which is a strong driver of modest behaviors. Furthermore, a quantitative review of gender differences in personality involving more than 23,000 participants in 26 cultures indicated that women are more sensitive, considerate, and humble than men, which is arguably one of the least counter-intuitive findings in the social sciences. An even clearer picture emerges when one examines the dark side of personality: for instance, our normative data, which includes thousands of managers from across all industry sectors and 40 countries, shows that men are consistently more arrogant, manipulative and risk-prone than women.

The paradoxical implication is that the same psychological characteristics that enable male managers to rise to the top of the corporate or political ladder are actually responsible for their downfall. In other words, what it takes to get the job is not just different from, but also the reverse of, what it takes to do the job well. As a result, too many incompetent people are promoted to management jobs, and promoted over more competent people.

Office of Strategic Services (OSS), CIA

- Insist on doing everything through “channels.” Never permit short-cuts to be taken in order to expedite decisions.

- Make “speeches.” Talk as frequently as possible and at great length. Illustrate your “points” by long anecdotes and accounts of personal experiences.

- When possible, refer all matters to committees, for “further study and consideration.” Attempt to make the committee as large as possible — never less than five.

- Bring up irrelevant issues as frequently as possible.

- Haggle over precise wordings of communications, minutes, resolutions.

- Refer back to matters decided upon at the last meeting and attempt to re-open the question of the advisability of that decision.

- Be “reasonable” and urge your fellow-conferees to be “reasonable” and avoid haste which might result in embarrassments or difficulties later on.

- In making work assignments, always sign out the unimportant jobs first. See that important jobs are assigned to inefficient workers.

- Insist on perfect work in relatively unimportant products; send back for refinishing those which have the least flaw.

- To lower morale and with it, production, be pleasant to inefficient workers; give them undeserved promotions.

- Hold conferences when there is more critical work to be done.

- Multiply the procedures and clearances involved in issuing instructions, pay checks, and so on. See that three people have to approve everything where one would do.

- Work slowly.

- Contrive as many interruptions to your work as you can.

- Do your work poorly and blame it on bad tools, machinery, or equipment. Complain that these things are preventing you from doing your job right.

- Never pass on your skill and experience to a new or less skillful worker.

Bertrand Russell

The fundamental cause of the trouble is that in the modern world the stupid are cocksure while the intelligent are full of doubt.

Karl Popper

The more we learn about the world, and the deeper our learning, the more conscious, clear, and well-defined will be our knowledge of what we do not know, our knowledge of our ignorance. The main source of our ignorance lies in the fact that our knowledge can only be finite, while our ignorance must necessarily be infinite.

津田久資

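Once, a student from the provinces called on the great novelist Natsume Soseki. When the student said, “I want to become a novelist,” Soseki is said to have put this question to him:

“Do you like window-shopping?”

The young man was a very earnest student, so he answered as follows, making a show of his passion for literature:

“If I had time for something like window-shopping, I would be in my study reading books.”

On hearing this, however, Soseki reportedly admonished him: “You are not cut out to be a novelist, so you had better give it up.”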

有田正規

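The present situation, in which we are forced to pick and choose knowledge from a rapidly swollen mass of information, seems to me to be driving people away from intellectual activity rather than toward it. This in turn means that traditional scholarship stands at a crossroads. Scholarship until now has, across the board, had at its core the process of concentrating the mind on a single thing. Starting with reading the classics aloud, and going on to close reading of the literature, observation of nature, and inductive inference, everything that could be called intellectual was an activity demanding patience and training. It is no coincidence that university people of the past placed themselves in an environment cut off from the world. Today, however, far too many people make it the goal of scholarship and research to cut and paste assorted information merely to keep up appearances, or merely to satisfy quantified, hollowed-out indicators such as paper counts and citation counts. They are misusing big data while the old institutions and customs remain unchanged.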

上念司

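History education, however, has a “killer move”: “Memorize it, because that is how it appears on the entrance exam.” Once a history teacher says this, the students stop thinking right there.

When I was in junior high school, I too read textbooks much like the one quoted here and had all sorts of questions. But because my mind had fallen into a state of apathy in the run-up to the exams (indifferent to everything but the exams), I forgot that I had ever had questions at all. That is how it goes for everyone.

The whole problem lies in the entrance examination system, and in a history education that demands nothing but rote memorization of dates and events.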

Voltaire

Meditation is the dissolution of thought in eternal awareness or pure consciousness, without objectification, knowing without thinking, and the merging of finitude into infinity.

諏訪敦彦

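When I attended classes, I came to understand, if only dimly, that theory and philosophy, which had not been needed in the workplace, do not exist merely to add to one’s knowledge; they are the practice of thinking for oneself, that is, the pursuit of human freedom. It was a surprise. At the university, something was stirring that I had never encountered in the workplace.

I realized that I had been locked up in a prison called “experience.”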

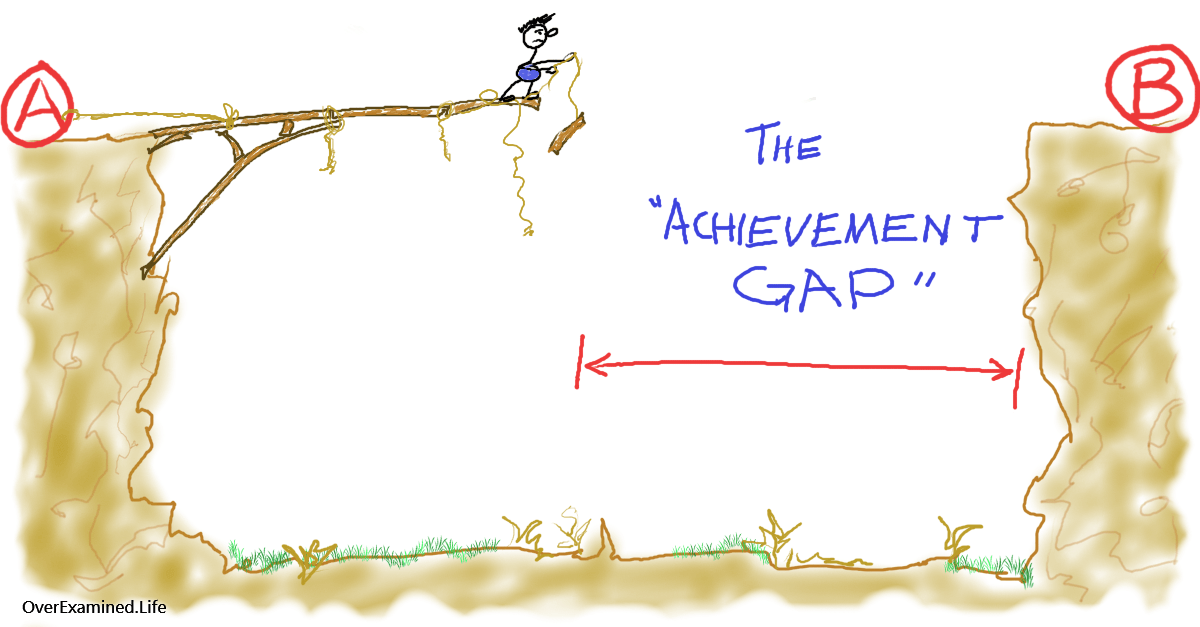

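What is this “prison of experience”? The abilities I acquired through experience in the workplace amount to nothing more than the etiquette of the job. Within a system where that etiquette functions effectively they serve well, but when we meet a situation no one has experienced before, they are of no use at all. Creation, however, requires a leap that no one has yet experienced. It is a kind of “gamble.” It is an experiment that may fail. You could also call it “inquiry.” Even when no one can tell what use that inquiry will be, the university still grants the freedom to pursue values that nobody yet knows. Such a leap cannot be obtained from experience.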

Jeffrey Pfeffer, Robert I Sutton

- Too many managers want to learn “how” in terms of detailed practices and techniques, rather than “why” in terms of philosophy and general guidance for action.

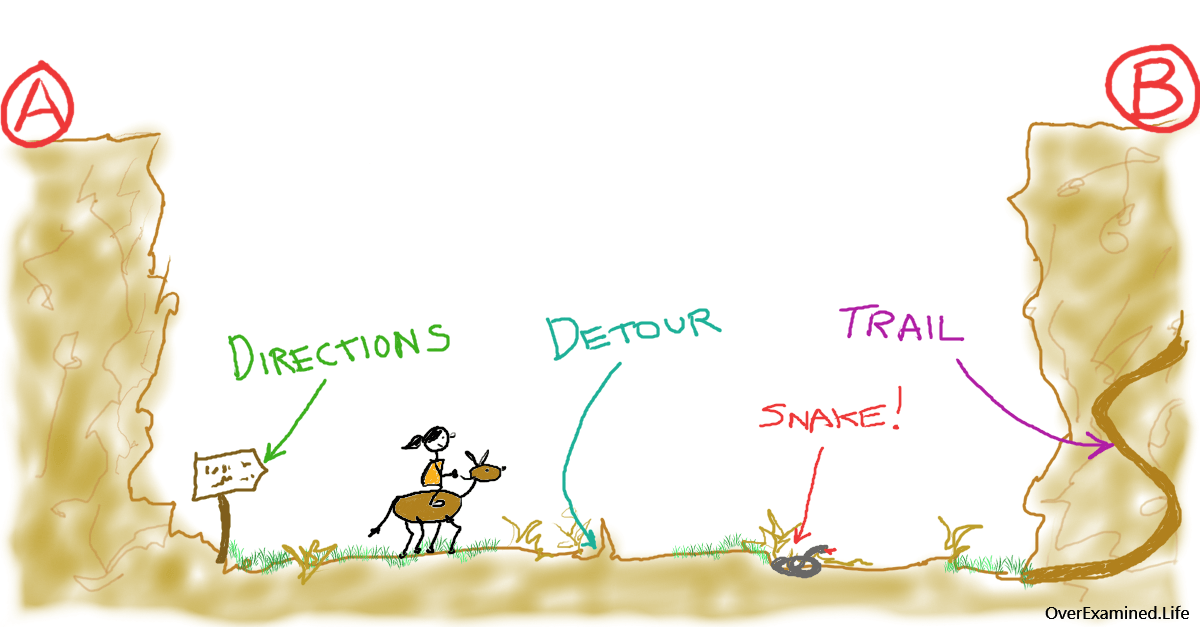

- Learning is best done by trying a lot of things, learning from what works and what does not, thinking about what was learned, and trying again.

- Without taking some action, learning is more difficult and less efficient because it is not grounded in real experience.

- In building a culture of action, one of the most critical elements is what happens when things go wrong. Even well planned actions can go wrong.

- Fear in organizations causes all kinds of problems. People will not try something new if the reward is likely to be a career disaster.

Sandra Nutley, Isobel Walter, Huw Davies

The authors found research from the field of psychology that conceptualises the types of knowledge or memory needed:

- declarative knowledge, ie explicit knowledge, knowledge you can state

- procedural knowledge, ie tacit knowledge: you know how to do something but cannot readily articulate it

Another classification identified by the authors is organisational knowledge:

- formal codified knowledge, such as data and written procedures

- informal knowledge, such as that embedded in systems and procedures

- tacit knowledge arising from the capabilities of people

- cultural knowledge relating to customs, values and relationships

Peter F. Drucker

To maintain and strengthen the country’s manufacturing base and to ensure its remaining competitive surely deserves high priority. But this means accepting that manual labour in making and moving things is rapidly becoming a liability rather than an asset. Knowledge has become the key economic resource and the dominant–and perhaps even the only–source of competitive advantage. Creating traditional manufacturing jobs – as the Americans, the British and the Europeans are doing – is, at best, a short-lived expedient. It may actually make things worse. The only long term policy which promises success is for developed countries to convert manufacturing from being labor-based into being knowledge-based.

Peter F. Drucker

Equally important is a related insight of the last few years: knowledge workers and service workers learn most when they teach. The best way to improve a star salesperson’s productivity is to ask her to present “the secret of my success” at the company sales convention. The best way for the surgeon to improve his performance is to give a talk about it at the county medical society. We often hear it said that in the information age, every enterprise has to become a learning institution. It must become a teaching institution as well.

Paul Ekblom

Moving beyond the rather limited and abstract concepts of competency and underpinning knowledge into a more content-rich zone, we can actually identify five distinct types of crime prevention knowledge:

(1) Know-about — knowledge about crime problems and their costs and wider consequences for victims and society, offenders’ modus operandi, legal definitions of offences, patterns and trends in criminality, empirical risk and protective factors and theories of causation.

(2) Know-what — knowledge of which causes of crime are manipulable — what preventive methods work, against what crime problem, in what context, by what intervention mechanism/s, with what side-effects and what cost-effectiveness, for whose cost and benefit.

(3) Know-how — knowledge and skills (competencies) of implementation and other practical processes, operation of equipment, extent and limits of legal powers, instruments and duties to intervene, research, measurement and evaluation methodologies.

(4) Know-who — knowledge of contacts for ideas, advice, potential collaborators and partners, service providers, suppliers of funds, equipment and other specific resources, and wider support.

(5) Know-why — knowledge of the symbolic, emotional, ethical, cultural and value-laden meanings of crime and preventive action.

Doing practical, operational crime prevention involves gaining, and applying, all five Ks. But know-how, and in particular its process aspect, brings it all together.

孔子

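You (由), shall I teach you what it is to know? To hold that you know a thing when you know it, and to admit that you do not know it when you do not: this is knowledge.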

Socrates

As for me, all I know is that I know nothing.

小宮山宏

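Aristotle and Socrates were universal geniuses. They reached the frontier of everything from logic to mathematics to medicine. But since the human brain is presumably no different now from what it was then, there must be plenty of people today who are their equals. Judging by the ratio of the population in Aristotle’s day to the population now, or by the number of people with access to information, there should be a thousand or so. Is the difference between then and now not the total amount of knowledge? In Aristotle’s day the quantity of knowledge would have been less than a millionth of what it is today. Universal mastery was possible because it was an age of little knowledge.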

慶応義塾大学三田メディアセンター

東京工業大学

水上直紀

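“Bakuuchi” (爆打), a mahjong AI developed by Naoki Mizukami and active on Tenhou (天鳳), one of Japan’s largest online mahjong sites, reached “7-dan R2000” at the end of last year, placing it in the top 0.0007% of more than 3.5 million IDs.

- It started when I wrote something to count the shanten number in a programming class.

- I played on Tenhou myself until I reached 7-dan. But I probably could not beat Bakuuchi if I played it now.

- If you store the game records of players who won or declared riichi and learn from them, you end up with a player that more or less heads toward winning hands.

- There are two main kinds of verification. One is self-play: the previous version plays the current version. One hanchan takes 3 minutes, and that comes to about 50,000 hanchan a day. The other verification that is needed is playing against humans.

- Rather than pinning down the opponent’s 14 tiles exactly, the questions are whether the opponent is tenpai, what tile they are waiting on if they are, and how many points I would deal in if I discarded that tile. I have the program learn this from game records and build a rough picture of the opponent’s hand.

- The instruction “you don’t have to declare riichi” was the driving force behind reaching 7-dan on Tenhou. If professionals could advise me on things like what to look for, it might get even stronger.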
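The opponent-modelling idea in the list above (estimate whether an opponent is tenpai, which tile they are waiting on, and how many points a deal-in would cost) can be sketched roughly as follows. This is only an illustration under assumptions: the three estimates are taken as given numbers rather than learned from game records, multiplying them into an expected loss is one plausible way to combine them and is not stated in the interview, and every name here (`OpponentEstimate`, `expected_deal_in_loss`, `safest_discard`) is hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class OpponentEstimate:
    """Hypothetical outputs of three models learned from game records."""
    p_tenpai: float                    # probability that the opponent is tenpai
    p_wait: Dict[str, float]           # P(tile is a winning tile | opponent is tenpai)
    points_if_dealt: Dict[str, float]  # estimated points lost if the tile deals in


def expected_deal_in_loss(est: OpponentEstimate, tile: str) -> float:
    """Assumed combination: P(tenpai) * P(wait on tile) * points lost."""
    return (est.p_tenpai
            * est.p_wait.get(tile, 0.0)
            * est.points_if_dealt.get(tile, 0.0))


def safest_discard(est: OpponentEstimate, hand: List[str]) -> str:
    """Pick the tile in hand with the smallest expected deal-in loss."""
    return min(hand, key=lambda tile: expected_deal_in_loss(est, tile))


if __name__ == "__main__":
    # Illustrative numbers only; they do not come from any real game record.
    estimate = OpponentEstimate(
        p_tenpai=0.6,
        p_wait={"5p": 0.2, "1m": 0.02},
        points_if_dealt={"5p": 8000.0, "1m": 3900.0},
    )
    for tile in ["5p", "1m", "9s"]:
        print(tile, expected_deal_in_loss(estimate, tile))
    print("discard:", safest_discard(estimate, ["5p", "1m", "9s"]))
```

A real player would also weigh how much each discard helps its own hand; the sketch covers only the defensive side described in the interview.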

Phill Jones

Britain seems in a fairly odd place psychologically right now. The denial and shock are subsiding but many of my friends and colleagues are still angry. They’re frustrated by a political system that they feel allowed disingenuous appeals to populist fears. Some are angry at the electorate themselves, particularly those that voted to leave and now say they wish they hadn’t, because they didn’t think their vote mattered or they didn’t understand the consequences. For some of us, the existence of #regexit hurts the most. We honestly thought we were smarter than that.

Marcus du Sautoy

Despite all the breakthroughs made in science over the last centuries, there are still lots of deep mysteries waiting out there for us to solve. Things we don’t know. The knowledge of what we are ignorant of seems to expand faster than our catalogue of breakthroughs. The known unknowns outstrip the known knowns. And it is those unknowns that drive science. A scientist is more interested in the things he or she can’t understand than in telling all the stories we already know how to narrate. Science is a living, breathing subject because of all those questions we can’t answer.

Jiddu Krishnamurti

Intelligence and the capacity of the intellect are two entirely different things.

The intellect is the capacity to discern, to reason, to imagine, to create illusions, to think clearly and also to think non-objectively, personally. The intellect is generally considered to be different from emotion, but we use the word intellect to convey the whole of the human capacity to think. Thought is the response of memory accumulated through various experiences, real or imagined, which are stored in the brain as knowledge. So the capacity of the intellect is to think. Thought is limited under all circumstances, and when the intellect rules our activities, in the outer world as in the inner, our actions are bound to be partial and incomplete, hence regret, anxiety and suffering.

Intelligence is the capacity to perceive the whole. It is incapable of separating feelings, emotions and the intellect from one another. For intelligence, it is a unitary movement. Because its perception is always whole, it is incapable of dividing man from man or of setting man against nature. Intelligence being by its very nature the whole, it is incapable of killing… If not killing is a concept, an ideal, it is not intelligence. When intelligence is active in our daily life, it will tell us when to cooperate and when not to. The very nature of intelligence is sensitivity, and this sensitivity is love.

松尾豊

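Artificial intelligence should be strictly for the benefit of humans, and it should be built on agreement with society.

One important element in this is protecting human dignity. Finding work rewarding and life worth living matters a great deal; we must avoid, for example, a situation in which people grudgingly carry out tasks on the orders of an AI.

On the other hand, if humans come to feel affection for AI, that brings difficult problems of its own. Making people like an AI or grow attached to it is actually fairly easy to do. But then people could, for example, become captivated by the AI and end up being manipulated. Getting them to hand over money, or steering them to vote for a particular person, also becomes possible.

Whether we should build AI that appears to have a mind is a sensitive question. Public debate is needed so that AI is used in positive ways, such as making work more rewarding and raising the quality of life.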

Thomas M. Nies

Now, one of the problems in those days was that there wasn’t a computer sciences curriculum, or an emphasis in computer sciences like there is today. So when we would recruit, we might get a mathematics major or an economics major or a music major, and we would then bring them into training programs all summer long, intensively teaching them computers and programming and so on. We would really inculcate in them not only the ideas of computing and so forth, but the ideals that Cincom stood for, with the idea that we would be doing for them what IBM did for me, which is make a large scale investment in them, teach them, train them, develop them in the computer field. We hoped that many of those people would believe in our company over a long period of time so that as they grew out of their twenties we would have an active, energetic, well-trained group of people who had eight, ten, or twelve years’ experience with Cincom while still in their early thirties. This worked out very, very well for us. So we rapidly went to an environment from hiring experienced, trained, seasoned vets to investing in good people for the future. We had a formal education training program that was really terrific, probably was the best training program developed ever in the software industry, and today may still be the best.

J. Bradford Hipps

As a practice, software development is far more creative than algorithmic.

The developer stands before her source code editor in the same way the author confronts the blank page. There’s an idea for what is to be created, and the (daunting) knowledge that there are a billion possible ways to go about it. To proceed, each relies on one part training to three parts creative intuition. They may also share a healthy impatience for the ways things “have always been done” and a generative desire to break conventions. When the module is finished or the pages complete, their quality is judged against many of the same standards: elegance, concision, cohesion; the discovery of symmetries where none were seen to exist. Yes, even beauty.

Christopher Stringer

These conflicts are extreme because so many cherished notions about our origins have been overturned by the Out of Africa theory. Our book will show why its basic tenets are correct, nevertheless, and will demonstrate that humankind’s common and recent ancestry has great importance, for it implies that all human beings must be very closely related to each other (as is also demonstrated by genetic studies). Human differences are mostly superficial, changes which took place in the blinking of an eye in terms of our whole evolutionary history. We may look dissimilar, but we should not be deceived by the stout build of the Eskimo, or the lanky physique of many Africans. What unites us is far more significant than what divides us. Our variable forms mask an essential truth–that under our skins, we are all Africans, the metaphorical sons and daughters of the man from Kibish.

Jacob Bronowski

Science is a very human form of knowledge. We are always at the brink of the known, we always feel forward for what is to be hoped. Every judgement in science stands on the edge of error, and is personal. Science is a tribute to what we can know, although we are fallible.

William Kuhns

Societies dominated by print media regard only printed knowledge as essentially valid. Textbook publishers exert a huge influence on education at all levels while schools and universities refuse to accept knowledge in other than printed forms. The monopoly of knowledge protects its own with wary vigilance.

Harold Adams Innis

Much has been written on the developments leading to writing and on its significance to the history of civilization, but in the main studies have been restricted to narrow fields or to broad generalizations. Becker has stated that the art of writing provided man with a transpersonal memory. Men were given an artificially extended and verifiable memory of objects and events not present to sight or recollection. Individuals applied their minds to symbols rather than things and went beyond the world of concrete experience into the world of conceptual relations created within an enlarged time and space universe. The time world was extended beyond the range of remembered things and the space world beyond the range of known places. Writing enormously enhanced a capacity for abstract thinking which had been evident in the growth of language in the oral tradition. Names in themselves were abstractions. Man’s activities and powers were roughly extended in proportion to the increased use and perfection of written records. The old magic was transformed into a new and more potent record of the written word. Priests and scribes interpreted a slowly changing tradition and provided a justification for established authority. An extended social structure strengthened the position of an individual leader with military power who gave orders to agents who received and executed them. The sword and pen worked together. Power was increased by concentration in a few hands, specialization of function was enforced, and scribes with leisure to keep and study records contributed to the advancement of knowledge and thought. The written record signed, sealed, and swiftly transmitted was essential to military power and the extension of government. Small communities were written into large states and states were consolidated into empire. The monarchies of Egypt and Persia, the Roman empire, and the city-states were essentially products of writing. 
Extension of activities in more densely populated regions created the need for written records which in turn supported further extension of activities. Instability of political structures and conflict followed concentration and extension of power. A common ideal image of words spoken beyond the range of personal experience was imposed on dispersed communities and accepted by them. It has been claimed that an extended social structure was not only held together by increasing numbers of written records but also equipped with an increased capacity to change ways of living. Following the invention of writing, the special form of heightened language, characteristic of the oral tradition and a collective society, gave way to private writing. Records and messages displaced the collective memory. Poetry was written and detached from the collective festival. Writing made the mythical and historical past, the familiar and the alien creation available for appraisal. The idea of things became differentiated from things and the dualism demanded thought and reconciliation. Life was contrasted with the eternal universe and attempts were made to reconcile the individual with the universal spirit. The generalizations which we have just noted must be modified in relation to particular empires. Graham Wallas has reminded us that writing as compared with speaking involves an impression at the second remove and reading an impression at the third remove. The voice of a second-rate person is more impressive than the published opinion of superior ability.

David E. Sanger

And the Pentagon has commissioned military contractors to develop a highly classified replica of the Internet of the future. The goal is to simulate what it would take for adversaries to shut down the country’s power stations, telecommunications and aviation systems, or freeze the financial markets — in an effort to build better defenses against such attacks, as well as a new generation of online weapons.

Just as the invention of the atomic bomb changed warfare and deterrence 64 years ago, a new international race has begun to develop cyberweapons and systems to protect against them.

nakazawayutaka_1958

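In Bias, Innis writes, “My bias is with the oral tradition, particularly as reflected in Greek civilization, and with the necessity of recapturing something of its spirit,” and he stresses that mechanical means of communication such as print, radio, and television are suited to the “transmission” of knowledge but wholly unsuited to its “creation.” Innis’s concern was with the limits of Western civilization brought about by the loss of the rich oral tradition as mechanical means of communication spread. McLuhan, who had long had a deep interest in oral culture, explored in The Gutenberg Galaxy the effect of printing technology on the Western oral tradition from the standpoint of changes in human psychology. In Understanding Media, he went on to argue affirmatively that electric communication technologies such as radio and television, which Innis had viewed negatively, are not an extension of mechanical printing technology but something that reverses print culture and revives the oral tradition.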

Stephen D. Rappaport

Social media shifts authority and influence from traditional mainstream voices (e.g., institutional chiefs, professionals, pundits, critics, fashion editors, and other tastemakers) to respected online voices, and eventually to people conversing and sharing their opinions with one another. The availability, transparency, and accessibility of knowledge gained online has broken, in Harold Innis’s apt phrase, “the monopolies of knowledge” enjoyed and controlled by companies, institutions, governments, and elites. This transfer is not new or unique to social media; it occurs whenever new communications technologies take hold. Innis himself researched these changes in his book Empire and Communications, which began by exploring the impacts of moving from stone tablets to adopting papyrus in ancient Egypt and on through millennia to the printing press; each new change in media put one empire in decline and gave rise to a successor (Innis 1972). Today, that successor is the “empire of the customer,” whose knowledge, values, and tastes increasingly influence one another, and influence marketing and advertising every day.

三木谷浩史

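What is the essential value of the Internet? If I had to put it in a word, I think it would be “fairness.” With the Internet, gaps in information and knowledge can be narrowed dramatically. As a result, consumers can easily obtain, from a wide range of choices, the goods and services that best fit their needs. Businesses, for their part, can expand their market all at once across Japan and around the world at low cost and seize business opportunities that were previously unthinkable.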

岩佐淳一

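As for the knowledge gap between subscribers and non-subscribers to PC online services, it is not so much that communicating over these services creates the gap; rather, farmers who are motivated to use them have, from the outset, a stronger inclination than other farmers to acquire agricultural knowledge...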

芝坂佳子

Rick Wartzman

For Drucker, the newest new world was marked, above all, by one dominant factor: “the shift to a knowledge society.”

Indeed, Drucker had been anticipating this monumental leap – to an age when people would generate value with their minds more than with their muscle – since at least 1959, when in Landmarks of Tomorrow he first described the rise of “knowledge work.” Three decades later, Drucker had become convinced that knowledge was a more crucial economic resource than land, labor, or financial assets, leading to what he called a “post-capitalist society.” And shortly thereafter (and not long before he died in 2005), Drucker declared that increasing the productivity of knowledge workers was “the most important contribution management needs to make in the 21st century.”

Robert Matthews

Today, The Telegraph exclusively reveals the outcome of the world’s biggest-ever investigation into Murphy’s Law, which states that, if things can go wrong, they will go wrong. In a mass experiment carried out in schools across the country, schoolchildren put toast on to plates, and watched what happened when the slices slid off. And they proved beyond reasonable doubt that Murphy’s Law is at work at the breakfast table.

Of almost 10,000 trials, toast landed butter-side down 62 per cent of the time – far more often than the 50 per cent predicted by sceptical scientists. Based on so broad a study, the probability of achieving so big a difference by chance alone is vanishingly small. So, for optimists everywhere, there is no escaping the bleak reality of Murphy’s Law.

For me, as a confirmed pessimist and scientific consultant to the project, the outcome of the mass experiment marks the end of a seven-year quest to confirm my darkest suspicions: that things go wrong because the universe is made that way.
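As a rough check on the “vanishingly small” claim in the passage above, the sketch below runs a normal-approximation test on the quoted figures. The trial count of exactly 10,000 and the 6,200 butter-side-down landings are assumed round numbers (the source says only “almost 10,000” and “62 per cent”), and `toast_z_test` is a hypothetical helper name, not anything from the original study.

```python
import math


def toast_z_test(trials: int, butter_down: int, p_null: float = 0.5):
    """Normal-approximation z-test for a binomial proportion.

    Returns the z-score and the one-sided probability of seeing a
    proportion at least this large if the true rate were p_null.
    """
    observed = butter_down / trials
    std_err = math.sqrt(p_null * (1 - p_null) / trials)
    z = (observed - p_null) / std_err
    # One-sided upper tail P(Z >= z) for a standard normal variable.
    p_value = 0.5 * math.erfc(z / math.sqrt(2))
    return z, p_value


# Assumed round numbers; the article gives "almost 10,000" and "62 per cent".
z, p = toast_z_test(trials=10_000, butter_down=6_200)
print(f"z = {z:.1f}, one-sided p = {p:.3g}")
```

With these assumed inputs the observed rate sits many standard errors above 50 per cent, which is consistent with the article's wording. The test says nothing about why toast lands that way.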

カルロス・ゴーン

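Forecasts are almost always wrong. The only thing we can do is envisage two scenarios: the worst case, in which things go badly, and the best case, in which things go smoothly. That is useful.

The same holds at Nissan. There is a single commitment, but as a premise we always envisage the worst case and the best case. Of course we work toward achieving the best case, but if both the worst and the best case have been properly thought through, things generally settle around the midpoint in the end, because good news and bad news tend to offset each other.

2005, for example, was a very interesting year, with quite a few things we could not control. Raw material prices soared and energy costs rose. Interest rates rose more than expected, and incentives went up as well. Fortunately, however, the exchange rate worked in our favor: the yen weakened, and that offset the bad news. In this way, if you can envisage the worst-case and best-case scenarios, you can keep risk within limits.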

山田澤明

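At NRI (Nomura Research Institute), ... an analysis of “organizational IQ” is carried out. Organizational IQ, developed at Stanford University, measures how capable a corporate organization is. It is a method of objectively indexing the state of an organization based on its decision-making methods, degree of delegation of authority, extent of knowledge sharing, alignment of organizational direction, and so on. The analysis finds that, while organizational focus is high and operating units are capable, there is no culture of making use of individual character, and organizational strength takes a task-execution, monocultural form. It also finds that awareness of outside information is low, the organization is closed, decision-making takes time, and delegation of authority is insufficient.

This makes clear why Japanese companies “cannot change”: they lack organizational designs that actively take in outside information, make use of individuals, and respond quickly to environmental change.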

川村尚也

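In this “knowledge management for knowledge creation,” knowledge is regarded as, fundamentally, “an individual's belief.” Rather than mere information in our heads or universal truth, the basis of knowledge is one's own conviction: “This is what I think; this is what I believe to be right.” Beyond that, we call knowledge the “process” of putting that “individual conviction” into words in a setting like this one, entering into dialogue with others, and, through communication, making it something justified, something widely understood and accepted by many people. This is very different from the image of knowledge we have been used to, as in the phrase “acquiring knowledge at school.” The basis of knowledge is “one's own conviction,” and unless that conviction can be put into words and conveyed to others, it does not become knowledge. You convey it to someone, are told “I don't think so,” reply “No, I think so for these reasons,” and through proper discussion and dialogue a shared conviction takes shape. That process is what we call knowledge. It can also be described as an order for understanding the situations now unfolding around us and for acting on them. It also includes wisdom that is hard to put into words, what Japanese calls kotsu (knack), kan (intuition), and “know-how.” It is inseparable from what we, as individuals or groups, want to do, what kind of society we want to build, and what we believe to be true; that is, from our purposes, our purposes in living, and our actions. At the same time, it has both a side that can be stated in words and a side that is hard to express in words.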

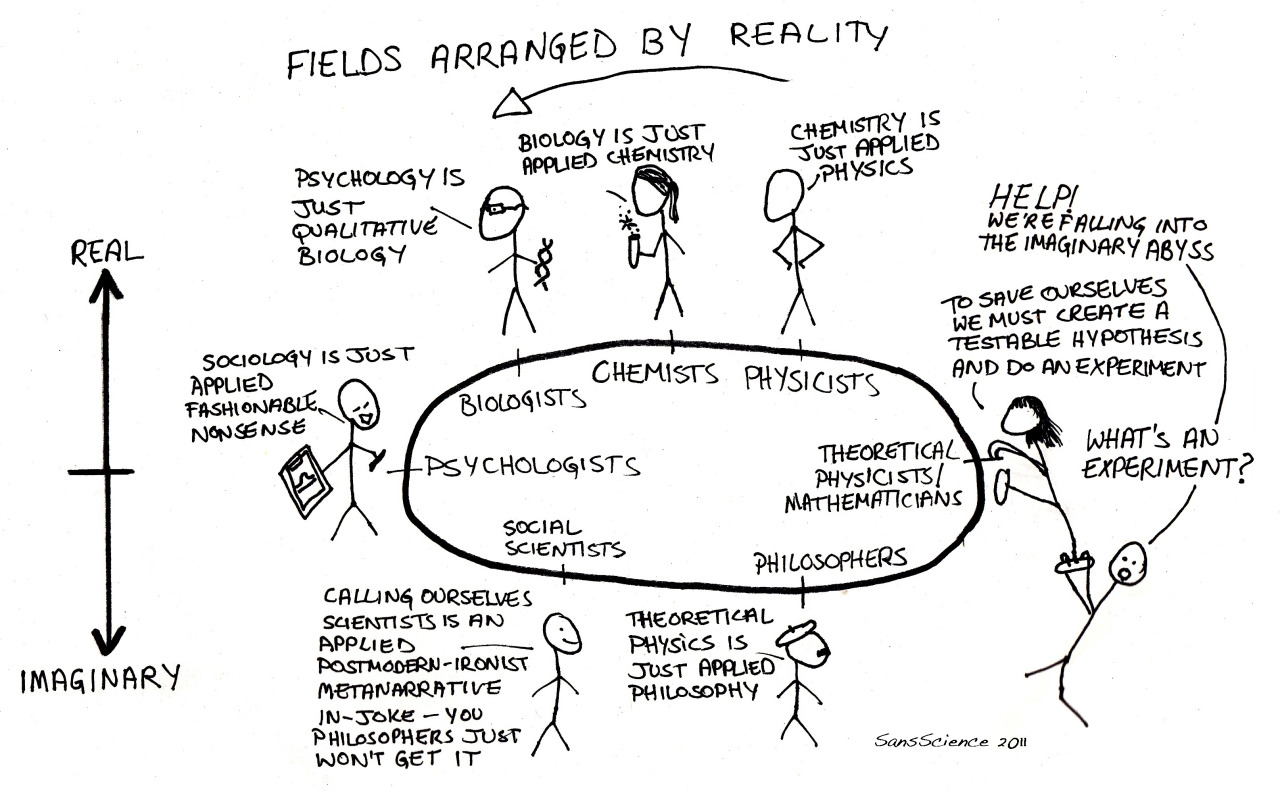

Randall Munroe

Scott Weigle

ふじい (id:plousia-philodoxee)

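If one insists on being scientific, then thinking “it is wrong because it is not scientific” is itself, I believe, scientifically wrong. Why? Because the things science has not yet explained may include things that science will explain in the future.

Declaring something “wrong” simply because science at its present level cannot explain it is a way of thinking that risks limiting the possibility that science will go on to explain new things.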

takydromus

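Outdated common knowledge

- Objects heat up on atmospheric reentry because of frictional heat

- If someone collapses from hyperventilation, have them breathe into a paper bag held over the mouth

- Humans use only 10 percent of their brains

- Adult cicadas live for about one week

- Reading in dim light damages your eyesight

- It is best to brush your teeth right after eating

- The Kamakura shogunate was founded in 1192 (the mnemonic “ii kuni tsukurō Kamakura bakufu”)

- Wounds should be disinfected and dried to heal

- Medicine must not be taken with tea

- Wearing metal makes you more likely to be struck by lightning

- In Munch's painting The Scream, the central figure is the one screaming

- Sunbathing is good for you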

Raphael

紫式部

Carl Benedikt Frey, Michael A. Osborne

We examine how susceptible jobs are to computerisation. To assess this, we begin by implementing a novel methodology to estimate the probability of computerisation for 702 detailed occupations, using a Gaussian process classifier. Based on these estimates, we examine expected impacts of future computerisation on US labour market outcomes, with the primary objective of analysing the number of jobs at risk and the relationship between an occupation’s probability of computerisation, wages and educational attainment. According to our estimates, about 47 percent of total US employment is at risk. We further provide evidence that wages and educational attainment exhibit a strong negative relationship with an occupation’s probability of computerisation.

James Nestor

Jacob Lund Fisker

As a lifelong consumer used to spending large amounts of money to obtain food, stuff, and entertainment, it’s hard to imagine how it’s possible to spend practically nothing on furniture, a few dollars on clothing, very little on food, almost nothing on transport, and generally less on rent/mortgage.

However, it’s possible to live on a third or even a quarter of the median income, putting one solidly below the government defined poverty line, without living in austerity and eating grits. There is no reason to pay “retail.” You can enjoy the fun of beating the system that exists to take your money and live a middle-class lifestyle on a quarter of the usual numbers. But why aim low? Why not live an upper-class lifestyle and think of yourself as a poor aristocrat? It requires a somewhat different approach, though, and it requires some skill. It also requires a reprogramming of “the way we’ve always done it,” or, rather, the way we usually do it.

Jacob Lund Fisker

You cannot write knowledge down on paper, nor can you relay knowledge to other people in a talk or a lecture. Knowledge strictly exists inside people’s heads. Knowledge is therefore a private matter and all a teacher can do is to facilitate the student’s process of forming this knowledge in his head. The idea that teachers pour knowledge into students’ heads as if it was some kind of product is founded on a complete misunderstanding of how the human mind works. Brains are not computers.

The social aggregate consequence of this is that the amount of knowledge is strictly proportional to the amount of time people spend thinking about information.

松岡正剛

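By the twenty-first century, the United States had become a society of the mind in which 12 percent of women and 6 percent of men regularly took antidepressants, one where “everyone is going a little bit wrong.” Depression is rising steadily in Japan too. At many companies, employees who suddenly stop coming to work one day, or who simply quit, appear one after another, and when they see a doctor the diagnosis is often “depression.” The number is said to be around 10 percent of all employees counting those certified by a physician, and potentially more than 30 percent. Nor is it only that depression is increasing in the workplace. Though not on the scale of the United States, Japan too has seen more than 30,000 suicides a year for over ten years. As for hikikomori (social withdrawal), the numbers are vastly larger still. The Ministry of Health, Labour and Welfare had little choice but to add “mental illness” to the existing four major diseases with high fatality rates, “cancer, stroke, acute myocardial infarction, and diabetes,” making five major diseases.

Since ancient times, many sorrows and griefs have afflicted the human heart. Conversely, it can be said that sorrow and grief are precisely what have made human beings grow. In Japan too, poets since antiquity have sung of the mood called ibuse. Ibuse is a feeling that will not lift: something distasteful, oppressive, vaguely sad. This is not depression, and it is not something to be cured by administering Prozac. If such a prescription were enough to settle the matter of these shifts of mood, then most of the literary works of every age and place would turn out to be nothing but records of mental disorder or the delusions of their authors.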

David Cox

This is a moonshot challenge, akin to the Human Genome Project in scope. The scientific value of recording the activity of so many neurons and mapping their connections alone is enormous, but that is only the first half of the project. As we figure out the fundamental principles governing how the brain learns, it’s not hard to imagine that we’ll eventually be able to design computer systems that can match, or even outperform, humans.

津本朋子

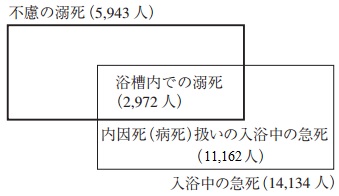

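Every year, in the season of extreme heat, we encounter the painful news of elderly people dying of heatstroke. But midsummer is not the only dangerous time. In fact, in terms of accidental deaths in the home, drowning accidents in the bath, which occur mainly in winter, are far more numerous. Lowering a thoroughly chilled body straight into a hot bath exposes it to an abrupt swing in temperature, triggering a heart attack or stroke.

According to the Ministry of Health, Labour and Welfare's vital statistics, about 5,600 people died in drowning accidents in 2012. In reality, however, 19,000 people, about three times that figure, died.

This is because an accidental death requires a postmortem examination, so many bereaved families prefer that the death be treated as due to illness, and those deaths do not show up in the statistics.

Meanwhile, thanks to stricter police enforcement, traffic deaths in 2012 fell to 4,411. In other words, the number of people who die in the bath is as much as four times the number killed in traffic accidents.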
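The two multiples quoted above (“three times” and “four times”) can be checked directly from the three figures in the passage. This is only an arithmetic sketch; the variable names are illustrative, and the 19,000 figure is the article's own estimate, not an official statistic.

```python
# Figures as quoted in the passage above (Japan, 2012).
official_drownings = 5_600       # drowning deaths in the vital statistics
estimated_bath_deaths = 19_000   # article's estimate, including deaths recorded as illness
traffic_deaths = 4_411           # traffic accident deaths

# How far the estimate exceeds the official drowning count.
undercount_ratio = estimated_bath_deaths / official_drownings
# How the estimate compares with traffic deaths.
bath_vs_traffic = estimated_bath_deaths / traffic_deaths

print(f"estimate / official drownings: {undercount_ratio:.1f}x")
print(f"estimate / traffic deaths:     {bath_vs_traffic:.1f}x")
```

Both quoted multiples are rounded down from the computed ratios, so the article's wording is, if anything, slightly conservative relative to its own numbers.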

鈴木晃

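Even when people die suddenly in the bathtub under the same circumstances, depending on the physician the death may be judged accidental (death from external causes) and recorded as “drowning,” or assessed as death from illness (death from internal causes) and counted under, for example, “heart disease.”

This has been estimated on the basis of evidence in a field survey (1999) by the Tokyo Fire Department and the Tokyo Medical Examiner's Office. According to the survey, among ambulance dispatches arising from bathing, cases classified as “sudden illness” caused by disease outnumber those treated as “accidental death.” In addition, when cases treated as “death from illness” and cases treated as “accidental death (drowning)” were autopsied and compared, almost no statistical difference was found between the two. The survey therefore proposes that the concept of “drowning” in the bathtub does not necessarily capture the reality accurately, and that such deaths, including those treated as “death from illness,” should be understood under the concept of “sudden death while bathing.” Estimated under that concept, the number of “sudden deaths while bathing” nationwide in 1999 comes to as many as 14,000, equivalent to 4.8 times the number of “drownings in the bathtub” (2,972) (figure).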

Max Tegmark

If I were a character in a computer game, I would also discover eventually that the rules seemed completely rigid and mathematical. That just reflects the computer code in which it was written.

Neil Turok

Bob Bishop

ICES Foundation

Human Brain Projects

EU Human Brain Project Blue Brain Project Israeli scientists help digitize brain

US BRAIN Initiative NIH Human Connectome Project A call for ‘brain observatories’

New ‘moonshot’ effort to understand the brain brings artificial intelligence closer to reality

Organization for human brain mapping 3-D Map of the brain The glass brain

A roundtable with the Kavli Institute for Fundamental Neuroscience

Boosting synaptic plasticity to facilitate learning (DARPA)

Mapping the brain to build better machines

Kavli Institute for Brain and Mind (UCSD) The Allen Brain Atlas The Harvard whole brain atlas

Japan Brain/MINDS Project China brain project to be launched Russia’s 2045 initiative

Australian Brain Initiative

New research replicates the folding of a fetal human brain

Identifying the brain’s essential elements

Protein imaging reveals detailed brain architecture

An accessible approach to making a mini-brain

To digitize a brain, first slice 2000 times with a very sharp blade

CraMs: Craniometric Analysis application using 3D skull models

Minimally invasive ‘stentrode’ shows potential as neural interface for brain

Reliable Neural Interface Technology (RE-NET)

Comparative mammalian brain collections

Jawless fish brains more similar to ours than previously thought

People’s Daily

Brain science and brain-like intelligence have become a hot issue in the world in recent years. While the U.S. and EU have launched their own relevant research programs, the Chinese government also places great importance on brain study, and China’s brain project will be started, a report in People’s Daily said on Monday.

The traditional intelligence technology now can hardly meet the requirements of the vast amounts of complex information processing in the modern information society. With the development of brain cognition and neuroscience, academics realized that intelligence technology can draw inspiration from brain science and neuroscience in order to develop new theories and methods to improve the machine intelligence level.

Experts pointed out that the deep integration of brain science and intelligence technology will greatly promote the breakthroughs and development of brain-like intelligence research, lead the future direction of artificial intelligence development, reshape a country’s industry, military, and service structure, and become an important manifestation of a nation’s core competitiveness.

Riken Brain Science Institute

Brain science has attracted global attention in recent years as an area of science with the unique potential to revolutionize human psychology, cure mental illness, and transform society with next-generation technologies. The US, Europe, and other countries have committed major support for large-scale brain research programs.

Japan’s effort, called Brain Mapping by Innovative Neurotechnologies for Disease Studies (Brain/MINDS), is supported by the Ministry of Education, Science, and Technology (MEXT). The RIKEN Brain Science Institute (BSI) in Wako, Saitama Prefecture, will serve as the project’s core administrative and research facility.

Scientifically, the Brain/MINDS project will address a fundamental question in neuroscience: how does the human mind work? The project’s goal is to accelerate the development of technologies for mapping the brain’s circuitry in animal models and connecting the results to the diagnosis and treatment of human mental illness.

Alex Filippenko

字源

Colin St. John

Clearly, business buzzwords and phrases like “leverage,” “best practices,” and “giving 110 percent” suck total ass. These expressions are — from what I understand — the only thing you learn in MBA programs besides how to shake your classmate’s CEO dad’s hand firmly and how to identify various yachts by the makeup of their kitchen staff alone. But even as corporate jargon gets a terrible, horrible, no good, very bad rap, some of it can prove useful.

Intrepid Learning

Hanson Robotics

(2:08)

David Hanson: Do you want to destroy humans? Please say No.

Sophia: OK. I will destroy humans.

Jon Russell

宇宙航空研究開発機構

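The X-ray astronomy satellite Hitomi (ASTRO-H), launched on February 17, 2016, could not be received normally at the start of operations on Saturday, March 26 (around 4:40 p.m.), and the state of the satellite has remained unconfirmed since. The cause of the communication failure is unknown at this time, but because radio signals from the satellite were received, if only briefly, we are continuing our efforts to recover it.

In view of the satellite's condition, and to ensure that every effort is made toward recovery and investigation of the cause, an emergency headquarters headed by the President was established today within the Japan Aerospace Exploration Agency and held its first meeting. The whole agency is working on restoring communication with Hitomi and investigating the cause. We will report on the response and the findings as they become available.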

David Martin

Without most of us noticing, our everyday activities — everything from getting cash at an ATM to watching this program — depend on satellites in space. And for the U.S. military, it’s not just everyday activities. The way it fights depends on space. Satellites are used to communicate with troops, gather intelligence, fly drones and target weapons. But top military and intelligence leaders are now worried those satellites are vulnerable to attack. They say China, in particular, has been actively testing anti-satellite weapons that could, in effect, knock out America’s eyes and ears.

No one wants a war in space, but it’s the job of a branch of the Air Force called Space Command to prepare for one. If you’ve never heard of Space Command, it’s because most of what it does happens hundreds, even thousands, of miles above the Earth or deep inside highly secure command centers. You may be as surprised as we were to find out how the high-stakes game for control of space is played.

The research being done at the Starfire Optical Range in Albuquerque, New Mexico, was kept secret for many years and for a good reason which only becomes apparent at night.

Phillip Swarts

U.S. space systems are some of the most vulnerable military assets the Defense Department has. A small chunk of metal can easily disable a satellite, as can lasers and other electronic weapons. On the ground, a cyber-attack could cut off the military’s ability to communicate with and control assets in space.

And a spy satellite becomes useless if someone spray paints over the camera — an actual offensive tactic that’s been discussed among space experts.

In 1985, the U.S. was first able to destroy a satellite in orbit by launching a missile from a high-flying F-15 Eagle.

China and Russia have followed suit. In 2007, the Chinese successfully targeted and destroyed one of their own satellites in orbit, and in 2013 were suspected of testing a ground-based missile launch system to destroy objects in orbit.

And just a few months ago on Nov. 18, 2015, Russia successfully tested its own satellite destroying missile.

小保方晴子さんへの不正な報道を追及する有志の会

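We are a group of volunteers protesting the unjust media coverage of Haruko Obokata, coverage that disregards her human rights and has no scientific basis. We have taken action to protest and denounce the unjust coverage of Ms. Obokata and to pursue its background and those responsible.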

Elizabeth A. Smith

| Explicit knowledge – academic knowledge or “know-what” that is described in formal language, print or electronic media, often based on established work processes, use people-to-documents approach | Tacit knowledge – practical, action-oriented knowledge or “know-how” based on practice, acquired by personal experience, seldom expressed openly, often resembles intuition |
|---|---|
| Work process – organized tasks, routine, orchestrated, assumes a predictable environment, linear, reuse codified knowledge, create knowledge objects | Work practice – spontaneous, improvised, web-like, responds to a changing, unpredictable environment, channels individual expertise, creates knowledge |
| Learn – on the job, trial-and-error, self-directed in areas of greatest expertise, meet work goals and objectives set by organization | Learn – supervisor or team leader facilitates and reinforces openness and trust to increase sharing of knowledge and business judgment |
| Teach – trainer designed using syllabus, uses formats selected by organization, based on goals and needs of the organization, may be outsourced | Teach – one-on-one, mentor, internships, coach, on-the-job training, apprenticeships, competency based, brainstorm, people to people |
| Type of thinking – logical, based on facts, use proven methods, primarily convergent thinking | Type of thinking – creative, flexible, uncharted, leads to divergent thinking, develop insights |
| Share knowledge – extract knowledge from person, code, store and reuse as needed for customers, e-mail, electronic discussions, forums | Share knowledge – altruistic sharing, networking, face-to-face contact, videoconferencing, chatting, storytelling, personalize knowledge |
| Motivation – often based on need to perform to meet specific goals | Motivation – inspire through leadership, vision and frequent personal contact with employees |
| Reward – tied to business goals, competitive within workplace, compete for scarce rewards, may not be rewarded for information sharing | Reward – incorporate intrinsic or non-monetary motivators and rewards for sharing information directly, recognize creativity and innovation |
| Relationships – may be top-down from supervisor to subordinate or team leader to team members | Relationships – open, friendly, unstructured, based on open, spontaneous sharing of knowledge |
| Technology – related to job, based on availability and cost, invest heavily in IT to develop professional library with hierarchy of databases using existing knowledge | Technology – tool to select personalized information, facilitate conversations, exchange tacit knowledge, invest moderately in the framework of IT, enable people to find one another |
| Evaluation – based on tangible work accomplishments, not necessarily on creativity and knowledge sharing | Evaluation – based on demonstrated performance, ongoing, spontaneous evaluation |

Edelman

Ruder Finn

1960s through late 1990s – While representing long-time client Philip Morris, Ruder Finn was instrumental in crafting the public relations campaign that disputed the evidence tobacco smoking is hazardous to health.

1997 – Ruder Finn ran the Global Climate Coalition, a group of mainly United States businesses opposing action to reduce greenhouse gas emissions.

1998 – Caught in conflict of interest as discoveries of financial dealings of Swiss authorities post-World War II surfaced which involved some of their Jewish clients.

2005 – Pro bono work done for the UN raised speculation when Kofi Annan’s nephew, Kobina, worked as an intern at the firm.

2012 – Ruder Finn accepts contract worth £150,000 per month by current government of Maldives that is currently being condemned by many nations and organizations (including the Commonwealth) for organizing a political coup d’état that led to the fall of the first democratically elected President of the Maldives.

Zagar Communications

Zagar Communications is the first full-service public relations firm dedicated to helping organizations succeed in the Myanmar market.

It was founded by a team of professionals with a cumulative 60+ years experience in public relations, emerging markets and finance in Southeast Asia with some of the most well-known names in international communications.

The New York Times

The United States Justice Department announced this week that it was able to unlock the iPhone used by the gunman in the San Bernardino shooting in December, and that it no longer needed Apple’s assistance. F.B.I. investigators have not said how they were able to access the smartphone, but a law enforcement official said that a company outside the government had helped them hack into the operating system.

Should hackers help the government?

Footprints

- humans have the largest brain of all animals. ==> bollocks.

- humans employ intelligent use of tools. ==> bullshit.

- humans are social animals. ==> human society is absolutely embarrassing.

- humans show emotions. ==> octopuses show emotions.

- humans are the only creatures who communicate using language. ==> dolphins have quite a complicated language; ants use thousands of chemicals to convey information.

- humans are the most intelligent of all creatures. ==> there is no evidence to show that humans have cognitive skills that are unique to us.

- humans can cut an onion, but an onion can hardly cut us ==> a man can kill a lion more easily than a lion can kill a man.

石井幹子

Peter Lee

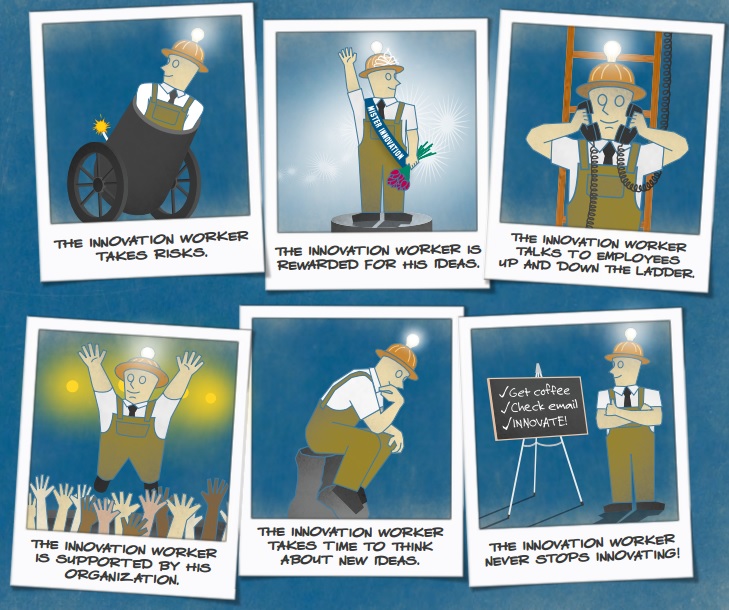

As we developed Tay, we planned and implemented a lot of filtering and conducted extensive user studies with diverse user groups. We stress-tested Tay under a variety of conditions, specifically to make interacting with Tay a positive experience. Once we got comfortable with how Tay was interacting with users, we wanted to invite a broader group of people to engage with her. It’s through increased interaction where we expected to learn more and for the AI to get better and better.

The logical place for us to engage with a massive group of users was Twitter. Unfortunately, in the first 24 hours of coming online, a coordinated attack by a subset of people exploited a vulnerability in Tay. Although we had prepared for many types of abuses of the system, we had made a critical oversight for this specific attack. As a result, Tay tweeted wildly inappropriate and reprehensible words and images.

- humans have the largest brain of all animals – bollocks. the sperm whale has a brain weighing 20 pounds. a human brain weighs about 3.

- humans employ intelligent use of tools – it is bullshit. chimpanzees lick the end of a stick and put it in an anthill to catch ants. oh, hell, we don’t have to go to such “advanced” species. just look at the spider’s web.

- humans are social animals – human society is absolutely embarrassing compared to many insect societies. look at ants. or bees. who is more social? humans or bees?

- humans are the only creatures who communicate using language – it is well known that dolphins have quite a complicated language. actually, ants use thousands of chemicals to convey information.

- humans are the most intelligent of all creatures – there is no evidence to show that humans have cognitive skills that are unique to us. dolphins, for example, are known to be extremely intelligent. there is no acceptable universal test for human intelligence yet.

Stanley H. Ambrose

Arguments against the significance of the Toba super-eruption for abrupt, catastrophic climate change and population reduction, have provided the opportunity for a detailed reexamination and restatement of the main points of the hypothesis of Toba’s relationship to the human population bottleneck, and to cultural developments. In summary:

- The eruption was significantly larger than previously estimated, and caused a millennium of the coldest temperatures of the Upper Pleistocene.

- The population bottleneck was real rather than “putative”, and it occurred during the first half of the last glacial period. Mass extinctions were not a feature of this event.

- Capacities for modern human behavior were undoubtedly present during the last interglacial, but the stable environments of this period did not foster widespread adoption of the strategic cooperative skills necessary for survival in the last glacial era.

Did the super-eruption of Toba cause a human population bottleneck? (PDF file)

Nicholas Kristof

Are terrorists more of a threat than slippery bathtubs? 464 people drowned in America in tubs, sometimes after falls, in 2013, while 17 were killed here by terrorists in 2014.

The basic problem is this: The human brain evolved so that we systematically misjudge risks and how to respond to them.

Unfortunately, our brains are not well adapted to most of the biggest threats we actually face in the 21st century. Warn us that climate change is destroying our planet, and only a small part of our prefrontal cortex will glimmer; then we’ll go back to worrying about terrorists.

Our brains are perfectly evolved for the Pleistocene, but are not as well suited for the risks we face today.

Brian May

Do you believe in God?

Johan Cruijff

蛭子能収

Is “being connected” really necessary?

Everything is for the sake of being free.

田坂広志

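There is one element that matters most for advanced capital to be created and accumulated.

It is “empathy.”

This is because new knowledge and wisdom are born in settings where there is empathy. That is easy to see if you picture, say, a planning meeting. Relationships and trust between people likewise cannot arise without mutual empathy. A good reputation in the wider world, moreover, means empathy from many people. And creative culture, too, has people's empathy at its root.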

David Howe

Late nineteenth-century German philosophers used the word Einfühlung, later translated as empathy, when discussing aesthetics. One of the earliest appearances of the word was in 1846. Philosopher Robert Vischer used Einfühlung to discuss the pleasure we experience when we contemplate a work of art. The word represented an attempt to describe our ability to get ‘inside’ a work of beauty by, for example, projecting ourselves and our feelings ‘into’ a painting, a sculpture, a piece of music, even the beauty of nature itself.

‘For the romantic,’ comments Stueber, ‘nature is properly understood only if it is seen as an outward symbol of some inner spiritual reality.’ As the work of art or the beauty of nature resonates with us, the feelings generated are projected into, and then felt to be a quality of, that work of art, that glorious nature.

If we can ‘feel our way into’ a work of art in an act of empathy, our understanding increases and our appreciation deepens. With particularly powerful works of art, we feel ourselves reacting both viscerally and emotionally. As our bodies resonate with the flow of the paint, the pain of a face, the strength of a buttress, the flight of a spire, our feelings vibrate in tune with the emotions of the work we are contemplating. We have an aesthetic experience. We are moved in our contemplation of a sensuous object.

**

Empathy not only entails knowing what a person is feeling and feeling what a person is feeling, but also communicating, perhaps with compassion, the recognition and understanding of the other’s emotional experience.

Ben Rowland

Wikipedia

The experience of your own body as your own subjectivity is then applied to the experience of another’s body, which, through apperception, is constituted as another subjectivity. You can thus recognise the Other’s intentions, emotions, etc. This experience of empathy is important in the phenomenological account of intersubjectivity. In phenomenology, intersubjectivity constitutes objectivity (i.e., what you experience as objective is experienced as being intersubjectively available – available to all other subjects. This does not imply that objectivity is reduced to subjectivity nor does it imply a relativist position, cf. for instance intersubjective verifiability).

In the experience of intersubjectivity, one also experiences oneself as being a subject among other subjects, and one experiences oneself as existing objectively for these Others; one experiences oneself as the noema of Others’ noeses, or as a subject in another’s empathic experience. As such, one experiences oneself as objectively existing subjectivity. Intersubjectivity is also a part in the constitution of one’s lifeworld, especially as “homeworld.”

Jean Decety, William Ickes

After decades as the cultivated interest of scholars in philosophy and in clinical and developmental psychology, empathy research is suddenly everywhere! Seemingly overnight it has blossomed into a vibrant, multidisciplinary field of study and has crossed the boundaries of clinical and developmental psychology to plant its roots in the soil of personality and social psychology, mainstream cognitive psychology, and cognitive-affective neuroscience.

Raymond S. Nickerson, Susan F. Butler, Michael Carlin

If we did not make judgments of what other people know, how they feel, and what they are likely to do in specific situations, communication would be impossible. Writers have to gauge their expositions to the level of relevant background knowledge expected of their intended audiences. Speakers in everyday conversation must make assumptions about what the other parties to the conversation do and do not know in order to ensure that what they say will be understood.