As the name implies, content democratization allows individuals and small businesses to create and share digital content in more ways than would have been possible in the past. It eliminates previous limitations of the traditional media space, such as price and technology barriers that restricted content creation and sharing to fewer people.

This new media may include blogs, ebooks, podcasts, songs, videos and other digital content. It’s often supported by online platforms such as YouTube, TikTok, Facebook, Instagram, Snapchat or LinkedIn, which allow users to distribute content at little or no cost. As a result, the creative industry is changing rapidly and the boundaries between old media and new media are evolving.

An automated pair programmer

An automated pair programmer: fact or fiction?

Oege de Moor, Alex Graveley, Albert Ziegler – GitHub OCTO. August 31st, 2020

Executive Summary

We evaluate the use of OpenAI’s language models trained on source code, for the specific task of Python code synthesis from natural language descriptions.

Our findings are as follows:

- Out of 233 hand-crafted programming exercises supplied by 30 GitHub engineers, 93% are successfully solved. The exercises include StackOverflow-type problems involving the use of an unfamiliar package, as well as programming challenges typically used in coding interviews at high-tech companies, and some elementary examples. The success in solving self-contained programming problems demonstrates that an “automated StackOverflow” is around the corner.

- On 58,391 functions taken from open source repositories, the model achieves a 52.3% success rate in creating, from the original documentation of the function, an alternative implementation that passes the test. Furthermore, we project that with more computational power the success rate increases to 60%. There are obvious ways in which the success rate can be further improved, even with today’s OpenAI models, by providing the model with more context about the repository that contains the function. These results prove that an IDE plugin that helps developers write non-trivial code is not far away.

- While the OpenAI models are already amazing today, they are further improving at a ferocious pace: just a few weeks ago the success percentage on rewriting arbitrary functions was 43.3%, instead of 52.3%. Where that earlier model needed 150 attempts to find a correct solution, the newer model needs 14. We therefore anticipate applications way beyond mere program synthesis, where developers create new code in an interactive conversation with the model.

We conclude that OpenAI’s technology is poised to change developer tools in fundamental ways. In particular an ‘automated pair programmer’ can be built that puts the collective knowledge of the entire GitHub community at the fingertips of every individual.
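The summary above counts a function as solved when some generated candidate passes its test, and compares models by how many attempts that takes. A minimal sketch of such a sample-and-test loop follows. It is only an illustration: `attempts_until_pass`, `toy_generate` and `toy_tests` are hypothetical names, and the "model" is a random stand-in, not the OpenAI models or the evaluation harness the authors used.

```python
import random
from typing import Callable, Optional

Candidate = Callable[[int], int]


def attempts_until_pass(
    generate: Callable[[random.Random], Candidate],
    passes_tests: Callable[[Candidate], bool],
    max_attempts: int,
    seed: int = 0,
) -> Optional[int]:
    """Return the 1-based attempt at which a candidate first passes, else None."""
    rng = random.Random(seed)
    for attempt in range(1, max_attempts + 1):
        candidate = generate(rng)
        if passes_tests(candidate):
            return attempt
    return None


def toy_generate(rng: random.Random) -> Candidate:
    """Hypothetical stand-in for a code model: proposes one of three
    implementations of 'double a number'; only the last one is correct."""
    return rng.choice([
        lambda x: x + 2,
        lambda x: x * x,
        lambda x: x * 2,
    ])


def toy_tests(fn: Candidate) -> bool:
    """Stand-in for a function's unit test."""
    return all(fn(x) == 2 * x for x in (0, 1, 2, 5))


# Attempts needed under a budget of 150 tries (the value depends on the seed).
print(attempts_until_pass(toy_generate, toy_tests, max_attempts=150))
```

Under this framing, a better model is one whose candidates pass earlier, which is what the drop from 150 attempts to 14 describes.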

Introduction

An automated pair programmer, which puts the collective knowledge of the entire GitHub community at the fingertips of every individual, would transform the software industry. Many aspiring developers who do not have access to adequate training and advice today would instantaneously become productive…

Serge Morand

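More biodiversity means more pathogens, but less biodiversity means more epidemics

The number of emerging diseases is proportional to the number of mammal and bird species threatened with extinction. The number of animal-borne infections, on the other hand, is inversely related to forest area: it rises as forest area shrinks. In other words, epidemics of zoonoses and vector-borne diseases are tied to the loss of biodiversity, and can be gauged from the number of wild species threatened with extinction and from forest density. In short, greater biodiversity brings more pathogens, but declining biodiversity brings more epidemics of infectious disease.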

The Internet Is About to Get Much Worse (Julia Angwin)

We are in a time of eroding trust, as people realize that their contributions to a public space may be taken, monetized and potentially used to compete with them. When that erosion is complete, I worry that our digital public spaces might become even more polluted with untrustworthy content.

Already, artists are deleting their work from X, formerly known as Twitter, after the company said it would be using data from its platform to train its A.I. Hollywood writers and actors are on strike partly because they want to ensure their work is not fed into A.I. systems that companies could try to replace them with. News outlets including The New York Times and CNN have added files to their website to help prevent A.I. chatbots from scraping their content.

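The strange trial with the state as defendant over its refusal to issue a passport (Karyn Nishimura)

The plaintiff is freelance journalist Jumpei Yasuda; the defendant is the state. Yasuda is suing the government, which refuses to issue him a passport.

Yasuda was held for three years and four months, from June 2015 to October 2018, by a group believed to be Syrian anti-government forces (the photo shows him at a press conference after his return in 2018). Because his passport was taken from him during his captivity, he applied for a replacement in January 2019, but the Ministry of Foreign Affairs refused to issue one.

Regrettably, in Japan going to a war zone draws criticism even when the purpose is reporting, and reporters who go have come to be seen as “irresponsible”.

The Japanese government, too, tells reporters “do not go” to countries at war. The mass media go along with the government, rarely pointing out that this situation is bad from the standpoint of press freedom.

Refusing a passport to a person who has committed no crime is something no one can accept without a proper explanation. For more than four years, the state has failed to meet that duty of explanation.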

辺民小考(ウジョイリ加藤)

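I think about all sorts of things in the world, to varying degrees, and when I have wanted to convey them to (talk about them with) someone, I have talked to family, friends and acquaintances (I never put them in writing). Mostly I talk about things I have experienced in daily life, the contents of books I have read, and matters that draw public interest, chiefly through the mass media. Even on those topics, mere impressions cause no particular problem, but trouble arises when I try to share my own analysis of the causes of a matter, or of the relationships among several phenomena and what they mean. The trouble comes when the listener has no interest in what I am talking about. A light remark is one thing, but being made to listen at length to an analytical, complicated account of something one does not care about is nothing but painful. All the more so when the subject strays far from daily life or the topics of the mass media.

If I wrote my thoughts down and sent them by email, the other person would probably suffer less than when made to listen, but I expect they would still feel pressure to respond somehow and find it a nuisance. Printing the text and handing over the paper (not a very usual method among family, friends and acquaintances) is much the same. The problem lies not in the method of conveying but in trying to convey something to a person who has no interest in it.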

Stat4decision

Stat4decision is a training and consulting company that is committed to supporting you in your path to modern data science and AI.

We provide high level consulting to help you incorporate data science and AI within your processes for creating value with data.

Our corporate training service empowers your teams with advanced data science skills using the open-source scientific computing ecosystem.

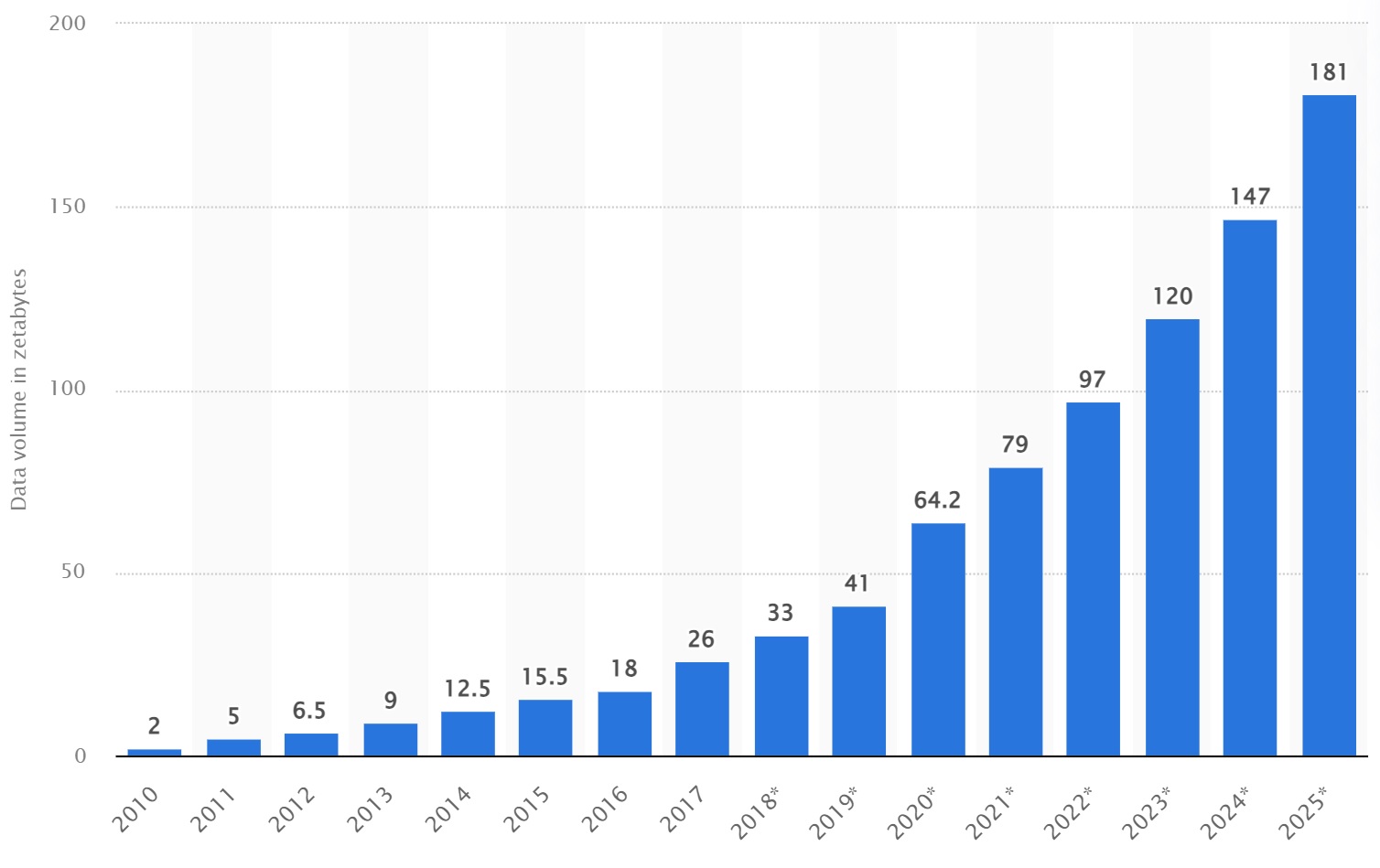

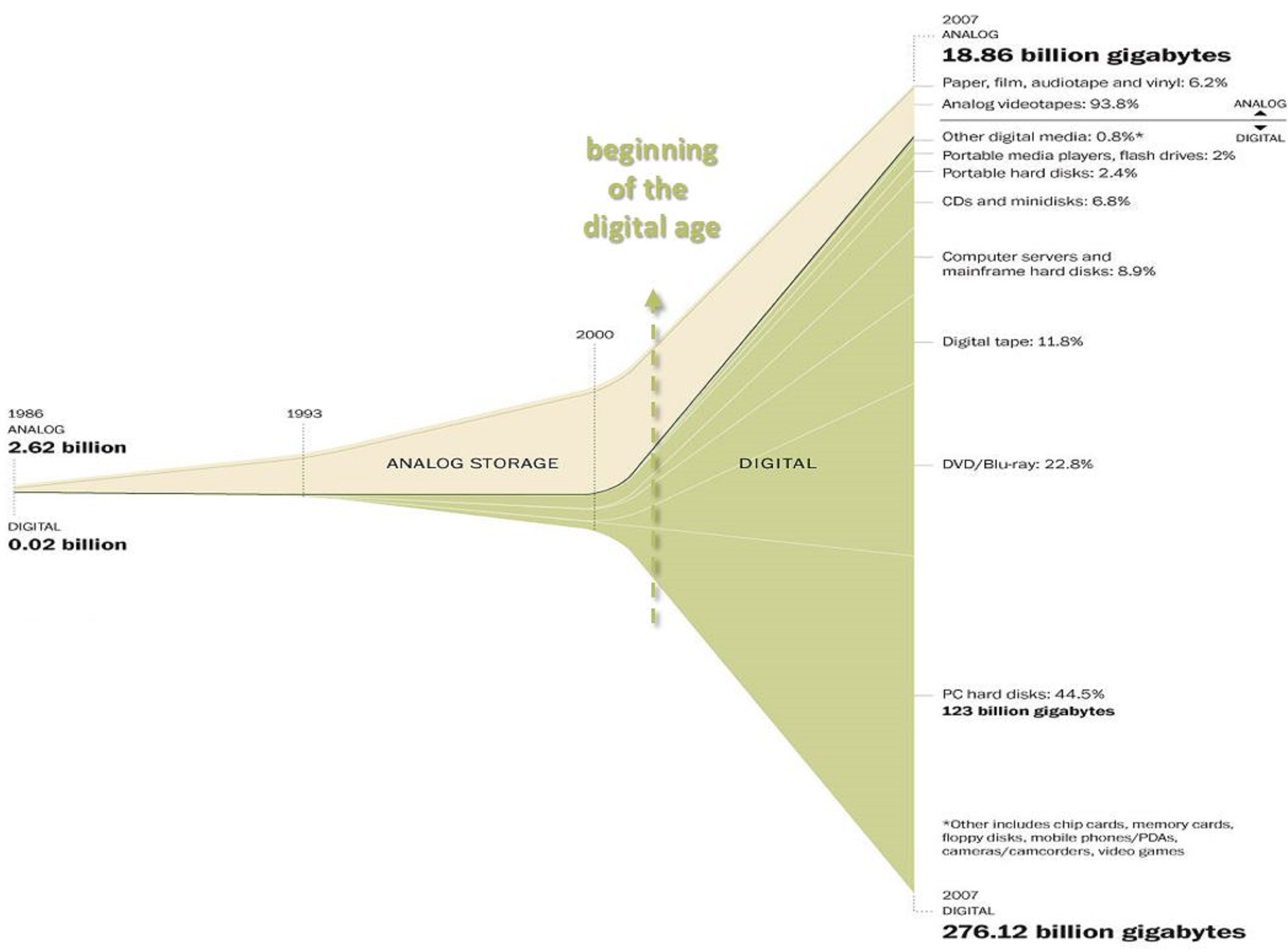

Volume of data created, captured, copied, and consumed worldwide

Department of Agriculture, Food and the Marine of Ireland (DAFM)

The Department for Agriculture has said a report outlining a 200,000 reduction in dairy cows was a “modelling document”.

It was reported yesterday the cows would have to be “culled” at a cost of €600m to taxpayers over the next three years to meet climate emissions targets.

The Farming Independent said it got the figures in its report from an internal document through a freedom of information request.

A spokesperson for the Department of Agriculture, Food and the Marine said: “The Paper referred to was part of a deliberative process – it is one of a number of modelling documents considered by the Department of Agriculture, Food and the Marine and is not a final policy decision.

“As part of the normal work of Government Departments, various options for policy implementation are regularly considered.”

Ireland’s agriculture sector was directly responsible for 38% of national greenhouse gas (GHG) emissions in 2021, according to the Environmental Protection Agency.

Shoshana Zuboff

The real psychological truth is this: If you’ve got nothing to hide, you are nothing.

Work is meaningful and fun when it’s an expression of your true core.

Every century or so, fundamental changes in the nature of consumption create new demand patterns that existing enterprises can’t meet.

Earlier generations of machines decreased the complexity of tasks. In contrast, information technologies can increase the intellectual content of work at all levels. Work comes to depend on an ability to understand, respond to, manage, and create value from information.

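Filter Bubble (Fujio Toriumi, Tatsuhiko Yamamoto)

Through profiling and targeting based on big data, the sea of information is narrowed down for us to information that is deemed “useful” to us, and we can now browse information customized “for me”.

But this is not all good. It has been pointed out that when only like-minded people gather to discuss, their views tend to become more extreme (group polarization) [10]. The “echo chamber” and the “filter bubble” are said to be phenomena arising from the interaction between this human tendency and the characteristics of the Internet.

Algorithms analyze a user's preferences and give priority to displaying information based on them. Because information useful to the user is always shown first, users end up seeing only the information they are deemed to want to see, as if shut inside a bubble of their own color (the “filter bubble”). Inside this bubble, ideas and opinions similar to one's own accumulate while opposing ones are excluded (filtered out), so the bubble's very existence is hard to notice.

The same happens on social media. Having followed only users with similar interests, a person who voices a particular opinion hears only similar opinions echo back (the “echo chamber”). By hearing the same kind of opinion again and again through this echo, people come to believe ever more strongly that it is correct and beyond doubt (think of “conspiracy theories”).

It has been pointed out that filter bubbles, echo chambers and the like accelerate group polarization [11]. People whose tendencies have become extreme cannot accept others who think differently and refuse to talk with them. These two phenomena may invite social division and endanger democracy.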

Filter Bubble (Techopedia)

A filter bubble is the intellectual isolation that can occur when websites make use of algorithms to selectively assume the information a user would want to see, and then give information to the user according to this assumption. Websites make these assumptions based on the information related to the user, such as former click behavior, browsing history, search history and location. For that reason, the websites are more likely to present only information that will abide by the user’s past activity. A filter bubble, therefore, can cause users to get significantly less contact with contradicting viewpoints, causing the user to become intellectually isolated.

Personalized search results from Google and personalized news stream from Facebook are two perfect examples of this phenomenon.
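The mechanism described above, ranking by a user's past behaviour, can be sketched in a few lines. This is a toy illustration under stated assumptions, not how Google or Facebook actually rank content: the function names, the `topic` field and the sample data are all hypothetical.

```python
from collections import Counter


def rank_for_user(items, click_history):
    """Order items so that topics the user clicked most often come first."""
    topic_clicks = Counter(click_history)
    # sorted() is stable, so items with equal scores keep their original order.
    return sorted(items, key=lambda item: topic_clicks[item["topic"]], reverse=True)


def visible_topics(items, click_history, page_size):
    """Topics that survive on the first page after personalised ranking."""
    first_page = rank_for_user(items, click_history)[:page_size]
    return {item["topic"] for item in first_page}


# Hypothetical catalogue and click history.
items = [
    {"title": "A", "topic": "viewpoint-1"},
    {"title": "B", "topic": "viewpoint-2"},
    {"title": "C", "topic": "sports"},
    {"title": "D", "topic": "viewpoint-1"},
]
history = ["viewpoint-1", "viewpoint-1", "sports"]

print([item["title"] for item in rank_for_user(items, history)])
print(visible_topics(items, history, page_size=2))
```

With a two-item first page, only the topic the user already clicked on remains visible. The contradicting viewpoint is not deleted; it is simply ranked out of sight, which is the isolation effect the definition describes.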

Anti-EU propaganda (RT)

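Developmental disorders

Developmental disorders, said to account for 2 to 6% of the whole population, ...

An estimated 481,000 people have been diagnosed with a developmental disorder by a doctor, and ...

Maria Ressa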

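Nikkei digital edition (日経電子版)

The Yomiuri Shimbun, of which editor-in-chief Tsuneo Watanabe says “we cannot last like this”; the Nihon Keizai Shimbun (Nikkei), which made its digital edition a success; and Yahoo, changing course under new management. The “future of media” as seen through this three-way Romance of the Three Kingdoms.

── Online, Nikkei is the sole winner.

In 2007, when president Ryoki Sugita decided to launch a paid digital edition even at the cost of giving up 5 billion yen in revenue from the free site, it was taken for granted that information on the net was free. The Wall Street Journal was the only newspaper charging money online.

It truly broke the innovator's dilemma.

In the 1970s, Nikkei's then president Jiro Enjoji set out a policy called the “comprehensive information route” (総合情報化路線), which changed the concept of a newspaper company. It created QUICK, which delivers market and other data minute by minute, and Nikkei Telecom, which has an archive function. It mattered greatly that the idea of paper as just one means of delivering information was passed on to the younger generation.

There was also a unique system called the “long-term management plan”. Top performers in their 30s to 50s were gathered from across departments to study a single theme for a year and submit a report to management. From a young age they were trained to think about big themes such as technological innovation apart from their daily work, which prepared the ground for entering new markets.

Why facts cannot change people's minds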

Humans are not wired to react dispassionately to information. Numbers and statistics are necessary and wonderful for uncovering the truth, but they’re not enough to change beliefs, and they are practically useless for motivating action. This is true whether you are trying to change one mind or many—a whole room of potential investors or just your spouse. Consider climate change: there are mountains of data indicating that humans play a role in warming the globe, yet 50 percent of the population does not believe it. Consider politics: no number will convince a hardcore Republican that a Democratic president has advanced the nation, and vice versa. What about health? Hundreds of studies demonstrate that exercise is good for you and people believe this to be so, yet this knowledge fails miserably at getting many to step on a treadmill.

In fact, the tsunami of information we are receiving today can make us even less sensitive to data because we’ve become accustomed to finding support for absolutely anything we want to believe with a simple click of the mouse. Instead, our desires are what shape our beliefs; our need for agency, our craving to be right, a longing to feel part of a group. It is those motivations we need to tap into to make a change, whether within ourselves or in others.

Rodrigo Duterte

Hitler massacred 3 million Jews … there’s 3 million drug addicts. There are. I’d be happy to slaughter them.

If you are corrupt, I will fetch you using a helicopter to Manila and I will throw you out. I have done this before. Why would I not do it again?

Just because you’re a journalist you are not exempted from assassination, if you’re a son of a bitch.

渡辺延志

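The Kahoku Shimpo obtained news of the earthquake ahead of the rest of the country and, through swift reporting, delivered one bulletin after another to the people of the Tohoku region; at the same time, however, the rumor of “Korean riots” also spread widely across Tohoku through the paper's reporting.

In the chaos immediately after the earthquake, accounts given by evacuees from Tokyo made up a large share of the Kahoku Shimpo's coverage, and these accounts, especially concerning violence by Koreans, were full of rumors that grossly distorted or exaggerated the facts.

Yet when I imagine having been there as a newspaper reporter, I cannot help thinking I would have written the same kind of articles. There was no means of confirming whether what one heard was really true.

But there seemed to be no reason for those speaking to lie. The more people one interviewed, the more alike their accounts were. It was a stage at which every newspaper in the country wanted to run even one more article.

In any case, seeking out people who experienced or witnessed an accident or disaster and gathering their testimony is not unusual reporting even today. It could be called a reporter's basic routine.

For example, in 2020 the Japanese government operated charter flights to bring Japanese nationals home from Wuhan, China, where a large-scale outbreak of the novel coronavirus had been confirmed. Many reporters were waiting at the arrival airport. What the returnees said there would have been reported as it was, and the more urgent the story, unimaginable to those watching from inside Japan, the higher its news value would have been.

I cannot help thinking there was a reason the Kahoku Shimpo ran far more articles than any other paper. It no doubt reported diligently, but in addition the conditions were in place for it to hear from the disaster victims.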

Ahmed El Gody

Information manipulation and false content have been perceived and defined differently over time. In the Arab media context, fake news is not a new dilemma, and is more likely to be used as an instrument of content control, influence and public opinion manipulation. This is related to the issue of news dis/misinformation. Audience trust and credibility in Arab media outlets – especially government-owned – is at an all-time low (under 20 percent in various countries). Controlling fake news is becoming a primary concern for the Arab media industry. Source verification and managing organisational resources are an acute dilemma. Using Artificial Intelligence (AI), machine learning and NLP to automate the process of identifying fake news is looked upon as the cornerstone to separate the ‘truth’ from ‘fake’ in the news field. This study aims at assessing the efforts of the Al Jazeera network in controlling fake news in its newsrooms. The study is based on qualitative structured and semi-structured interviews with Al Jazeera newsroom teams and artificial intelligence technology developers. The results showed a variety of efforts being conducted by various Al Jazeera teams to control fake content and prevent Al Jazeera content from being misused. They also showed the importance of the role of artificial intelligence, especially anticipation technologies, in detecting fake sources and managing newsroom operation.

Royal Society for Public Health, UK

What are the potential negative effects of social media on health?

Anxiety and depression

The unrealistic expectations set by social media may leave young people with feelings of self-consciousness, low self-esteem and the pursuit of perfectionism which can manifest as anxiety disorders. Use of social media, particularly operating more than one social media account simultaneously, has also been shown to be linked with symptoms of social anxiety.

Drawing on research findings it identifies the potential negative impacts of social media on health as: anxiety and depression, sleep, body image, cyber bullying and fear of missing out.

Sleep

Sleep and mental health are tightly linked. Poor mental health can lead to poor sleep and poor sleep can lead to states of poor mental health. Sleep is particularly important for teens and young adults due to this being a key time for development.

Numerous studies have shown that increased social media use has a significant association with poor sleep quality in young people.

Body image

Body image is an issue for many young people, both male and female, but particularly females in their teens and early twenties. As many as nine in 10 teenage girls say they are unhappy with their body.

There are 10 million new photographs uploaded to Facebook alone every hour, providing an almost endless potential for young women to be drawn into appearance-based comparisons whilst online. Studies have shown that when young girls and women in their teens and early twenties view Facebook for only a short period of time, body image concerns are higher compared to non-users.

Cyberbullying

Bullying during childhood is a major risk factor for a number of issues including mental health, education and social relationships, with long-lasting effects often carried right through to adulthood. The rise of social media has meant that children and young people are in almost constant contact with each other. The school day is filled with face-to-face interaction, and time at home is filled with contact through social media platforms. There is very little time spent uncontactable for today’s young people. While much of this interaction is positive, it also presents opportunities for bullies to continue their abuse even when not physically near an individual. The rise in popularity of instant messaging apps such as Snapchat and WhatsApp can also become a problem as they act as rapid vehicles for circulating bullying messages and spreading images.

Fear of Missing Out (FoMO)

The concept of the ‘Fear of Missing Out’ (FoMO) is a relatively new one and has grown rapidly in popular culture since the advent and rise in popularity of social media.

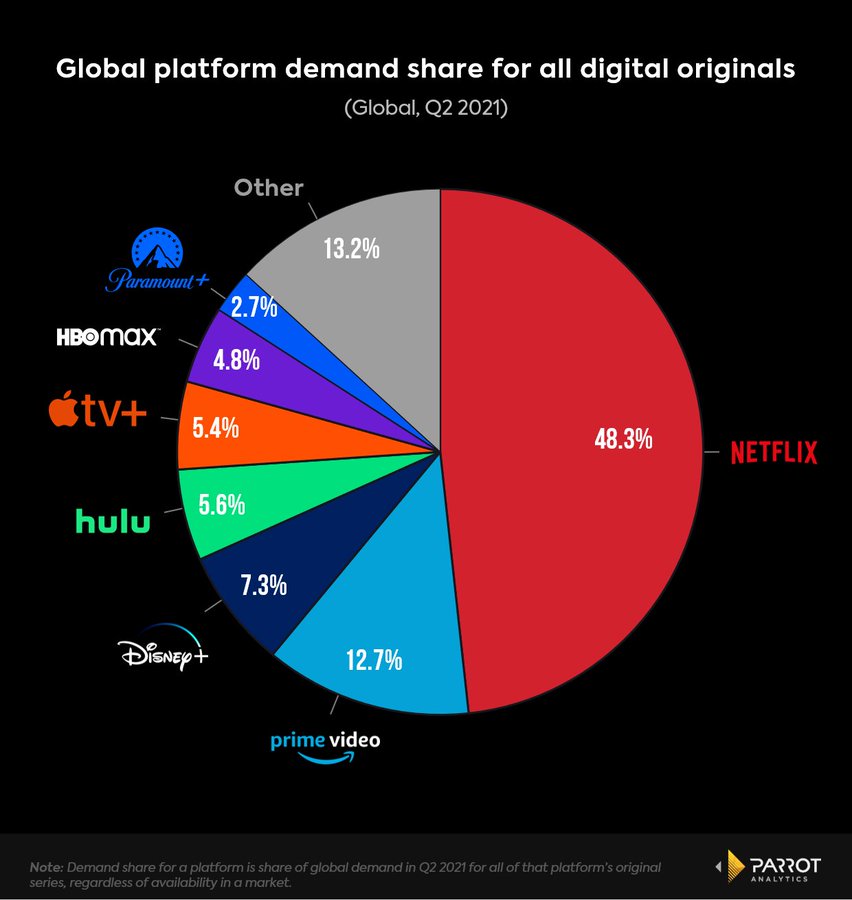

Parrot Analytics

中川淳一郎

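Because I work as an editor of a news site, I spend my days immersed in the net, and frankly the net disgusts me. The people who hurl “die”, “trash” and “scum” at others there disgust me; the people who do nothing but nitpick with “通報しますた” (net slang for “I've reported you”) disgust me; the trivial idol blogs filled with gushing, squealing comments of praise disgust me; and the pointless posts on mixi like “Today's lunch was carbonara (*^_^*)” disgust me. I am sick of it.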

**

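Ten years on from 『ウェブはバカと暇人のもの』 (The Web Belongs to Idiots and People with Too Much Time). The web did belong to idiots and idle people after all. To the question “Does the web still belong to idiots and idle people?”, I answer this: “something” has clearly changed. It is the following.

“The web belongs to idiots, idle people and the winners of an unequal society.”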

上法玄

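Tokyo has countless security cameras. Some Metropolitan Police Department investigators go so far as to boast: “These days no sane person commits a crime in Tokyo. If they do, security camera footage will always lead to their arrest.”

Tohoku University Library

400,000 books fell, leaving the library at a loss over earthquakes: even a 5-degree tilt did not help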

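What Matters

There is something that “matters”

I feel I must think about it

And in fact, many people

begin to think about “what matters”

But “something” always happens

Thinking about “what matters” is forced to stop

and because of that “something”

“what matters” is forgotten

The “something” is different every time

A disaster such as an earthquake or a tsunami

an infectious disease such as the novel coronavirus

a conflict or a war such as the one in Ukraine

Urgent things arrive wearing different faces

The “something” matters a great deal

It matters more than “what matters”

So “what matters” is put off

and no one talks about “what matters” any more

Wait

What was “what matters” again?

If I cannot remember

it must not have mattered after all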

朽木誠一郎

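When a public body recommends something, that information should be something for which several experts have examined global and domestic data and for which the body takes responsibility. It can be said to be more reliable than other information.

And when that information is revised, we need to accept the revision flexibly.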

Ilaria Grasso Macola

In June 2019, the New York Times reported that the US launched cyberattacks into the Russian power grid.

According to the newspaper, US military hackers used American computer code to target the grid as a response to the Kremlin’s disinformation campaign, hacking attempts during the 2018 midterm elections and suspicions of Russia hacking the energy sector.

The story was condemned by President Trump, who called it fake news, and by experts, while the Kremlin said such an attack was a possibility.

According to the 2018 National Defence Authorisation Act, government hackers are permitted to carry out “clandestine military activities” to protect the country and its interests.

Internet Society

The Internet Society supports and promotes the development of the Internet as a global technical infrastructure, a resource to enrich people’s lives, and a force for good in society.

Our work aligns with our goals for the Internet to be open, globally connected, secure, and trustworthy. We seek collaboration with all who share these goals.

Together, we focus on:

- Building and supporting the communities that make the Internet work;

- Advancing the development and application of Internet infrastructure, technologies, and open standards; and

- Advocating for policy that is consistent with our view of the Internet.

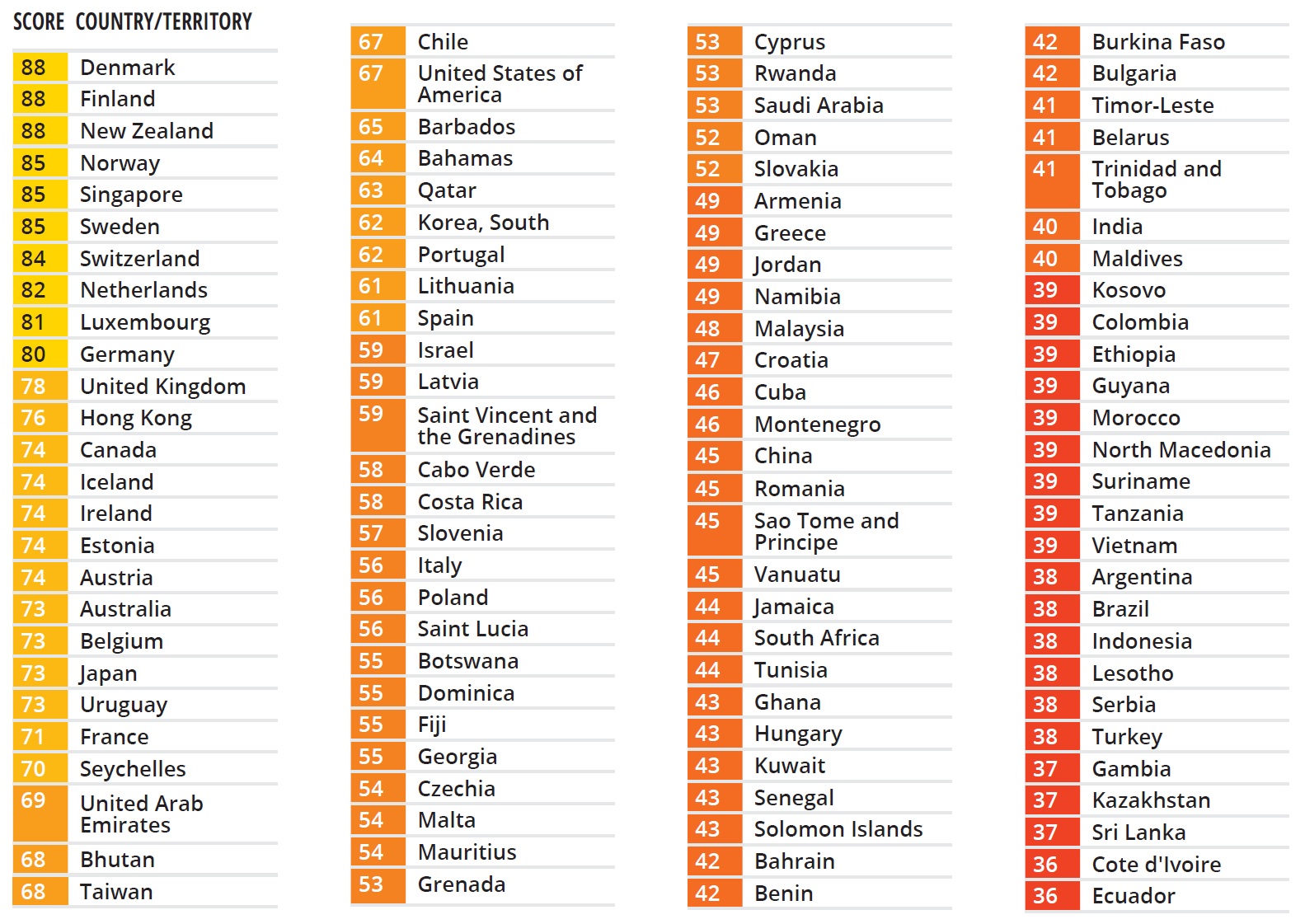

Transparency International

ITU

An estimated 37 per cent of the world’s population – or 2.9 billion people – have still never used the Internet.

New data from the International Telecommunication Union (ITU), the United Nations specialized agency for information and communication technologies (ICTs), also reveal strong global growth in Internet use, with the estimated number of people who have used the Internet surging to 4.9 billion in 2021, from an estimated 4.1 billion in 2019.

This comes as good news for global development. However, ITU data confirm that the ability to connect remains profoundly unequal.

Of the 2.9 billion still offline, an estimated 96 per cent live in developing countries. And even among the 4.9 billion counted as ‘Internet users’, many hundreds of millions may only get the chance to go online infrequently, via shared devices, or using connectivity speeds that markedly limit the usefulness of their connection.

福島香織

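- Doubting is a newspaper reporter's job to begin with: wondering whether a seemingly simple event has a complicated story behind it, or whether a respectable-looking person has a scandal. But around twenty years into the job, you come to see that what you should doubt most is your own sense of justice.

- Reporters cling to justice because reporting the facts does not necessarily have a positive effect on society. If anything, the negative effects are more common. It also always hurts someone. To dull that guilt, they make all sorts of excuses that cast themselves as just. Especially when young.

- I think we have moved from an age in which a few mass media outlets called themselves society's guiding voice (社会の木鐸) and steered public opinion, to one in which diverse media, including individuals' media on the net, present diverse facts and views, and whether these become medicine or poison for society is left to the recipients of the information.

- I think we have moved from an age in which the major media claimed the role of checking those in power, to one in which the voices that recipients of information send out on social media and elsewhere form public opinion and question the rightness of both the government and the media.

- In Japan, saying that it is up to the recipients whether a reported fact becomes poison or medicine for society is called irresponsible of the media. But media researchers in places such as Hong Kong hold that the media's responsibility stops at the single point of reporting the facts, and that if the media are also held responsible for society's reaction, they will start to conceal facts and censor themselves.

- Once you build an absolute justice inside yourself, you inevitably fall into arbitrary reporting. Arbitrary reporting is tolerable, since the diversity of the media corrects it to some degree, but that justice can also become an excuse for concealing information.

- When society was immature, the media were a gathering of intellectuals who prided themselves on teaching and guiding the ignorant masses, on steering runaway public opinion in the right direction. Calling themselves society's guiding voice springs from that mindset. But thanks to the net, the recipients too now form a collective intelligence of their own.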

Byung-Chul Han (Transparency Society)

Transparent language is a formal, indeed, a purely machinic, operational language that harbors no ambivalence. Wilhelm von Humboldt already pointed to the fundamental intransparency that inhabits human language:

Nobody means by a word precisely and exactly what his neighbour does, and the difference, be it ever so small, vibrates, like a ripple in water, throughout the entire language. Thus all understanding is always at the same time a not-understanding, all concurrence in thought and feeling at the same time a divergence.

A world consisting only of information, where communication meant circulation without interference, would amount to a machine. The society of positivity is dominated by the “transparency and obscenity of information in a universe emptied of event.” Compulsion for transparency flattens out the human being itself, making it a functional element within a system. Therein lies the violence of transparency.

David Brin (Transparency Society)

Whenever a conflict appears between privacy and accountability, people demand the former for themselves and the latter for everybody else.

おにぎり (id:kyonbokkun)

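When sudden misfortune strikes, we cannot simply accept that it was bad luck, and we look for a reason.

“Anyone who caught COVID must have been out partying.”

“She was groped because she was wearing a short skirt.”

It is the psychology of blaming victims for their own misfortune in order to reassure ourselves that we are in a safe place.

A society that pillories people who have met with misfortune, making victims suffer twice and driving them into a corner.

People who come to believe the claim that “those who receive a death notice have committed a crime”. Netizens who post malicious comments believing wholeheartedly that it is justice; the difficulty of denying a lie once it has become the truth.

The pillorying of individuals on the Internet, the surveillance society...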

Nina Schick

A deepfake is a type of ‘synthetic media,’ meaning media (including images, audio and video) that is either manipulated or wholly generated by AI. Technology has consistently made the manipulation of media easier and more accessible (ie through tools like Photoshop and Instagram filters). But recent advances in AI are going to take it further still, by giving machines the power to generate wholly synthetic media. This will have huge implications on how we produce content, and how we communicate and interpret the world. This technology is still nascent, but in a few years’ time anyone with a smartphone will be able to produce Hollywood-level special effects at next to no cost, with minimum skill or effort.

While this will have many positive applications – movies and computer games are going to become ever more spectacular – it will also be used as a weapon. When used maliciously as disinformation, or when used as misinformation, a piece of synthetic media is called a ‘deepfake’. This is my definition for the word. Because this field is still so new, there is still no consensus on the taxonomy. However, because there are positive as well as negative use cases for synthetic media, I distinguish a ‘deepfake’ specifically as any synthetic media that is used for mis- and disinformation purposes.

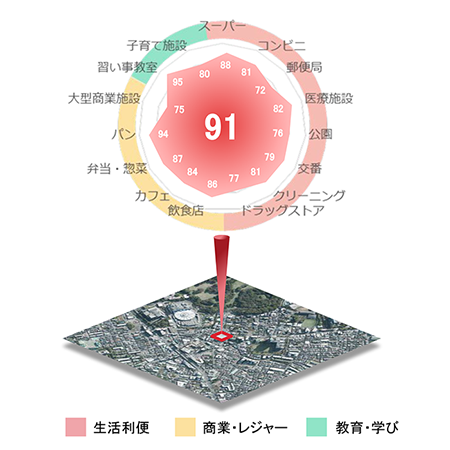

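Nikken Sekkei Research Institute

The Walkability Index is an indicator that scores, out of 100, how densely the area within walking distance of a given point is stocked with “urban amenities” that are good to have nearby in daily life: everyday convenience facilities*, commercial and leisure facilities**, and education and learning facilities***. The index is calculated from various data provided by Zenrin Co., Ltd. and from open data on cities.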

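* Supermarkets, convenience stores, drugstores, medical facilities, parks, etc.

** Restaurants, cafés, bakeries, large commercial facilities, entertainment facilities, sports facilities, etc.

*** Lesson and tutoring schools, bookstores, cultural facilities, childcare facilities, etc.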

Service-Public.fr

Must a pink cardboard driving licence be replaced with a new model?

Replacing the pink cardboard driving licence

You have a pink cardboard driving licence and are wondering what will happen in 2033?

To date, no decision has been taken on replacing it before 2033.

The information on this page remains current and will be updated as soon as an amending text is published.

No, the pink cardboard driving licence remains valid until 19 January 2033.

You do not need to apply for a replacement, except in case of damage, loss or theft.

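Repression

Power hates words

It puts words in handcuffs

shoves them into a patrol car

and locks them behind iron bars

and believes it has brought words under control

But

however tightly they are guarded

words slip through the bars

and get out

Even when they seem to be captured

they slip through the net

People who have seen how unreasonable and how strong power is

seem to cower and fall silent

But people's hearts do not change

Burn the books

delete the texts on the Internet

and still the words in people's hearts

cannot be erased

People can be repressed

but words cannot be repressed

Words are strong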

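The Illusion of Intellect

For better or worse, postwar Japan lived through an age of enlightenment

and in it the contradiction of enlightening people into democracy went unchallenged

Intellectuals stood on one side and enlightened the masses on the other

Knowledge flowed from the intellectuals to the masses

That was far from the democratic ideal in which everyone thinks for themselves

yet people were surely far more open, and easier to bring together, than they are now

For better or worse, today's Japan is living through an age of PR

and in it the deceit of laundering lies goes unchallenged

On one side are the powerful, who can no longer say anything; on the other are people who make a fuss over trifles

The powerful read out inoffensive messages

That is far from the PR ideal of building mutually beneficial relationships

yet people only vent their feelings at a screen and take no action at all

The “Greater East Asia Co-Prosperity Sphere” is replaced by the “Free and Open Indo-Pacific”

“Profiteering from the Olympics” hides behind “the emotion and excitement of the Olympics”

Phrases such as “the Olympics as proof that humanity has defeated the novel coronavirus”

“hosting the Olympics has accelerated the recovery of the disaster-hit areas”

and “a safe and secure Olympics” are played over and over

Every argument is swapped for emotion

data and evidence go unexamined

and gut feeling and crude emotion are used to decide

Not a single person is “the masses”, yet the masses are exploited

and people who do not speak up are swept off to places unknown

Where shall we run?

Shall I go with you to Scandinavia, perhaps?

No, after all, let us stay here

and watch what becomes of it

as if watching a Korean drama

enjoying it, and laughing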

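Turning Away from Print

In a society where people no longer read books

neither book burning nor bans on sale are needed

There is no one to read them in the first place

so banning or not banning makes no difference

Watching video while listening to sound

is more fun than following the letters lined up in a book

so the drift away from books is only natural

and lamenting or worrying changes nothing

The amount of information packed into video and sound

is thousands, no, tens of thousands, no, hundreds of millions of times that in a book

Say what is written in books is fabricated

it is child's play next to fabricated video and sound

The plausibility of edited video and sound

far exceeds the plausibility of reality

Say the information in books is dangerous

it is no match for the danger of video and sound

That video and sound were received passively

is a thing of the past

Now, from the sea of information

we actively pick out video and sound

When being entertaining is what counts

entertaining content is what gets picked

Philosophy hides in the shadow of drama

and literature loses to sport

AI detects what people like

and AI decides what people see

AI knows you

It knows a you that I do not know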

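Hacking

Is the woman who cried a fool, or is the man who deceived her to blame?

So went a song

Is the deceiver to blame, or the deceived?

That argument never ends

Of course the deceiver is to blame

but society is not so kind

Trust someone you should not trust

or pay money without seeing through a lie

and a terrible outcome awaits

Do good and you will be rewarded

do wrong and you will be punished

I would like to believe it as I was told

but the houses that line the wealthy neighbourhoods

could hardly be had without doing wrong

That the one who gets burgled is to blame

that the one who gets bullied is to blame

so bad people speak ill of the weak

Don't be ridiculous

Of course the burglar is to blame

and of course the bully is to blame

Bad people are to blame

That is what we must say

The deceiver is the one to blame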

**

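But the logic that the deceiver is to blame

does not hold for hacking

While we go on saying the hacker is to blame

IT systems are hacked one after another

and a reality spreads in which one can only say

that the one who gets hacked is to blame

There are people called white hat hackers

who always obey the law and never act unethically

and who defend the IT systems of government agencies and private companies

There are also people called black hat hackers

who do not hesitate to break the law or act unethically

and who attack the IT systems of government agencies and private companies

Grey hat hackers sit in between

To defend the IT systems entrusted to them

they sometimes break the law and act unethically

The constraints on white hat hackers are heavy

and anyone who means to defend an IT system

has no choice but to become a grey hat hacker

White hat hackers are poorly paid to begin with

and, denied good tools and environments, are often technically inferior

so they cannot fend off attacks by black hat hackers

Call white hat hackers ethical hackers

or call them computer security experts

keep running penetration tests and one cannot stay white for long

To fend off attacks by black hat hackers

the only way is to let white hat hackers break the law and act unethically

and to give them better tools and environments than the black hat hackers have

Yet giving them good tools and environments

does not mean hacking can be prevented

Our white hat hacker

is a black hat hacker to the other side

and our black hat hacker

is a white hat hacker to the other side

We can only resolve that hacking is a battle

and fight to protect our own side

Just as a defeated warlord could only vanish

a defeated hacker can only vanish

The group that assembles the best hackers

is the one that survives, and from that reality

it is better not to look away

The age when hacking was discussed as right or wrong

ended long ago

In hacking, stronger is obviously better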

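Same Information, Different Information

People exposed to the same information

come to think alike

People exposed to different information

think differently

With someone who reads the same newspaper

you can make yourself understood

With someone who watches the same TV programmes

you get along

Look only at similar sites on the Internet

and you come to assume the whole world is full of such sites

That different sites are in fact far more numerous

never crosses your mind

Those exposed to the same information

feel like allies

Those exposed to different information

feel like enemies

People exposed to the same information gather

and feud with people exposed to different information

Bonds and sympathy with those exposed to the same information

are also hatred and antipathy toward those exposed to different information

The bonds and sympathy of the same information

push away the people of different information

The hatred and antipathy of different information

unite the people of the same information

Those who spread the same information

feel not a shred of guilt

And of course

they are guilty of nothing

Those who spread different information

can hardly be expected to feel guilt

And so

they cannot be charged with any crime

If people of the same information

feel the same way

then it is only natural that people of different information

think differently

Being exposed to different information

is not a bad thing

just as being exposed to the same information

is not a bad thing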

Asahi Shimbun

Partially edited

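Television

Television is boring

Every channel is boring

So, for want of anything better

I tune in to foreign programmes

The people who work at TV stations

are so busy they can barely get home

Overwork piles up

and they fall ill

Sick people gathered together

all of them in a daze

somehow in high spirits

yet sleepy all the same

Programmes made in that state

can hardly be interesting

But more than that, more than anything

TV programmes are not interesting

because they are the programmes their makers want to make

not the programmes I want to watch

The programmes sponsors want to pay for

are well liked and highly rated

in short, programmes you may as well watch or not

programmes that offend no one

To be shown programmes

that pour out of the television

and be brainwashed

or to look at pages

chosen from the Internet

and think

If I must choose between them

I choose the Internet

There are people who can think of anything

only as business

and for such people

television and the Internet alike

are nothing but tools for making money

Which one

leads to more sales

which one

makes people easier to manipulate

such worthless questions are what matter to them

and since such people get together to make them

TV programmes

and Internet content

are not interesting

That television made it easier

than the Internet to manipulate people

is now just an old story

People who used to be deceived en masse

are now deceived one by one

IoT sensors are all over town

AI and blockchain

keep evolving

and become ever harder to see

Through big data analysis

things that should be unknowable

have come to be known

Wherever we are, whatever we do

we are being manipulated

By the powerful and the rich

we are being deceived

Even so, television is boring

People who enjoy watching television

are like aliens to me

So I say, and yet

today again I am watching television

with a foolish look on my face

and my mouth hanging open

watching television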

山中伸弥

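An ordinary illness causes suffering for patients and their families. The novel coronavirus, however, is causing suffering for far more people.

- Those who have been infected, and their families

- Essential workers: those in health care, public transport, logistics, infrastructure, retail, counter services and the like, who keep society running while enduring their own risk of infection as well as prejudice and discrimination. (Recommendation)

- Those deprived of their rights to work, care, education and so on by the restrictions on activity

Bringing this to an end will take a long time. But the night always ends. Until then, all of these people must be protected.

I fall into none of these groups. My greatest contribution to society is to go out as little as possible and so reduce the burden on essential workers. But I am always looking for something more I can do. An extra effort is needed from each and every one of us.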

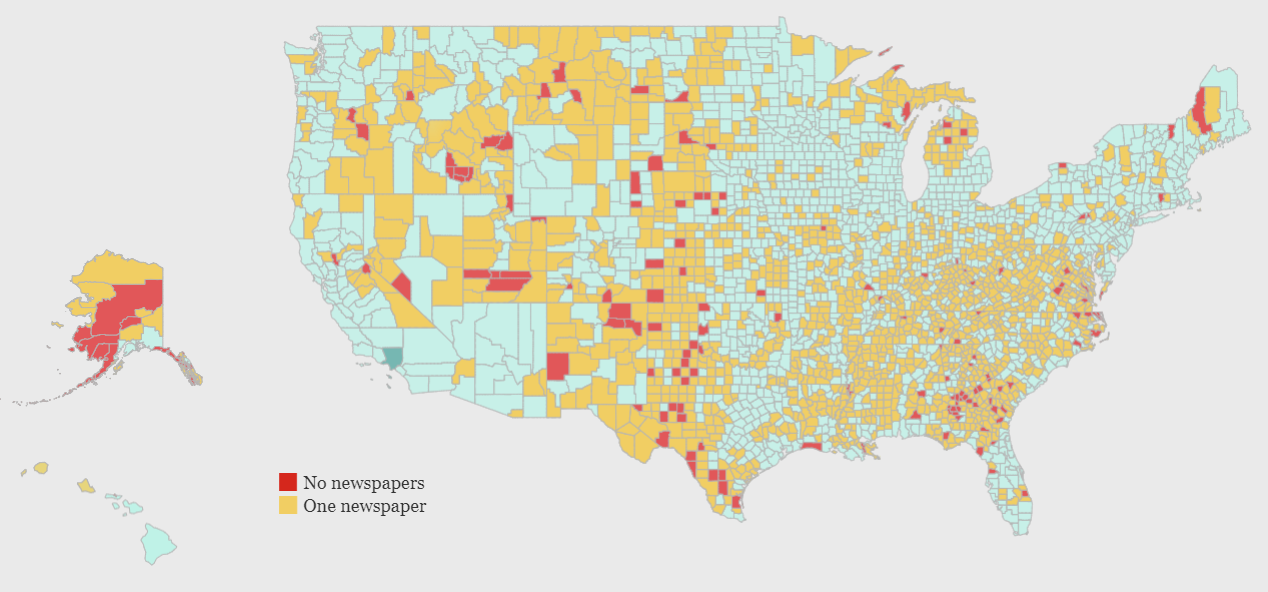

School of Media and Journalism, University of North Carolina at Chapel Hill

Frank Blethen

The COVID-19 pandemic has simultaneously proved the life-and-death importance of local journalism in our democracy and accelerated the destruction of the free press at a scale that only Congress can reverse.

During the novel coronavirus outbreak, readership of The Seattle Times and newspapers nationwide is at an all-time high, but with plummeting advertising revenue. So many papers have laid off staff that journalism enterprises are in danger of not being able to provide the necessary local coverage vital to community connection just when it is most needed: in the midst of a national health and economic crisis.

While the newspaper industry has been struggling with a changing business model precipitated by digital news and advertising platforms, it still has a crucial role to play. When stay-home orders hit, advertisers closed their shops and canceled advertising that supported local newsroom jobs.

Kristina Läsker

久保大

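Earlier I cited published statistics to argue against claims such as that juvenile crime is increasing. I also said that, even so, I am not trying to claim that juvenile crime has improved.

In fact, using the same data, I could just as well have explained the very opposite, that it is increasing, by conveniently cutting off the period I cited, for example at around 2002 (Heisei 14).

In other words, what I realised as I read through the crime statistics is that it is risky to draw any definite conclusion from the statistics themselves, yet it is very easy to cite statistical data selectively in order to reach a given conclusion.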

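Wikipedia

“Controlled society” (管理社会) is a term for one form of society. It is mostly used in a negative sense, of individuals being oppressed through social control.

As information technology develops, thorough information gathering and centralised management of a wide range of social matters become technically possible. The term refers to a society that introduces this comprehensively and actively in order to manage the individuals and organisations within it in a unified way, or to that concept or trend.

That exchanges which were once individual barter came to be managed uniformly through currency, and later that payments came to be settled through banks, can also be called a shift to a controlled society in the economic sphere (経国済民, “governing the nation and relieving the people”). Today, moreover, virtual currencies such as prepaid cards are also spreading.

Depending on how it is operated, all kinds of information about people is also collected, from personal information such as medical history to information on daily life and behaviour such as places visited and purchase history, and it is linked together (laterally as well) as information about a specific individual. An individual's patterns of behaviour can then be grasped.

On the other hand, as the flip side of convenience, individuals' information is entrusted to particular bodies (in most cases the government). This opens the way to unauthorised viewing or tampering by those with access and to information leaks; and as individuals' behaviour is monitored and recorded, their beliefs and tastes can be analysed, so that governments and others suppress individual freedoms and rights, and society becomes extremely unequal and unfree, benefiting only members of particular organisations.

The term came into use in Japanese academic circles and the mass media from the 1960s. It is mostly used in a negative sense, of a society in which individuals' beliefs and actions are monitored, suppressed and controlled. Nineteen Eighty-Four (the novel) depicts, as fiction, a controlled surveillance society dominated by a single party, in which information manipulation reduces ordinary citizens to ignorance and servitude and resistance comes to nothing.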

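Wikipedia

A surveillance society (監視社会) is a society in which excessive surveillance by the police, the military, the military police and the like has arisen.

The Soviet Union, China and North Korea are called surveillance states because the party or the military unilaterally controls and monitors the people. In liberal states too, an excessive heightening of security consciousness against a background of vague fear of crime is discussed as a concern about the shift to a surveillance society; examples include the installation of many surveillance cameras in streets and public facilities and the activities of crime-prevention volunteers, who are sympathisers of the police and could be called organisations of mutual surveillance among citizens. While excessive monitoring of the public by surveillance systems is criticised as a violation of human rights, there is also the dilemma that a society in which no human rights violation can ever occur could only be realised as a surveillance society that monitors for human rights violations.

When one person reacts excessively to another's words or actions, and others respond just as sensitively to that person's, so that many people monitor many people, this is specifically called a mutual surveillance society. On 2ch, Facebook and Internet message boards, there is a custom of monitoring and checking what many people say, of persistently attacking or ignoring anyone whose view of society seems even slightly off, and of issuing warnings and isolating such a person if they make frequent contact; this too is a mutual surveillance society.

By contrast, the “mutual surveillance system” as a corporate management technique of capital is a method in which each department's projects, issues and progress are deliberately disclosed and presented, and mutual checking and exchange of views then draw out competition between departments, to deliver fast, large and reliable results.

If the above is called a “positive mutual surveillance system”, then what might be called a “negative mutual surveillance system” is the method of deliberately making the flows of people, goods and money within a company open rather than leaving them to any one individual. The employer keeps workers constantly aware that they are “being watched” by one another, and can thereby easily deter misconduct and losses within the organisation. Examples include making workers' time cards, attendance records and schedules semi-public (so that each can see the others'), keeping materials and equipment in shared spaces, and having sales rounds and business trips made in pairs.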

Steve Ranger

One of the most significant technologies being targeted by the intelligence services is encryption.

Online, encryption surrounds us, binds us, identifies us. It protects things like our credit card transactions and medical records, encoding them so that — unless you have the key — the data appears to be meaningless nonsense.

Encryption is one of the elemental forces of the web, even though it goes unnoticed and unremarked by the billions of people that use it every day.

But that doesn’t mean that the growth in the use of encryption isn’t controversial.

For some, strong encryption is the cornerstone of security and privacy in any digital communications, whether that’s for your selfies or for campaigners against an autocratic regime.

Others, mostly police and intelligence agencies, have become increasingly worried that the absolute secrecy that encryption provides could make it easier for criminals and terrorists to use the internet to plot without fear of discovery.

As such, the outcome of this war over privacy will have huge implications for the future of the web itself.

The Japan Times

The U.S. National Security Agency sought the Japanese government’s cooperation in 2011 over wiretapping fiber-optic cables carrying phone and Internet data across the Asia-Pacific region, but the request was rejected.

The agency’s overture was apparently aimed at gathering information on China given that Japan is at the heart of optical cables that connect various parts of the region. But Tokyo turned down the proposal, citing legal restrictions and a shortage of personnel.

The NSA asked Tokyo if it could intercept personal information from communication data passing through Japan via cables connecting it, China and other regional areas, including Internet activity and phone calls.

Faced with China’s growing presence in the cyberworld and the need to bolster information about international terrorists, the United States may have been looking into whether Japan, its top regional ally, could offer help similar to that provided by Britain.

Shane Harris

Today, this global surveillance system continues to grow. It now collects so much digital detritus — e-mails, calls, text messages, cellphone location data and a catalog of computer viruses — that the N.S.A. is building a 1-million-square-foot facility in the Utah desert to store and process it.

Wikipedia

Global mass surveillance refers to the mass surveillance of entire populations across national borders. Its roots can be traced back to the middle of the 20th century when the UKUSA Agreement was jointly enacted by the United Kingdom and the United States, which later expanded to Canada, Australia, and New Zealand to create the present Five Eyes alliance. The alliance developed cooperation arrangements with several “third-party” nations. Eventually, this resulted in the establishment of a global surveillance network, code-named “ECHELON” (1971).

Its existence, however, was not widely acknowledged by governments and the mainstream media until the global surveillance disclosures by Edward Snowden triggered a debate about the right to privacy in the Digital Age.

Steven D. Levitt

Information is a beacon, a cudgel, an olive branch, a deterrent–all depending on who wields it and how.

The conventional wisdom is often wrong.

林香里

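- What Japanese society should worry about most is not "distrust of the media" but "indifference to the media", and by extension "indifference to society".

- In Japanese society, the norm that knowing political and economic news is a civic duty is weak, and actual interest is not high either. Nor is much attention paid to the media, or to their work, that is, to how reporting and news are done.

- In Japan, perhaps thanks to years of reliance on safe content, newspaper circulation has stayed higher than in other countries and television viewing time is declining only slowly. But this state of affairs suggests that the image of society reflected by the media and the reality of society have drifted far apart. In other words, in Japan's case the media themselves are not polarized, but the distance between the media and society may be growing ever wider.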

柴山哲也

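Under the Abe administration, well-known anchors who had been critical of the government, such as Shigetada Kishii, Hiroko Kuniya and Ichiro Furutachi, were removed from their programs one after another. The crowning example was the appointment of NHK's president and board of governors. Katsuto Momii, a figure from the business world with no connection to the media, was chosen as president, and the board of governors included well-known right-wing figures such as Naoki Hyakuta and Michiko Hasegawa. On the whole, the selections were notable for people said to be close to Prime Minister Abe. Some kind of sontaku (anticipatory deference) appears to have been at work inside NHK.

What is more, at his inaugural press conference Momii said, "We cannot say left when the government says right," a pro-government remark that blatantly denied the neutrality of public broadcasting and hinted at a change of course for NHK. So NHK is going to lean even further to the right, I thought. Sure enough, Kensuke Okoshi, the anchor of the 9 p.m. news who had been covering nuclear power intently, was abruptly taken off the program.

Recently it has been reported that Ayaka Ogawa, the anchor who partnered with Furutachi on TV Asahi's Hodo Station, is leaving the program. Ogawa calls to mind a cool, American-style television anchor of a kind rare in Japan, someone who states her opinions plainly and conveys the facts calmly. According to online media, she is being replaced because she was critical of the Abe administration. Toru Kenjo of Gentosha, said to be a sympathizer of Prime Minister Abe, has been appointed chair of TV Asahi's Broadcast Program Council.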

Mooyann Shae

InTrust Administrator

Please remember, the information that we are providing is extremely fluid, it is changing on a daily basis, and often getting superseded. What we present here today may very likely be irrelevant or incorrect tomorrow. As such, please know that it is only accurate as of today (certainly not going forward) and that even as of today the information is only our best understanding based on how we have reviewed/interpreted the information.

bookma!

ch.books

望月衣塑子

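I am not posing as a socially conscious journalist, nor am I carried away by the situation I find myself in. If something seems wrong, I keep pressing, whatever happens, until I am satisfied. As a newspaper reporter, I have made it my theme to bring to light what the police and those in power want to hide. To do that, I put question after question, with passion. I simply want to do that obvious thing.

As the way I ask questions began to be covered in the media, I started receiving many requests for interviews and lectures from magazines, television and others. Tokyo Shimbun also receives a great deal of support from members of the public. I am truly encouraged by it, but there is also bashing, suspicious phone calls and indirect pressure.

Still, whether I am spoken of as a hero of justice or labeled an anti-establishment reporter, I feel that both are some distance from who I actually am.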

Joanne McNeil

It’s Time to Unfriend the Internet

The company is one of the biggest mistakes in modern history, a digital cesspool that, while calamitous when it fails, is at its most dangerous when it works as intended. Facebook is an ant farm of humanity.

(“Lurking” doesn’t just highlight the internet’s problems, it also voices her hope for an alternative future. In her final chapter, titled “Accountability,” McNeil compares a healthy internet to a “public park: a space for all, a benefit to everyone; a space one can enter or leave, and leave without a trace.” Or maybe the internet should be more like a library, “a civic and independent body … guided by principles of justice, rights and human dignity,” where “everyone is welcome … just for being.”)

Elisabeth Rosenthal

There is a tradition in China (and likely much of the world) for local authorities not to report bad news to their superiors. During the Great Leap Forward, local officials reported exaggerated harvest yields even as millions were starving. More recently, officials in Henan Province denied there was an epidemic of AIDS spread through unsanitary blood collection practices.

Indeed, even when Beijing urges greater attention to scientific reality, compliance is mixed.

Greta Thunberg, Malala Yousafzai

グロービス

山本マサヤ

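Social media is awash with "information that sounds plausible, though no one knows whether it is true". We need to pick out the correct information from it, but since we are not virus experts, being told to "spot the correct information!" or "improve your information literacy!" does not make it easy.

That is exactly why we need to understand the human brain's habit of "believing the information we want to believe", and to judge whether something is "information we should believe" or "information we want to believe".

There is research showing that false information is retweeted about twice as much as the truth. Information that stokes people's fears or offers novel stimulation spreads regardless of whether it is true and whether it is malicious or well-meant. And people reflexively perceive heavily retweeted information as "information supported by many people". This comes from a habit of the human brain, which, rather than thinking things through each time, tries to judge information and situations with minimal effort. This function is not limited to judging information; it unconsciously influences all sorts of human decisions, such as whether something is good or bad, cheap or expensive.

Unless you know this, you too may be misled by false information about the coronavirus.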

New York Times

Scarlett Johansson

Clearly this doesn’t affect me as much because people assume it’s not actually me in a porno, however demeaning it is. I think it’s a useless pursuit, legally, mostly because the internet is a vast wormhole of darkness that eats itself. There are far more disturbing things on the dark web than this, sadly. I think it’s up to an individual to fight for their own right to their image, claim damages, etc. …

The Internet is just another place where sex sells and vulnerable people are preyed upon. And any low level hacker can steal a password and steal an identity. It’s just a matter of time before any one person is targeted. …

People think that they are protected by their internet passwords and that only public figures or people of interest are hacked. But the truth is, there is no difference between someone hacking my account or someone hacking the person standing behind me on line at the grocery store’s account. It just depends on whether or not someone has the desire to target you. …

Obviously, if a person has more resources, they may employ various forces to build a bigger wall around their digital identity. But nothing can stop someone from cutting and pasting my image or anyone else’s onto a different body and making it look as eerily realistic as desired. There are basically no rules on the internet because it is an abyss that remains virtually lawless, withstanding US policies which, again, only apply here.

Shoshana Zuboff

These data flows empty into surveillance capitalists’ computational factories, called “artificial intelligence,” where they are manufactured into behavioral predictions that are about us, but they are not for us. Instead, they are sold to business customers in a new kind of market that trades exclusively in human futures. Certainty in human affairs is the lifeblood of these markets, where surveillance capitalists compete on the quality of their predictions. This is a new form of trade that birthed some of the richest and most powerful companies in history.

In order to achieve their objectives, the leading surveillance capitalists sought to establish unrivaled dominance over the 99.9 percent of the world’s information now rendered in digital formats that they helped to create. Surveillance capital has built most of the world’s largest computer networks, data centers, populations of servers, undersea transmission cables, advanced microchips, and frontier machine intelligence, igniting an arms race for the 10,000 or so specialists on the planet who know how to coax knowledge from these vast new data continents.

Greta Thunberg, Ingmar Rentzhog

Greta Thunberg has rapidly risen to international fame as a 16-year-old activist rallying global youth against the menace of climate change.

But before she had the attention of the world’s leaders and news editors, Thunberg relied on a public relations expert to help popularize her narrative.

Ingmar Rentzhog, the Swedish founder and CEO of We Don’t Have Time, a startup social network for climate activism, has said he is the one who “discovered” Thunberg. He first promoted her brand last August in a Facebook and Instagram post that went viral, racking up 14,000 likes and nearly 6,000 shares.

Abdullah Shihipar

These days, the very word “data” elicits fear and suspicion in many of us — and with good reason. DNA-testing companies are sharing genetic information with the government. A firm hired by the Trump campaign gained access to the private information of 50 million Facebook users. Hotels, hospitals, and a consumer credit reporting agency have admitted to major breaches. But while many of us are rightfully concerned about the misuse of our personal data by private entities, we should be just as worried about the important national stories that aren’t told when our fellow citizens don’t feel secure enough to share theirs with researchers.

Part of the reason so many of us are nervous about our data and who has access to it is that pieces of our data can be combined to paint a detailed picture of our lives: how much money we make, what we’re interested in, what car we drive. But in a similar way, individual experiences become data points in sets that shape our understanding of what’s happening in this country.

Pablo Martinez Monsivais

EUGDPR

The EU General Data Protection Regulation (GDPR) is the most important change in data privacy regulation in 20 years.

The regulation will fundamentally reshape the way in which data is handled across every sector, from healthcare to banking and beyond.

The aim of the GDPR is to protect all EU citizens from privacy and data breaches in today’s data-driven world.

Luc Rocher, Julien M. Hendrickx, Yves-Alexandre de Montjoye

While rich medical, behavioral, and socio-demographic data are key to modern data-driven research, their collection and use raise legitimate privacy concerns. Anonymizing datasets through de-identification and sampling before sharing them has been the main tool used to address those concerns. We here propose a generative copula-based method that can accurately estimate the likelihood of a specific person to be correctly re-identified, even in a heavily incomplete dataset. On 210 populations, our method obtains AUC scores for predicting individual uniqueness ranging from 0.84 to 0.97, with low false-discovery rate. Using our model, we find that 99.98% of Americans would be correctly re-identified in any dataset using 15 demographic attributes. Our results suggest that even heavily sampled anonymized datasets are unlikely to satisfy the modern standards for anonymization set forth by GDPR and seriously challenge the technical and legal adequacy of the de-identification release-and-forget model.
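The idea of "individual uniqueness" in the abstract above can be illustrated with a much simpler calculation than the authors' copula-based model. The sketch below is not their method: it only counts, on a handful of hypothetical records invented for illustration, what fraction of people become unique as more demographic attributes are combined. The function name `uniqueness` and the attribute names are assumptions.

```python
from collections import Counter

def uniqueness(records, attributes):
    """Fraction of records whose combination of `attributes` appears exactly once."""
    keys = [tuple(r[a] for a in attributes) for r in records]
    counts = Counter(keys)
    return sum(1 for k in keys if counts[k] == 1) / len(keys)

# Hypothetical toy records, invented for illustration only (not real data).
people = [
    {"zip": "02139", "birth_year": 1980, "gender": "F"},
    {"zip": "02139", "birth_year": 1980, "gender": "M"},
    {"zip": "02139", "birth_year": 1975, "gender": "F"},
    {"zip": "02140", "birth_year": 1980, "gender": "F"},
    {"zip": "02140", "birth_year": 1980, "gender": "F"},
]

# Each added attribute can only split groups further, so uniqueness never decreases.
for attrs in (["zip"], ["zip", "birth_year"], ["zip", "birth_year", "gender"]):
    print(attrs, uniqueness(people, attrs))
```

On this toy data the unique fraction rises from 0.0 to 0.2 to 0.6 as attributes are added, which is the same direction of effect the abstract reports at population scale with 15 attributes.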

Christopher Tozzi

Big Data is defined by the following six features:

1. Highly scalable analytics processes – Big Data platforms have become popular due in large part to their ability to scale. The amount of data that they can analyze without a degradation in performance is virtually unlimited. This is what sets these tools apart from traditional methods of investigating data, such as basic SQL queries.

2. Flexibility – Big Data is flexible data. Whereas in the past all of your data might have been stored in a specific type of database using consistent data structures, today’s datasets come in many forms. Effective analytics strategies are designed to be highly flexible and to handle any type of data that is thrown at them. Fast data transformation is an essential part of Big Data, as is the ability to work with unstructured data.

3. Real-time results – Traditionally, organizations could afford to wait for data analytics results. In the world of Big Data, however, maximizing value means gaining insights in real time. After all, when you are using Big Data for tasks like fraud detection, results received after the fact are of little value.

4. Machine learning applications – Machine learning is not the only way to leverage Big Data. It is, however, an increasingly important application in the Big Data world. Machine learning use cases set Big Data apart from traditional data, which was very rarely used to power machine learning.

5. Scale-out storage systems – Traditionally, data was stored on conventional tape and disk drives. Today, Big Data often relies on software-defined scale-out storage systems that abstract data away from the underlying storage hardware. Of course, not all Big Data is stored on modern storage platforms, which is why the ability to move data quickly between traditional storage and next-generation storage remains important for Big Data applications.

6. Data quality – Data quality is important in any context. With the increasing complexity of Big Data, however, has come greater attention to the importance of ensuring data quality within complex data sets and analytics operations. Attention to data quality is a core feature of any effective Big Data workflow.

Jeannette Wing

Geoff Nunberg

So it’s natural to be wary of our new algorithmic overlords. They’ve gotten so good at faking intelligent behavior that it’s easy to forget that there’s really nobody home.

Chris Cannucciari

Martin Hilbert, Priscila López

Cambridge University Library

Six things Darwin never said:

- It is not the strongest of the species that survives, nor the most intelligent that survives. It is the one that is most adaptable to change.

- In the struggle for survival, the fittest win out at the expense of their rivals because they succeed in adapting themselves best to their environment.

- In the long history of humankind (and animal kind, too) those who learned to collaborate and improvise most effectively have prevailed.

- The universe we observe has precisely the properties we should expect if there is, at bottom, no design, no purpose, no evil, no good, nothing but blind, pitiless indifference.

- I was a young man with uninformed ideas. I threw out queries, suggestions, wondering all the time over everything; and to my astonishment the ideas took like wildfire. People made a religion of them.

- The fact of evolution is the backbone of biology, and biology is thus in the peculiar position of being a science founded on an improved theory, is it then a science or faith?

Takuya Kimura

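Preferred Networks (PFN), which conducts research and development of deep learning technology focused on IoT, announced on August 4, 2017 that it had carried out a third-party allotment of new shares to Toyota Motor Corporation, raising about 10.5 billion yen. Through joint research and development with PFN, Toyota aims to advance the application of AI technology to the mobility field.

PFN and Toyota began joint research and development in October 2014, and PFN had likewise raised 1 billion yen from Toyota (December 2015) to strengthen the relationship.

In its press release, PFN said, "With this funding, we will expand our computing environment, secure talented people, and further accelerate the strengthening of our relationship and our joint research and development with Toyota in the mobility business field."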

David Chalmers

I like to distinguish between intelligence and consciousness. Intelligence is a matter of the behavioral capacities of these systems: what they can do, what outputs they can produce given their inputs. When it comes to intelligence, the central question is, given some problems and goals, can you come up with the right means to your ends? If you can, that is the hallmark of intelligence. Consciousness is more a matter of subjective experience. You and I have intelligence, but we also have subjectivity; it feels like something on the inside when we have experiences. That subjectivity — consciousness — is what makes our lives meaningful. It’s also what gives us moral standing as human beings.

Forbes

The World’s Top 10 Highest-Paid Athletes in 2019:

| Rank | Athlete | Sport | Earnings |
| --- | --- | --- | --- |
| 1 | Lionel Messi | Soccer | $127 M |
| 2 | Cristiano Ronaldo | Soccer | $109 M |
| 3 | Neymar | Soccer | $105 M |
| 4 | Canelo Alvarez | Boxing | $94 M |
| 5 | Roger Federer | Tennis | $93.4 M |
| 6 | Russell Wilson | Football | $89.5 M |
| 7 | Aaron Rodgers | Football | $89.3 M |
| 8 | LeBron James | Basketball | $89 M |
| 9 | Stephen Curry | Basketball | $79.8 M |
| 10 | Kevin Durant | Basketball | $65.4 M |

Nisha Mody

In this age of fake news, facts aren’t necessarily the answer. The news is embedded within a broad political spectrum, and acknowledging the slants from the messages we receive is more powerful than grasping for facts.

The media is biased; it is funded by advertisers and run by businessmen. Businesses have interests, the media has interests. … Conversations lose nuance and are skewed for the sake of survival.

It’s also no secret that news publications and television networks are politicized. … The news is not neutral — nothing is neutral.

ICEYE

Satellite data enables efficient mapping and monitoring of the Earth’s resources, ecosystems, and events. The information can be used for various scientific, administrative and commercial applications. Accurate information based on satellite data helps users to understand how we humans affect our cities and environments, which in turn enables data-based decisions and actions. Access to timely satellite data gives an opportunity to take action on what is going on right now, on large and small scales.

The use of satellite data helps governments and industries to share information, to make better decisions, to act on time and to provide improved or totally new services. The original raw satellite images contain data with parameters that can be interpreted via remote sensing software. The parameters can be then combined and verified, for example with spatial data, for further analysis. When activities, issues, changes, and trends can be detected, monitored and analysed more efficiently with satellite data, the benefits for people and environment can be tremendous.

Important applications serve the interests of agriculture, forestry, urban development, insurance, energy, and security-related operators and industries, among others. The volume of applications is huge, and it is rapidly increasing thanks to new innovations.

Synspective

Synspective gathers broad and high frequency monitoring data from our own SAR satellite constellation and extracts information using machine learning technology to better enable decision-making and action by companies and governments. The information has multiple benefits such as visualization and prediction of economic activity, monitoring of terrain and structures, and immediate understanding of disaster situations.

Maarten van Doorn

Since we’re deeply convinced that we need that data, yet it wasn’t there to be found, we went and created some instead. We would like to know about these unknowable things so badly, that we pretend there’s some fact about societal engagement ‘there’, which our surveys tap into.

BBC News

Ian Bremmer

Russia’s parliament approved a law that might allow the country to cordon off its internet from the rest of the world, creating an unprecedented “sovereign” internet.

If Russia is able to pull this off (a very big if), it will be the most tangible step yet toward fracturing the web.

This law will regulate how internet traffic moves through critical infrastructure for the internet. By November internet service providers will have to adopt new routing and filtering technology and grant regulators the authority to directly monitor and censor content they deem objectionable. But the real groundbreaker is the intent to create a national domain name system (DNS) by 2021, probably as a back-up to the existing global system that translates domain names into numerical addresses. If Russia builds a workable version and switches it on, traffic would not enter or leave Russia’s borders. In effect, it means turning on a standalone Russian internet, disconnected from the rest of the world.

Ross Douthat

In our age of digital connection and constantly online life, you might say that two political regimes are evolving, one Chinese and one Western, which offer two kinds of relationships between the privacy of ordinary citizens and the newfound power of central authorities to track, to supervise, to expose and to surveil.

The first regime is one in which your every transaction can be fed into a system of ratings and rankings, in which what seem like merely personal mistakes can cost you your livelihood and reputation, even your ability to hail a car or book a reservation. It’s one in which notionally private companies cooperate with the government to track dissidents and radicals and censor speech; one in which your fellow citizens act as enforcers of the ideological consensus, making an example of you for comments you intended only for your friends; one in which even the wealth and power of your overlords can’t buy privacy.

The second regime is the one they’re building in the People’s Republic of China.

John Walcott

Most of the administration’s case for that war made absolutely no sense, specifically the notion that Saddam Hussein was allied with Osama bin Laden. That one from the get-go rang all the bells — a secular Arab dictator allied with a radical Islamist whose goal was to overthrow secular dictators and reestablish his Caliphate? The more we examined it, the more it stank. The second thing was rather than relying entirely on people of high rank with household names as sources, we had sources who were not political appointees. One of the things that has gone very wrong in Washington journalism is ‘source addiction,’ ‘access addiction,’ and the idea that in order to maintain access to people in the White House or vice president’s office or high up in a department, you have to dance to their tune. That’s not what journalism is about.

We had better sources than she (Judith Miller) did and we knew who her sources were. They were political appointees who were making a political case.

I first met him (Ahmed Chalabi) in ’95 or ’96. I wouldn’t get dressed in the morning based on what he told me the weather was, let alone go to war.

豊田洋一

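I went to see the American film "Shock and Awe" (Japanese title: 記者たち 衝撃と畏怖の真実) on its opening day, on my way home from work.

What hurried me to the cinema was that it is directed by Rob Reiner, who made the memorable film "Stand by Me", and that it is a true story of the struggles of American newspaper reporters during the same period I was posted in Washington.

"Shock and Awe" was the name of the U.S. military operation at the start of the Iraq war in March 2003. It means breaking the Iraqi side's will to fight through overwhelming military force.

The Bush administration's justification for going to war was the existence of weapons of mass destruction, but Iraq had no such weapons. Evidence was fabricated, a war was started on false grounds, and many lives were lost. Most U.S. news organizations passed along the administration's false information uncritically. Leading American newspapers such as The New York Times were no exception.

Amid this, reporters at Knight Ridder, a company that owned regional newspapers, kept reporting on the administration's lies. The film depicted their struggle, interweaving news footage from the time.

At the time, although I was skeptical that weapons of mass destruction existed, I had no choice but to report information from the administration each time it came out. Even as foreign media, we are probably as guilty as the U.S. media.

That power lies is a lesson of history. As a newspaper reporter, how does one stand up to those lies and expose the truth? Watching the end credits, I recalled those days and asked myself.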

Power Estate

Lissa Harris

Intelligence may be written in our genes, but in a language we don’t yet know how to read.

Kian Katanforoosh

Deep learning is a subset of what we call Artificial Intelligence. I would say that AI is about developing machines (computers, smartphones, websites, robots) able to perform tasks that normally require human intelligence, thanks to algorithms mixing mathematics and computer science. It can be seen as a science of automation that applies to any type of industry.

Deep learning is a family of algorithms in artificial intelligence inspired by the interactions between neurons in the human brain, namely “neural networks”. It has recently boomed after outstanding results were observed in applications such as image detection, natural language processing and speech recognition, sometimes exceeding human-level performance.

Andrew Ng

Jay Liebowitz

If intelligence and stupidity naturally exist, and if AI is said to exist, then is there something that might be called “artificial stupidity?”